Toviah Moldwin

3.5K posts

@TMoldwin

Computational neuroscientist @ELSCbrain @Segev_Lab. Singer and guitarist for the rock band @SynfireChain. Dualist. Founder, https://t.co/YMBvt487lT.

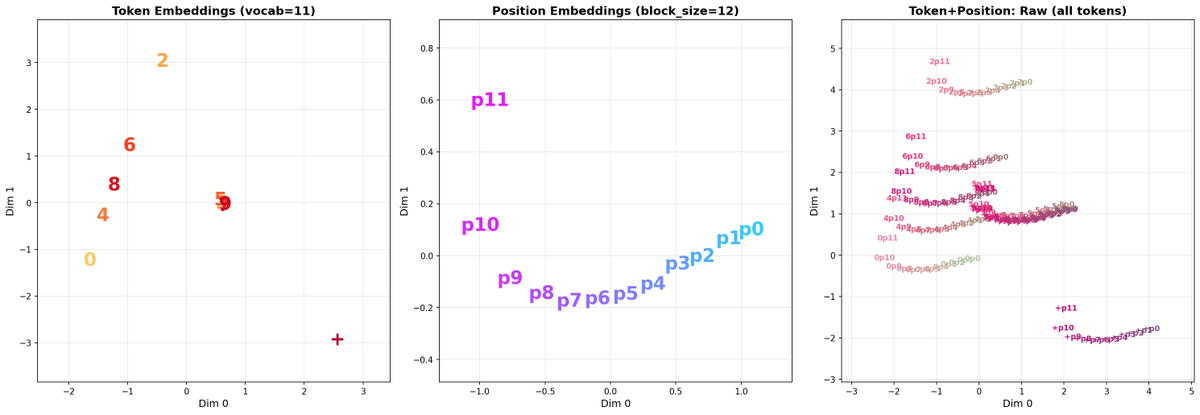

“Girls like smart guys” is indeed true. This is very bad news for a lot of guys, since altering your intelligence is extremely difficult or, on some accounts, impossible.

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/

Hope everyone involved is doing okay

Some branches of neuroscience (and science in general) are unlikely to see real progress for a long time. This stagnation stems from an academic echo chamber that very effectively silences dissent. Young researchers introducing new ideas are often marginalized or driven out before they can establish themselves. There must be quite a few of these people who left academia this way and are now working in industry. The current institutional framework simply won't fund or validate anyone bold enough to challenge entrenched ideas. It's a conformist paradise gated by tenure. BTW, these stagnant areas tend to have dominant senior people who have never been wrong and shall remain "authorities" forever.