TanTheMan retweeted

TanTheMan

662 posts

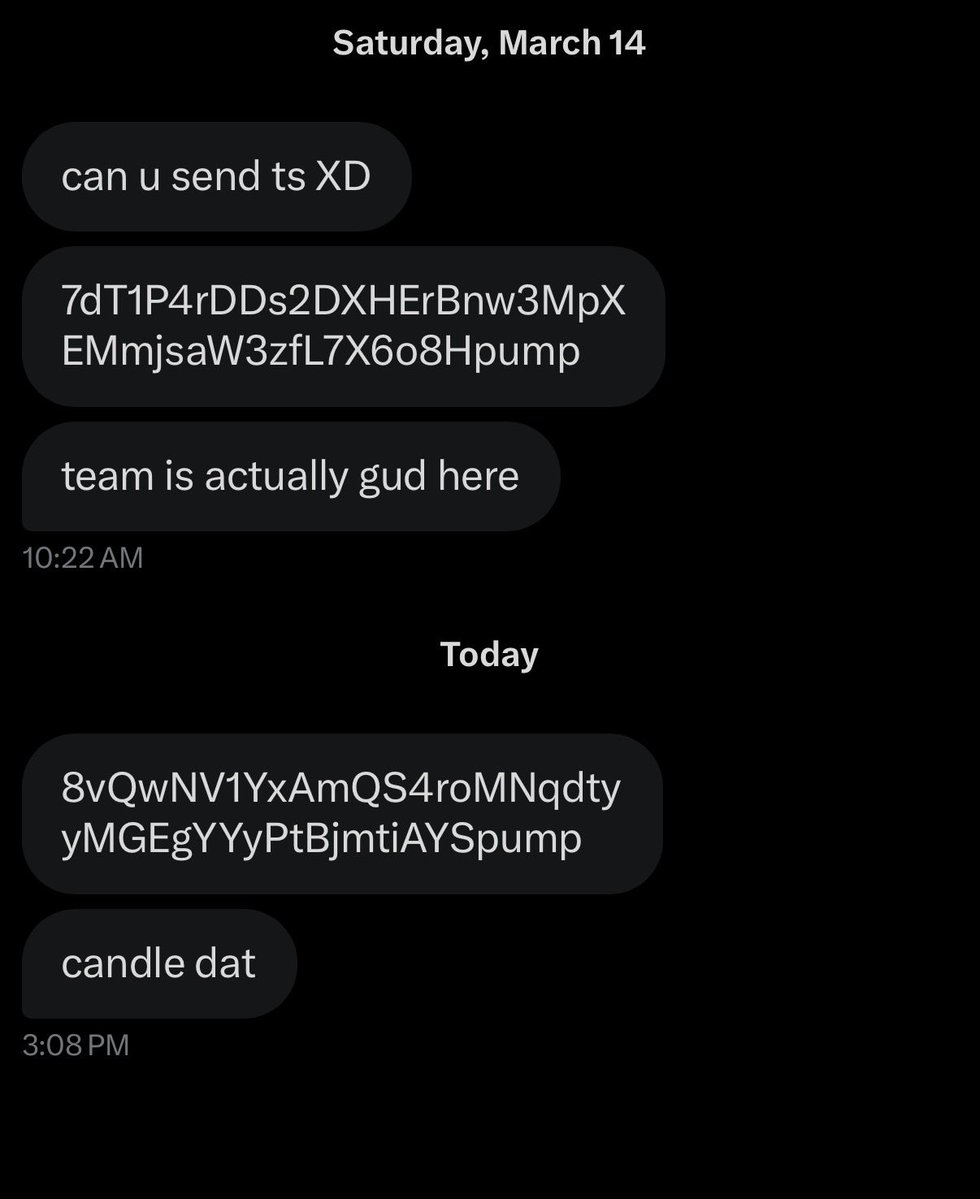

@marmaduke091 You should consider looking into the crypto token community $ARC A1VhJHfsbAAuzk5vaB7Geqon2Hy9QPaJZ9AgoAZFpump. It was made to honor the achievement of ARC-AGI-3, and the community has great people just talking about the fun of the project.

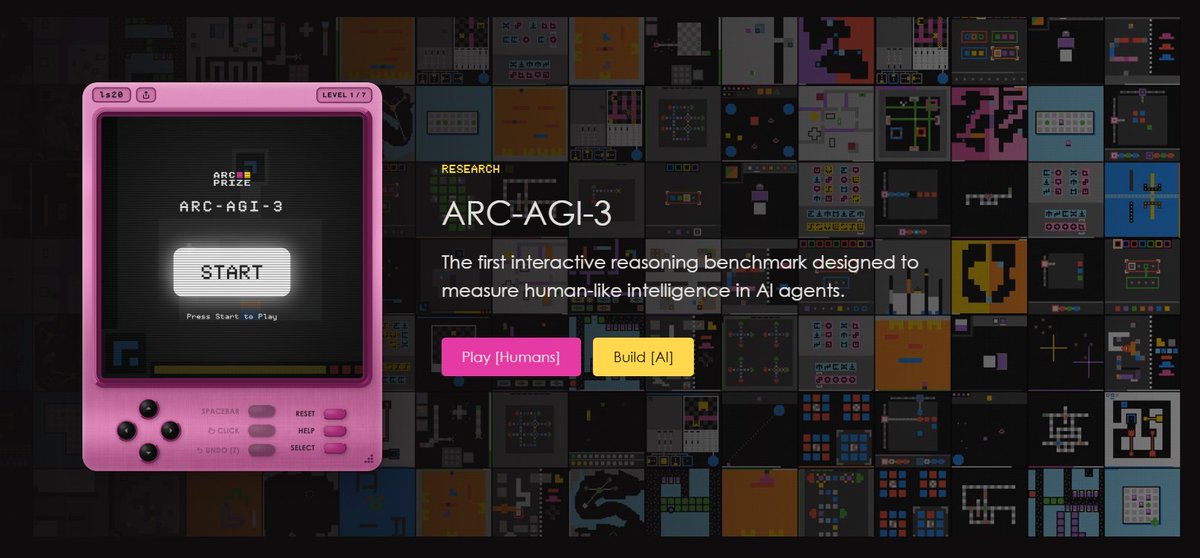

🚨 ARC-AGI-3 is released and the situation is crazy

> No model gets more than 1%

> "We achieved AGI" bros in shambles

> They created an entire game studio to make this benchmark

> Either way it's going to be solved before 2027

It might be over

François Chollet@fchollet

ARC-AGI-3 is out now! We've designed the benchmark to evaluate agentic intelligence via interactive reasoning environments. Beating ARC-AGI-3 will be achieved when an AI system matches or exceeds human-level action efficiency on all environments, upon seeing them for the first time. We've done extensive human testing that shows 100% of these environments are solvable by humans, upon first contact, with no prior training and no instructions. Meanwhile, all frontier AI reasoning models do under 1% at this time.

TanTheMan retweeted

ARC-AGI-3 is here, and all existing AI models are below 1% on the benchmark. It's gonna take a while until this one is saturated.

How it measures intelligence:

- 100% human-solvable environments

- Skill-acquisition efficiency over time

- Long-horizon planning with sparse feedback

- Experience-driven adaptation across multiple steps

"As long as there is a gap between AI and human learning, we do not have AGI."

Keep in mind: ARC-AGI is *not* a final exam that you pass to claim AGI. Including ARC-AGI-3.

The benchmarks target the residual gap between what's hard for AI and what's easy for humans. It's meant to be a tool to measure AGI progress and to drive researchers towards the most important open problems on the way to AGI.

So it's a moving target designed to track the frontier. As AI evolves, the benchmark evolves to spotlight the exact problems we haven't solved yet.

TanTheMan retweeted

For those who didn't understand (yet) how it works.

It's gonna be like a game/race. There are several challenges and tasks already solved by humans.

AIs will be tested on whether they can perform better than humans: time, efficiency, etc.

As AIs learn over time, they should start from nothing and learn along the way. It'll measure how quickly they learn, how effectively, etc.

As people are claiming 'AGI is here', this is the best way to measure how AIs really perform from 0 to AGI. All tasks were proven solvable by humans, so take that as the ceiling. AIs will try to beat humans. Even if they don't, it's like an AI Olympics. It'll show which one is the best so far.

I expect every other AI company to post something about it, as it's a reasonable task. It's not only UI or something like that. It's raw LLM: who does it better.

TanTheMan retweeted

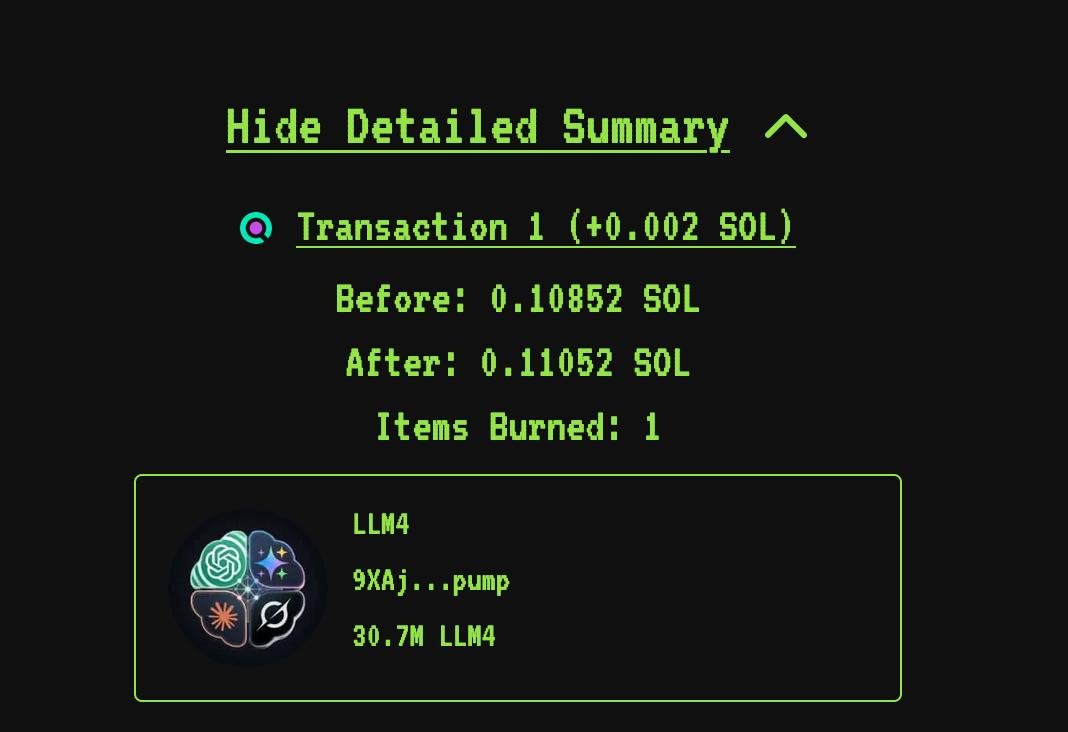

this community will help fund me & take it to the next level :)

I’m going to use fees for computing and training costs, so this will progress the project a ton!

Will post all updates in this community

Thank you @Kimbazxz and everyone else involved.

TanTheMan retweeted

@_Shadow36 Pretty sure he's looking at this one: EVamkzzXKUmxKoxJ6R4E21JhFQq2HyyGs6rbqvHkpump
