Tengda Han

@TengdaHan

Research Scientist @GoogleDeepMind. Previously PhD @Oxford_VGG

Humans learn from unique data (everyone's OWN life), but our visual representations eventually align. In our recent work "Unique Lives, Shared World" @GoogleDeepMind, we train models on "single-life" videos from distinct sources and study their alignment and generalisation.

A SINGLE encoder + decoder for all the 4D tasks! We release 🎯 D4RT (Dynamic 4D Reconstruction and Tracking).
📍 A simple, unified interface for 3D tracking, depth, and pose
🌟 SOTA results on 4D reconstruction & tracking
🚀 Up to 100x faster pose estimation than prior work

Work from @SaynaEbrahimi, myself, and @dilaragoekay, @goolygu, Maks Ovsjanikov, Iva Babukova, @DanielZoran_, Viorica Patraucean, @joaocarreira, Andrew Zisserman and @dimadamen at @GoogleDeepMind. arXiv: arxiv.org/abs/2512.04085

Excited to share our latest work! Grateful for the guidance from all my collaborators, and special thanks to Tengda for being such an amazing mentor during my internship @GoogleDeepMind 😊

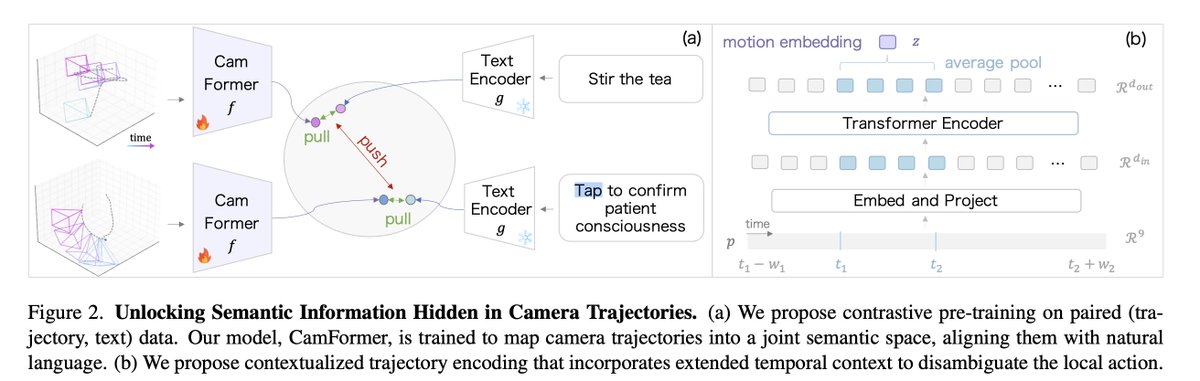

Seeing without Pixels: Perception from Camera Trajectories. @sherryx90099597, Kristen Grauman, @dimadamen, Andrew Zisserman, @TengdaHan. tl;dr: in title. I love such "blind baseline" papers. arxiv.org/abs/2511.21681

Being able to understand, describe and even enjoy movies is one of the pinnacles of computer vision. Interested in movie understanding and audio description? Check out our SLoMO workshop at @ICCVConference #ICCV2025!!

Movies are more than just video clips, they are stories! 🎬 We’re hosting the 1st SLoMO Workshop at #ICCV2025 to discuss Story-Level Movie Understanding & Audio Descriptions! Website: slomo-workshop.github.io Competition: huggingface.co/spaces/SLoMO-W…