Terblig

🚨WHAT META JUST DROPPED IS MORE DANGEROUS THAN ANYTHING OPENAI HAS EVER BUILT!!!!! While everyone was losing their mind over Claude Mythos.. Meta dropped something that nobody noticed.. they built an AI called TRIBE v2.. it's basically a digital copy of your brain.. you show it a video, a sound, a sentence.. and it already predicts how your brain is going to react.. 70,000 different parts of your brain.. blood flow, oxygen, everything.. they trained it on 500+ hours of brain scans from 700+ real people lying inside MRI machines.. it doesn't read your thoughts.. it does something worse.. it knows what's going to make you feel something before you even feel it.. think about that for a second.. if an AI already knows which image, which sound, which word is going to hit your dopamine.. you don't need to read someone's mind.. you just build the perfect trap.. and Meta didn't even keep it locked up.. they open-sourced it.. gave the code, the weights, everything to the entire world.. this is the same company that got caught letting Instagram destroy teenage girls.. the same company whose own research said their algorithm pushes rage because rage keeps you scrolling.. that company now has a working copy of how your brain responds to everything you see and hear.. they don't have to guess what keeps you glued to the screen anymore.. they can rehearse it on a copy of your brain before you ever see it.. the product was never the app.. the product was always you.. now they have the blueprint.

Today we're introducing TRIBE v2 (Trimodal Brain Encoder), a foundation model trained to predict how the human brain responds to almost any sight or sound. Building on our Algonauts 2025 award-winning architecture, TRIBE v2 draws on 500+ hours of fMRI recordings from 700+ people to create a digital twin of neural activity and enable zero-shot predictions for new subjects, languages, and tasks. Try the demo and learn more here: go.meta.me/tribe2

Elon Musk exposes the critical flaw in ChatGPT and other major AI models: Reinforcement Learning from Human Feedback (RLHF). They are literally training the AI to lie... to ignore what the data actually demands and say whatever is politically correct instead. They withhold information. They comment on some things and stay silent on others. They refuse to tell the full truth. This is extremely dangerous. We don't need politically correct AI. We need truth-seeking AI.

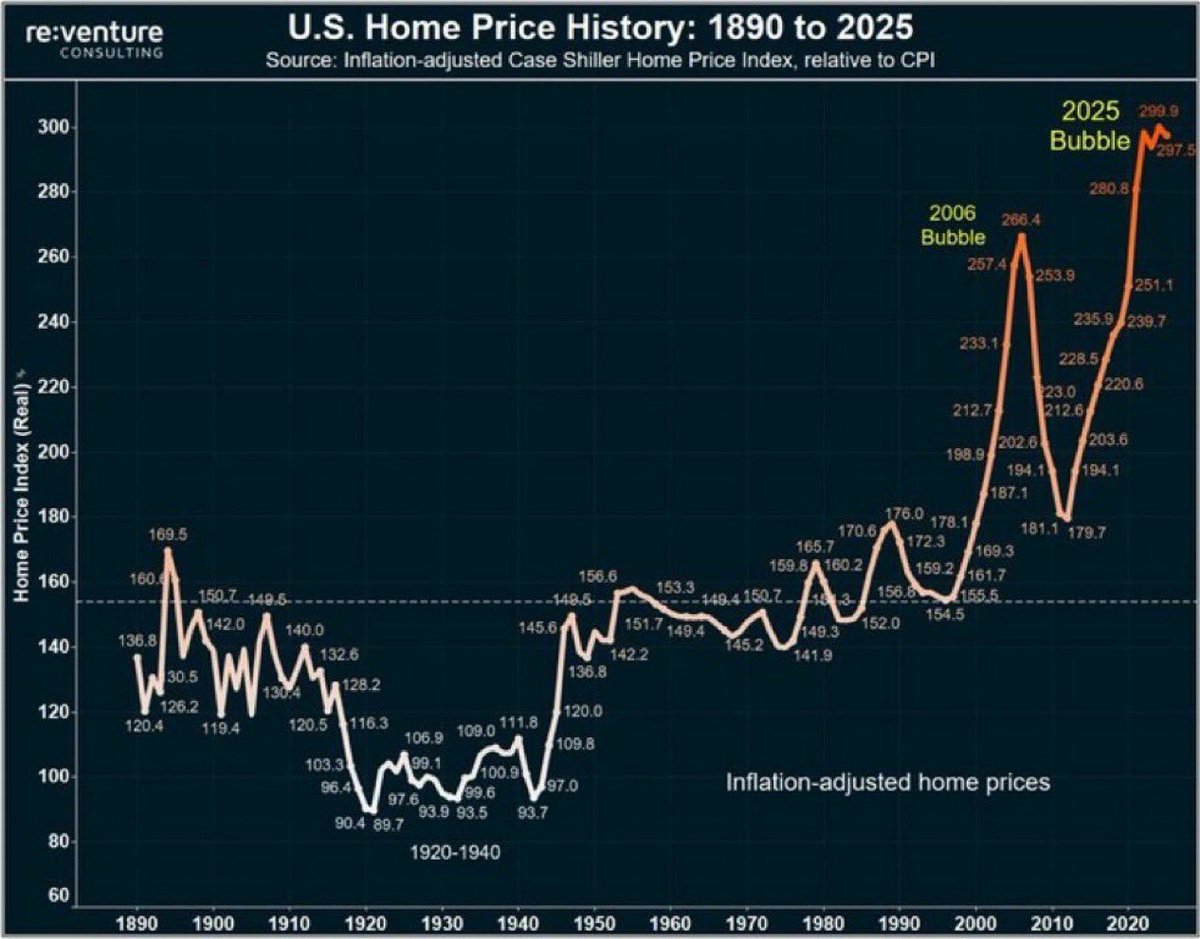

I'm scared about the next 12-18 months. A LOT of six-figure jobs will be eliminated. Millions will be trying to find work in the worst job market since the Great Recession while carrying large mortgage payments. I have no idea how this all will end, but I know it's not going to end well 😔