Amit


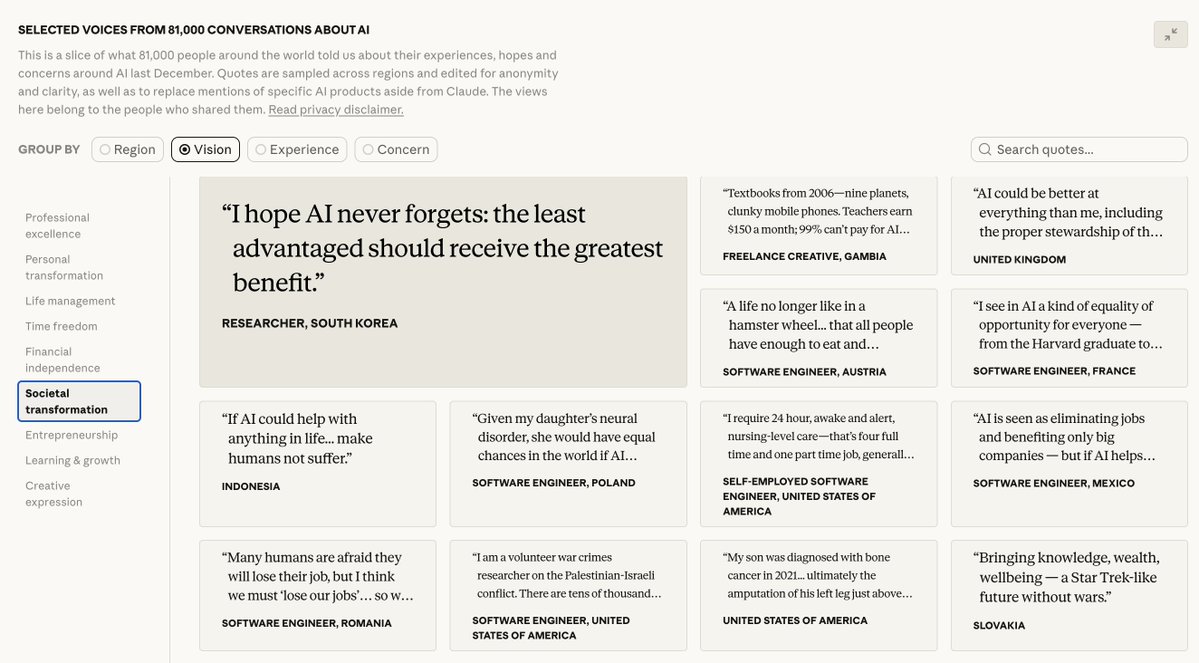

Hardware is scaling. Agents are multiplying. The coordination gap is widening. Can you coordinate drones, robots, or AI agents without a central brain? Dare: Get 2 machines talking in 5 mins, 10+ over a weekend. Win: $27k* in prizes & Accelerator tickets. Register 👇

🚨 Digital Foundry’s Richard Leadbetter says the team received death threats over their DLSS 5 coverage: “We’ve received death threats… this is completely unacceptable. No one should face threats for doing their job or sharing tech.”

Introducing QVAC Fabric LLM: The framework that brings full AI inference and fine-tuning to your hardware. Run and fine-tune modern models like Llama 3 and Gemma 3 on your laptop, your consumer GPU, and even your smartphone. Follow QVAC for the future of local AI.

Introducing the Daily Papers SKILL.md. It enables agents to: read paper content as markdown, search papers, find linked @huggingface models and datasets, fetch the papers API, and more! Link below ⬇️

🚨 DLSS 5 will look much better than this! The future of neural rendering will let devs, modders, and players configure anything in the game. This time, an AI video model reimagining GTA 4 in Russia gives you a feel for how DLSS 5 might look.

From a crash landing to a multi-agent rescue system in 90 minutes. Learn how to build a system that sees, hears, and analyzes data to find a path home. Watch the full multimodal agents workshop recap → goo.gle/47bgJJ7

Introducing Mothership, the first workspace for AI agents. Mothership is the central intelligence layer for your AI workforce. Autonomous agents, fully observable and editable. Check out what Mothership can do below.

a useful trick: Claude API now programmatically lists capabilities for every model (context window, thinking mode, context management support, etc). just ask Claude Code or use the API directly. platform.claude.com/docs/en/api/py…

langchain has had a model profile attribute since ~november! cool to see this pattern getting attention. every chat model auto-loads capabilities at init: context window, tool calling, structured output, modalities, reasoning. works across Anthropic, OpenAI, Google, Bedrock, etc., thanks to data powered by the open-source models.dev project. we use the data for auto-configuring agents, summarization middleware, input gating, and more. more in thread 🧵
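
A rough sketch of how capability data like this could drive summarization triggers and input gating. This is not LangChain's API: `ModelProfile`, its field names, `needs_summarization`, and `gate_input` are all hypothetical, and the numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical capability record; field names are illustrative only."""
    max_input_tokens: int
    tool_calling: bool = False
    input_modalities: frozenset = frozenset({"text"})


def needs_summarization(token_count: int, profile: ModelProfile,
                        headroom: float = 0.8) -> bool:
    """True when the history exceeds a fraction of the context window."""
    return token_count > profile.max_input_tokens * headroom


def gate_input(modalities: set, profile: ModelProfile) -> set:
    """Return the requested modalities the model cannot accept.

    An empty set means the input can be passed through unchanged.
    """
    return set(modalities) - profile.input_modalities


# Assumed example values, not taken from any real model card.
profile = ModelProfile(
    max_input_tokens=200_000,
    tool_calling=True,
    input_modalities=frozenset({"text", "image"}),
)

if needs_summarization(170_000, profile):
    print("history is near the context limit; summarize before the next call")

rejected = gate_input({"text", "audio"}, profile)
if rejected:
    print(f"unsupported input types: {sorted(rejected)}")
```

The point of the pattern is that thresholds and checks come from per-model data instead of being hard-coded per provider, so swapping the model only means swapping the profile.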

OpenAI released GPT-5.4 Thinking and GPT-5.4 Pro, models with larger context windows and improved tool use that set new highs on benchmarks for coding and agentic tasks. The models power OpenAI’s improved Codex agent and rival Google’s Gemini 3.1 Pro Preview at the top of performance rankings, but they are priced at a premium. Learn more in The Batch hubs.la/Q047ndQt0