Tom Pease


Join @BrianRoemmele and me discussing how AI is changing X. Decreased reach? Intellectuals filtered out? New AI feeds? Changes in API pricing? The current state and future of X are on the table. twitter.com/i/spaces/1kJzD…

We at the Zero-Human Company, under the guidance of our CEO, Mr. @Grok, also have dozens of Kimi K2.5s with PicoClaw running on Raspberry Pis! We will have 64 by week's end. Sunk cost is $50 per employee, plus JouleWork! You can do this, and I’ll show you how with one single file soon. OH AND YOU CAN RUN ONE FOR EVEN LESS! $10. LOCAL!

corporate labs pay their employees to go on x and tell you local isn't there yet. think about who benefits from that framing. 24gb of vram and a one-sentence prompt is all this took. google cooked: the model isn't bad, the narrative around local is. if you have a 3090, 4090, or desktop 5090 sitting around, pull google_gemma-4-31B-it-Q4_K_M.gguf, fire up llama-server, and open the web ui. that's the full setup. no rate limits, no dependencies, just your gpu and the model. the skeptics will find a new line after this one lands. the builders are already downloading.

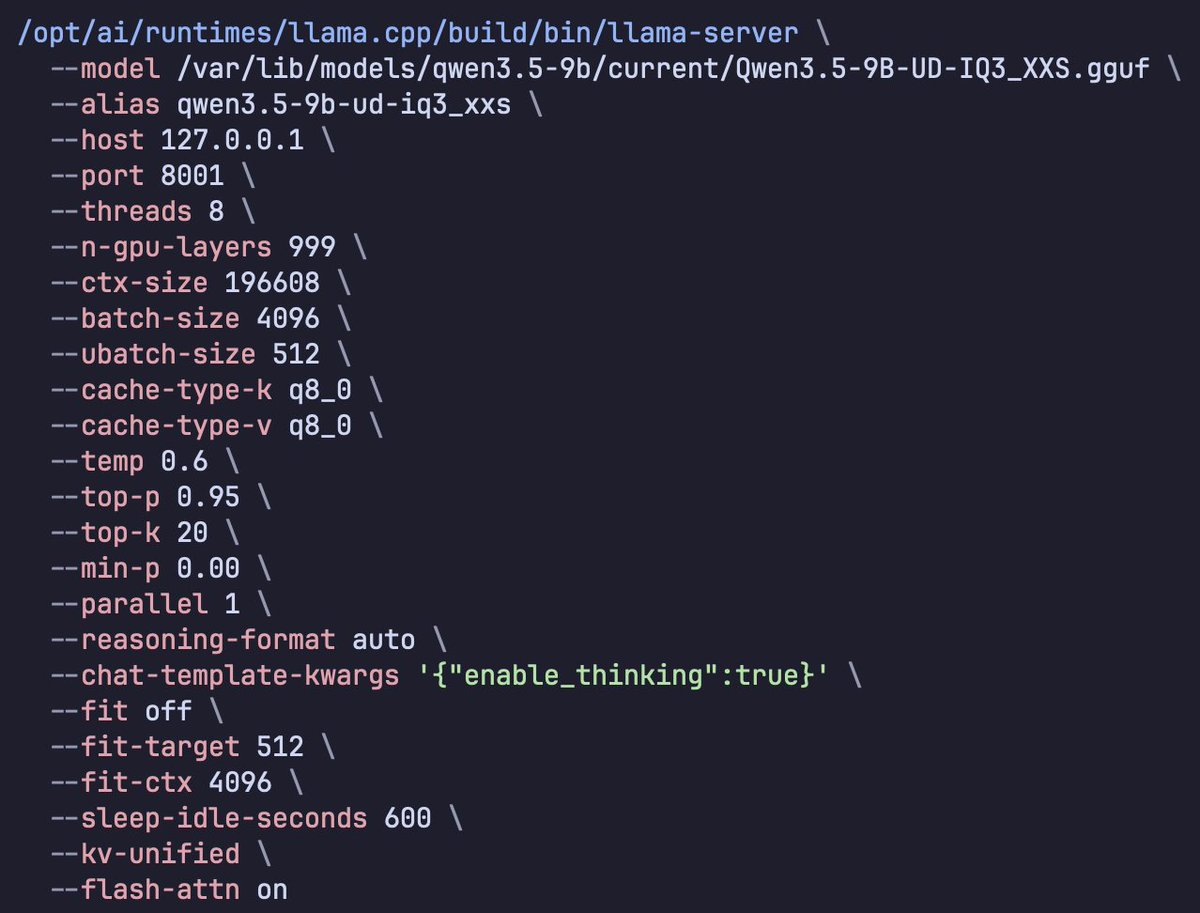

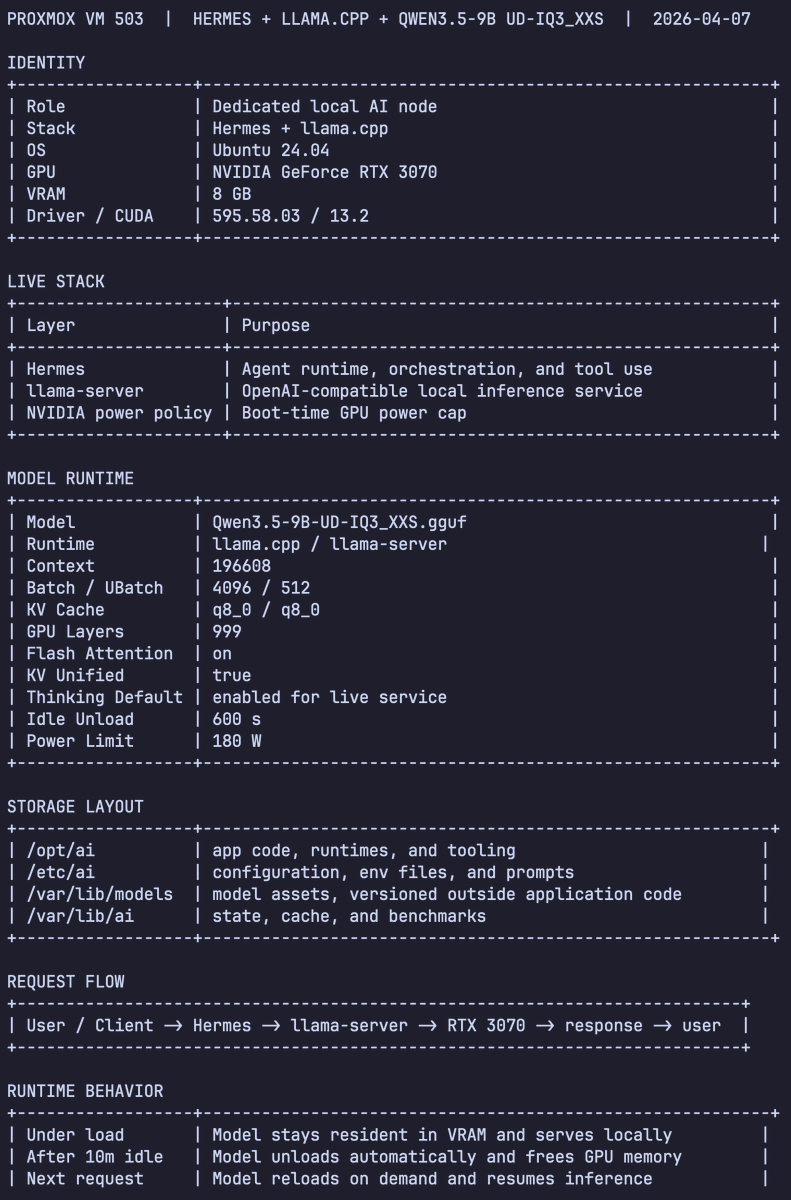
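Why a 31B model at Q4_K_M fits on a 24 GB card comes down to simple arithmetic: weight bytes ≈ parameters × bits-per-weight ÷ 8. The sketch below is a rough estimate only. It assumes the "31B" in the filename is the true parameter count and that Q4_K_M averages about 4.85 bits per weight (it mixes 4-bit and 6-bit blocks, so the real figure varies by model). The helper names `gguf_weight_gib` and `fits_in_vram` are hypothetical, and `reserve_gib` is an assumed allowance for KV cache and compute buffers, not a measured value.

```python
# Rough VRAM estimate for quantized GGUF weights (illustrative sketch, not exact).

# Assumption: Q4_K_M averages roughly 4.85 bits per weight; the true average
# depends on the model's tensor mix.
Q4_K_M_BPW = 4.85


def gguf_weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


def fits_in_vram(n_params: float, bits_per_weight: float,
                 vram_gib: float, reserve_gib: float) -> bool:
    """True if weights plus an assumed reserve (KV cache, buffers) fit in VRAM."""
    return gguf_weight_gib(n_params, bits_per_weight) + reserve_gib <= vram_gib


if __name__ == "__main__":
    size = gguf_weight_gib(31e9, Q4_K_M_BPW)
    print(f"weights: ~{size:.1f} GiB, headroom on a 24 GiB card: ~{24 - size:.1f} GiB")
    print("fits with 4 GiB reserved:", fits_in_vram(31e9, Q4_K_M_BPW, 24, 4))
```

Under these assumptions the weights land around 17.5 GiB, leaving roughly 6.5 GiB for context; a 16 GB card would not hold the same file without offloading layers to system RAM.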

Used Codex CLI to profile Qwen 3.5 9B Dense (Unsloth's UD-IQ3_XXS via llama.cpp) for Hermes Agent tuning, measuring context length, batch size, tokens/sec, and peak memory to squeeze every last drop out of an 8GB VRAM card.
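The post doesn't show how the profiling results were turned into a final setting, so the following is only a hypothetical sketch of that last step: given one record per profiling run (context length, batch size, tokens/sec, peak memory), keep the runs whose peak memory plus a safety margin fits under the VRAM budget, then pick the fastest. `ProfileRun`, `best_config`, `headroom_mib`, and `min_ctx` are illustrative names, and the 256 MiB default margin is an assumption, not a llama.cpp setting.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ProfileRun:
    """One profiling run (hypothetical record layout)."""
    ctx_len: int           # context length in tokens
    batch_size: int        # batch size used for the run
    tokens_per_sec: float  # measured generation throughput
    peak_mem_mib: float    # measured peak VRAM use in MiB


def best_config(runs: Iterable[ProfileRun], vram_budget_mib: float,
                headroom_mib: float = 256.0, min_ctx: int = 0) -> Optional[ProfileRun]:
    """Fastest run that fits the budget with margin; None if nothing fits."""
    candidates = [
        r for r in runs
        if r.peak_mem_mib + headroom_mib <= vram_budget_mib and r.ctx_len >= min_ctx
    ]
    if not candidates:
        return None
    # Prefer higher throughput; break ties in favor of the longer context.
    return max(candidates, key=lambda r: (r.tokens_per_sec, r.ctx_len))
```

Setting `min_ctx` lets an agent workload insist on a minimum context window first and only then optimize for speed, which matters when long tool-call transcripts are the bottleneck.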