Tom Stalnaker

1.9K posts

@TomStal45

Christian, Conservative, Husband, Father. Born in Almost Heaven. War Eagle 🦅. Go Gators 🐊. I rarely reply to DMs. May want to check out my X Lists.

Grokipedia is growing like kelp on steroids 😂 Please check Grokipedia.com articles you know something about and suggest edits for accuracy. Would be much appreciated. This will be by far most comprehensive open source, no copyright distillation of knowledge.

Which Super Bowl Halftime show are you watching?

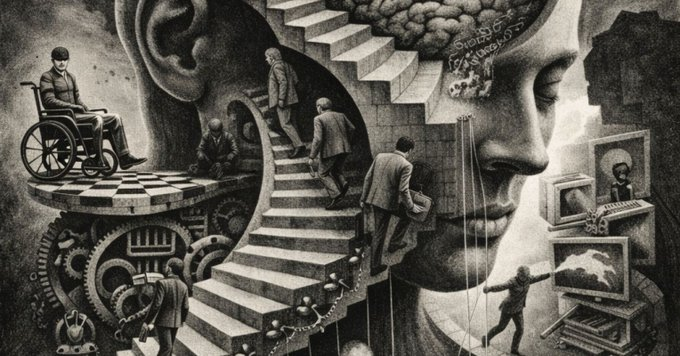

Superintelligence is coming in 10–20 years (maybe sooner) — according to the Godfather of AI, Geoffrey Hinton. Hinton's stark reality check: ChatGPT & Gemini already know thousands of times more than any human. Unbeatable at chess, Go, and most knowledge tasks. Steven Bartlett: "What am I better at?" Hinton: "Probably interviewing CEOs… for now." 2:07 clip — the moment we accept we're no longer the smartest beings on Earth👇 That timeline: Exciting… or terrifying? Your honest take.

Silicon Valley Dem Ro Khanna slams California officials over ‘$72B fraud,’ calls for congressional hearing | Annie Gaus, New York Post

Congressman Ro Khanna raged at billions in alleged fraud in Gov. Gavin Newsom’s California when he called for a full audit of state spending seemingly aimed at the governor’s leadership. The Silicon Valley Democrat boosted claims on X of billions in fraud in California and vowed to hold congressional hearings on waste and abuse of taxpayer dollars in the state, sparking a spat with Newsom’s sassy spokesperson Izzy Gardon, who defended California’s exorbitant High-Speed Rail project that’s widely considered a boondoggle. Khanna is apparently mulling a presidential run, like Newsom, who said he is “considering” a run in 2028.

“Today, I am announcing that in 2026 I will be working on a bipartisan basis on Oversight to request hearings on state governments’ high risk programs, including California, that have led to illegal payments and eligibility errors,” Khanna wrote on X Tuesday. “I also will work on legislation to call for a full independent audit of California’s budget.”

Khanna called for oversight following social media allegations of $72 billion worth of fraud in California, an estimate that appears roughly extrapolated from state auditor reports pointing to risks in programs like the Employment Development Department, along with cost overruns in the infamous High-Speed Rail project, intended to eventually connect San Francisco and Los Angeles. Khanna, who’s carved out a lane as a pro-business progressive, was excoriated by tech barons this week after voicing support for a proposed 5% wealth tax on billionaires.

“One fair critique is the lack of accountability and the corruption in Sacramento,” Khanna conceded in a Saturday post on X before calling Sacramento fraud “outrageous and appalling.” “There needs to be full accountability for the waste and new leadership in Sacramento. Taxpayers are owed an accounting of where every penny of their tax dollars are going — a detailed receipt,” Khanna continued.

Past reports from the California State Auditor have highlighted problems in state government ranging from up to $31 billion worth of fake unemployment claims and millions in unused cellphones, to a lack of anti-fraud controls in the state’s extensive homelessness spending. Gardon called the $72 billion a “MAGA made-up number” and boasted of “16,000 union jobs” from the long-delayed High-Speed Rail.

Khanna later clarified on X that the “precise number needs to be assessed of mismanagement & waste during Covid, and other misspending on the high speed train and risks highlighted by auditor report.” “We should have GAO look at it,” he said, referring to the Government Accountability Office, a congressional watchdog.

The High-Speed Rail was first approved by voters in 2008 and early estimates pegged the project at around $33 billion, with service beginning in 2020. Costs have since ballooned to more than $128 billion, per Reuters, with service expected in 2033. While 60 “structures” have been built and 171 miles are in the “design & construction” phase, according to Newsom’s office, no track has been laid.

The state recently dropped a lawsuit challenging the federal government’s revocation of $4 billion in funds for the High-Speed Rail, aiming to raise private funding for the project. The Federal Railroad Administration issued a 315-page report in June describing High-Speed Rail budget shortfalls, missed deadlines and inaccurate ridership estimates.

nypost.com/2025/12/31/us-…