Tropical Dog

91 posts

Meet the new Stitch, your vibe design partner. Here are 5 major upgrades to help you create, iterate and collaborate:
🎨 AI-Native Canvas
🧠 Smarter Design Agent
🎙️ Voice
⚡️ Instant Prototypes
📐 Design Systems and DESIGN.md
Rolling out now. Details and product walkthrough video in 🧵

2 weeks without smartphone internet significantly improved sustained attention. The effects were similar to being a decade younger.

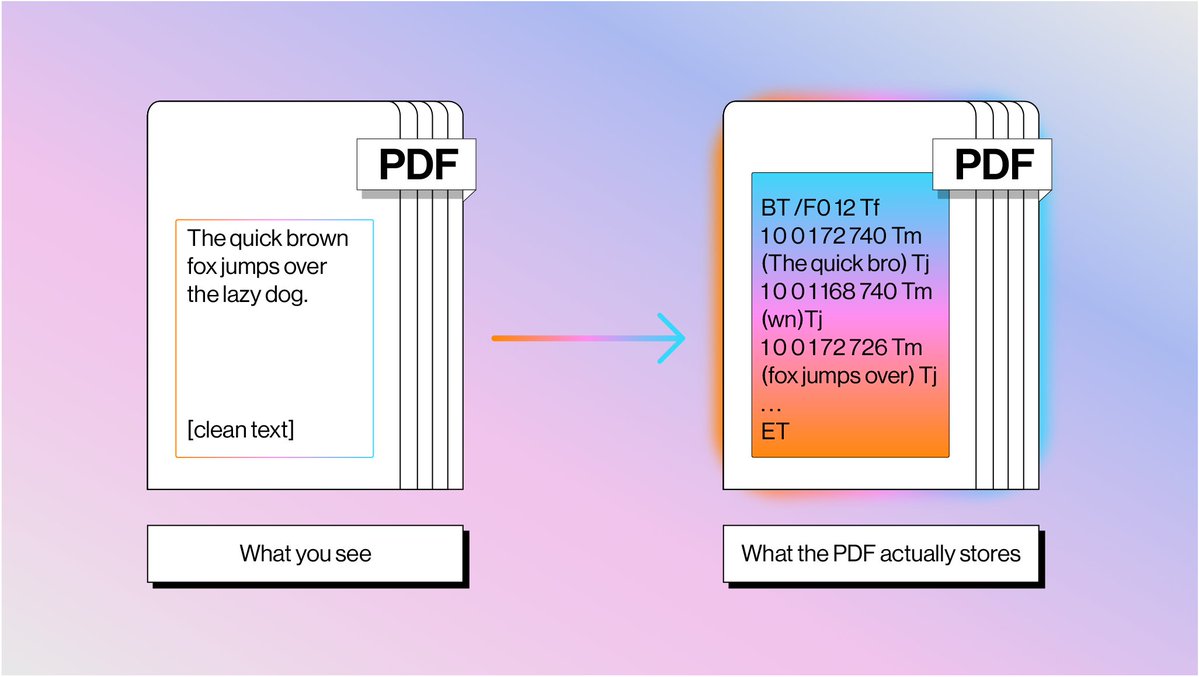

PDFs are the bane of every AI agent's existence: here's why parsing them is so much harder than you think 📄
Every developer building document agents eventually hits the same wall: PDFs weren't designed to be machine-readable. They're drawing instructions from 1982, not structured data.
📝 PDF text isn't stored as characters: it's glyph shapes positioned at coordinates with no semantic meaning
📊 Tables don't exist as objects: they're just lines and text that happen to look tabular when rendered
🔄 Reading order is pure guesswork: content streams have zero relationship to visual flow
🤖 Seventy years of OCR evolution led us to combine text extraction with vision models for optimal results
We built LlamaParse using this hybrid approach: fast text extraction for standard content, vision models for complex layouts. It's how we're solving document processing at scale.
Read the full breakdown of why PDFs are so challenging and how we're tackling it: llamaindex.ai/blog/why-readi…
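The reading-order problem is easy to underestimate. Here is a minimal sketch, assuming a hypothetical extractor that already hands back positioned text runs; `TextRun`, `reading_order`, and `line_tolerance` are illustrative names, not LlamaParse's API or any library's. It groups runs into lines by vertical position and orders each line left to right, which recovers the order of a simple single-column page no matter what order the content stream emitted the runs in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TextRun:
    """Hypothetical positioned text fragment, as a PDF text extractor might report it."""
    x: float   # left edge, in points
    y: float   # vertical position, in points; assumed to grow downward in this sketch
               # (PDF's default user space actually has y growing upward)
    text: str


def reading_order(runs: list[TextRun], line_tolerance: float = 3.0) -> list[str]:
    """Naive single-column heuristic: cluster runs into lines, then read each line left to right."""
    lines: list[list[TextRun]] = []
    # Visit runs top to bottom (then left to right), ignoring content-stream order.
    for run in sorted(runs, key=lambda r: (r.y, r.x)):
        # Join the current line if this run sits within the tolerance of the line's first run.
        if lines and abs(run.y - lines[-1][0].y) <= line_tolerance:
            lines[-1].append(run)
        else:
            lines.append([run])
    # Within each line, order runs by x and join them with spaces.
    return [" ".join(r.text for r in sorted(line, key=lambda r: r.x)) for line in lines]


if __name__ == "__main__":
    # Runs listed in a scrambled, content-stream-like order.
    runs = [
        TextRun(300, 100, "world"),
        TextRun(72, 120, "Second"),
        TextRun(72, 100, "Hello"),
        TextRun(140, 121, "line"),
    ]
    print(reading_order(runs))  # ['Hello world', 'Second line']
```

Multi-column pages, tables, and rotated text defeat a heuristic like this, which is the kind of complex layout the post hands off to vision models.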

🧪 Terra Classic Testnet Update: SDK v0.53
The rebel-2 testnet has been running Cosmos SDK v0.53.x for 3 weeks. Core chain services are stable, and the network is still under active testing.
This upgrade moves Terra Classic onto the latest Cosmos SDK (v0.53.4) and IBC V2 (IBC-go v10.3.0). A notable ecosystem change is an upstream SDK behavior update affecting tx logs. Developer guidance and compatibility notes are published at the link below.
📄 Testnet results & dev guidance: hackmd.io/@orbitlabs/SJuJqIJ8We

— Orbit Labs | Terra Classic Devs