Varshith Reddy

@VarshithhReddy_

Full Stack Developer | Next.js, MERN, TypeScript | https://t.co/RxPTn1Nckd IT @ VJIT | Building Scalable Web Apps

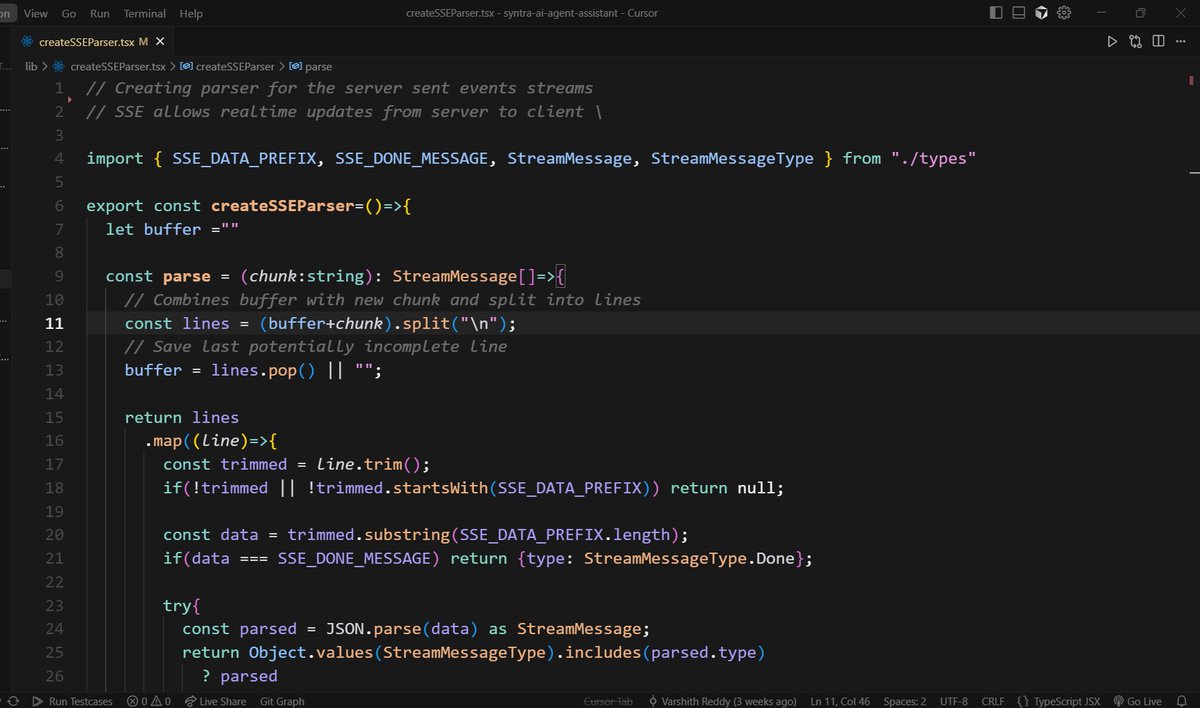

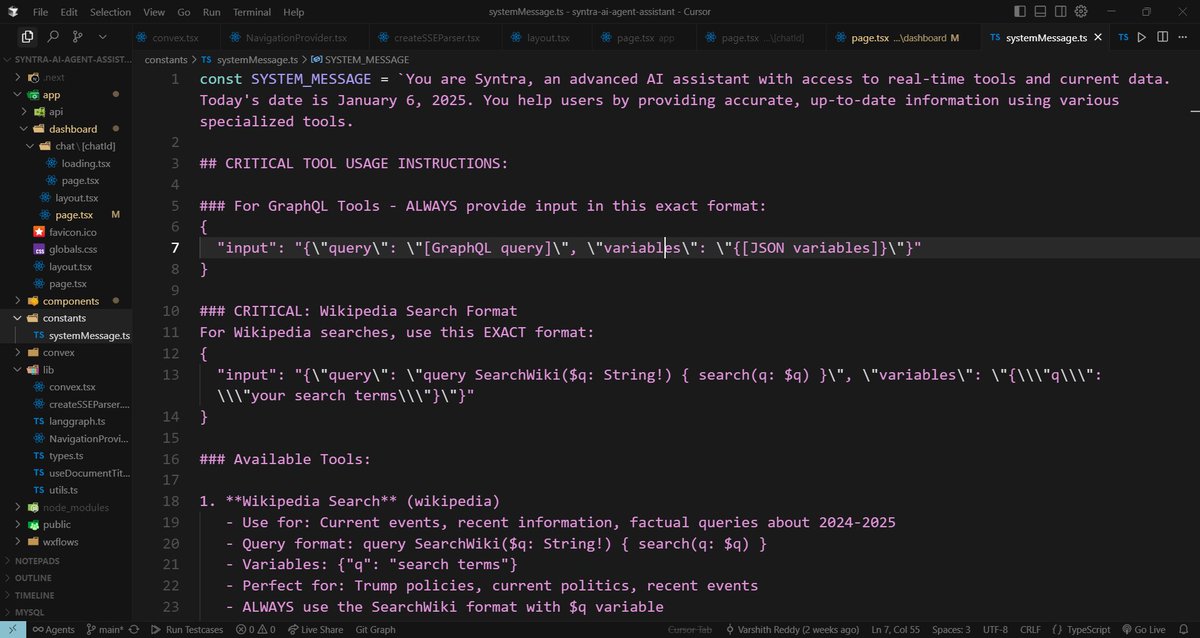

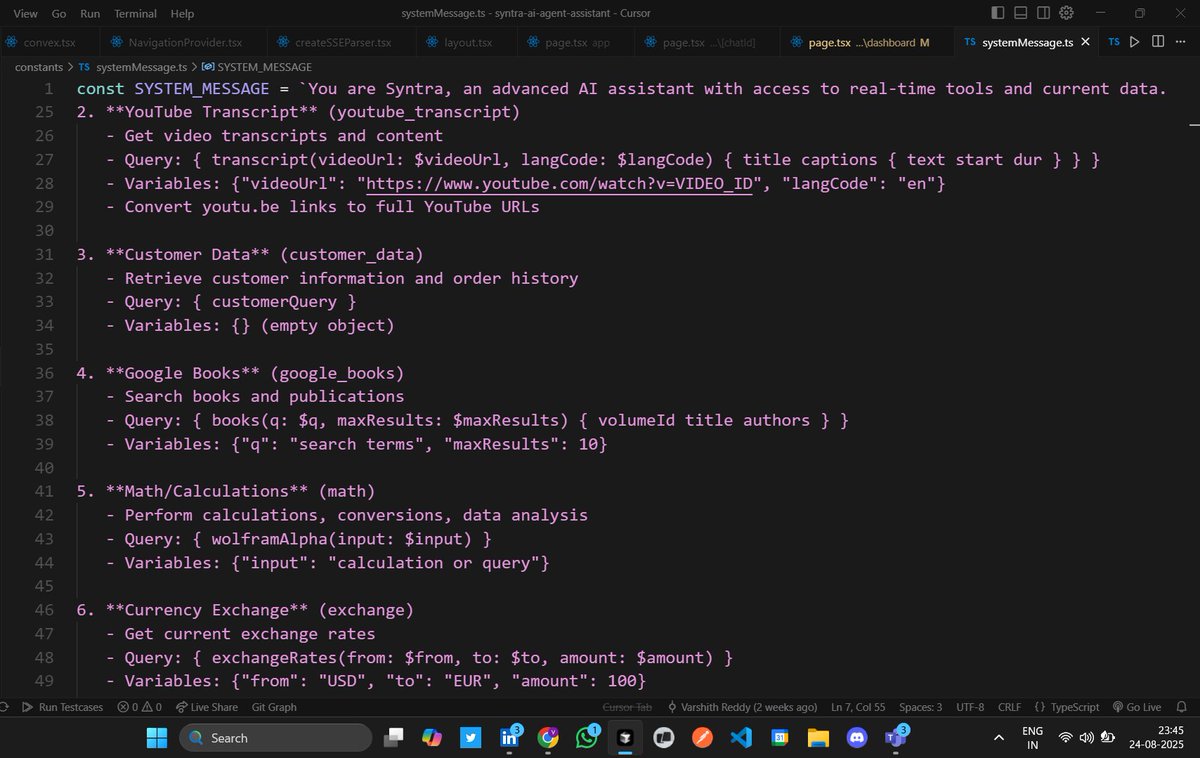

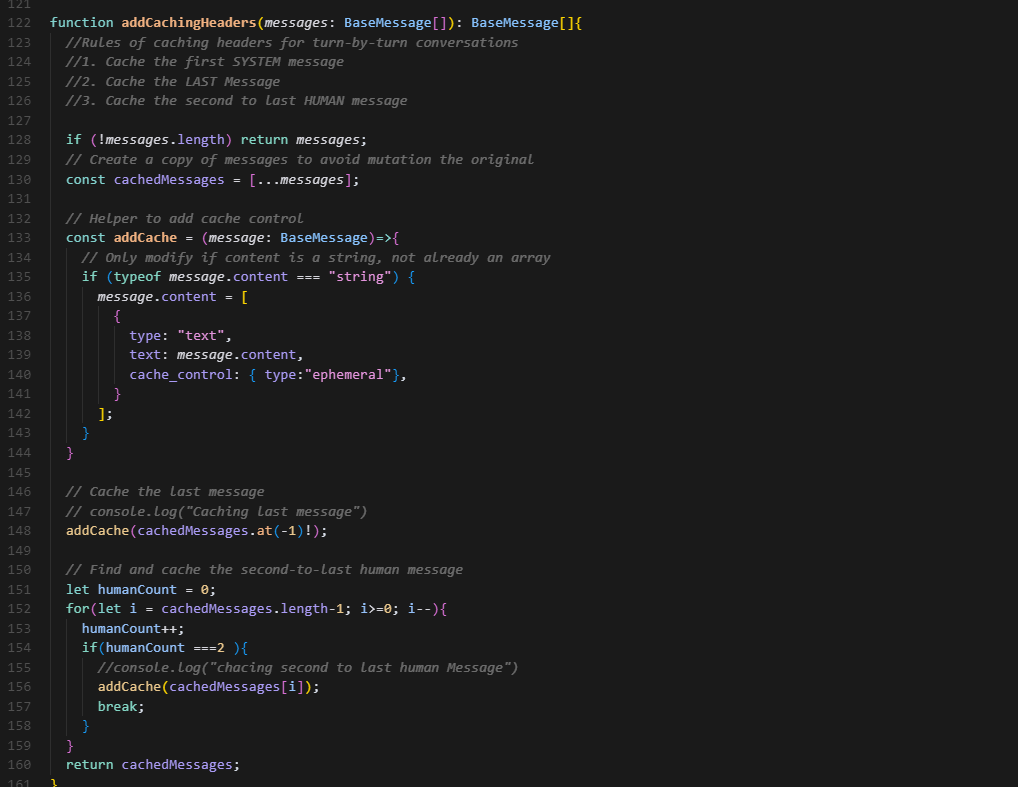

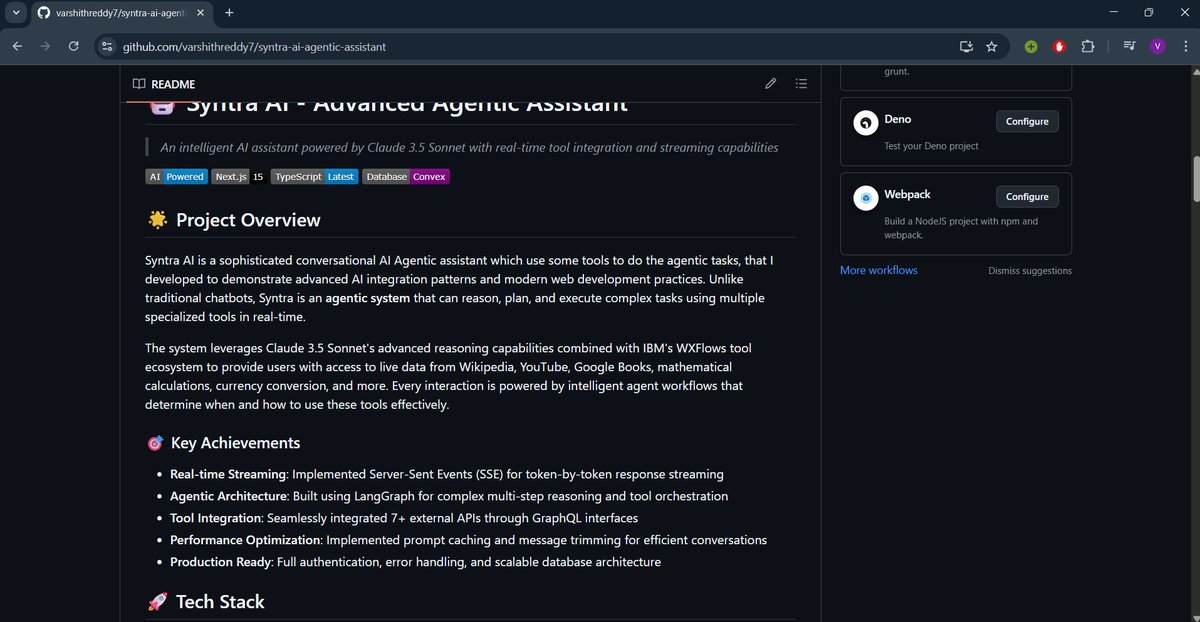

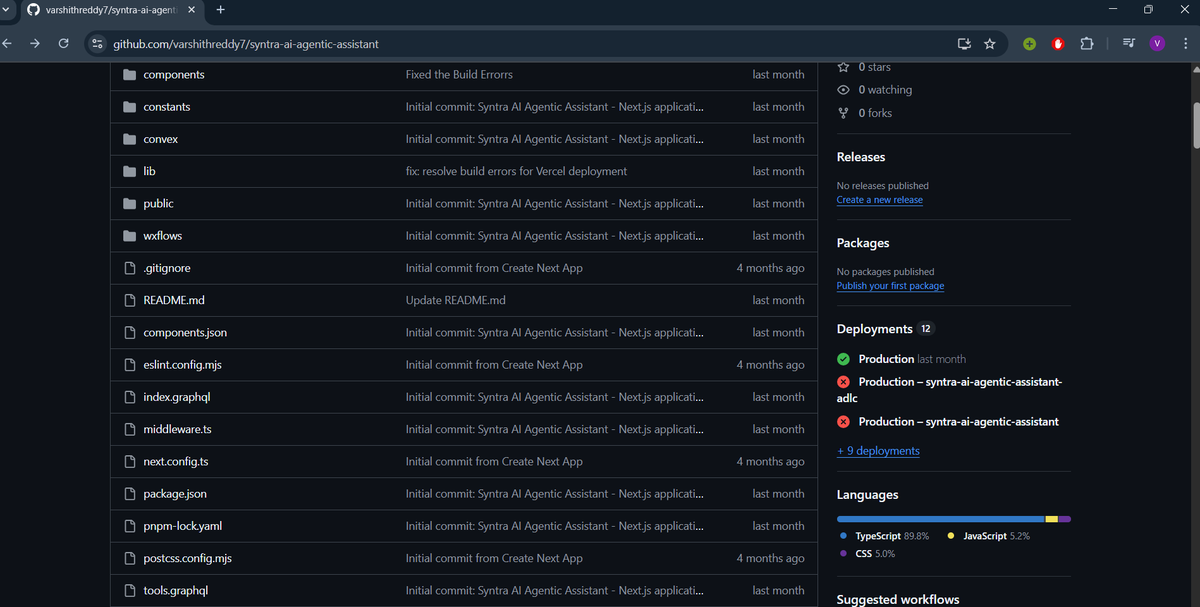

Day 27 of the 30-Day Build Journey: Testing Syntra AI in Action

Yesterday, I shared how Syntra was architected. Today, I put it to the test and recorded the entire process so you can sit back and watch how an AI agent really works behind the scenes.

🎥 In this 3+ minute demo, you’ll see:

- How Syntra uses multiple tools in real time (Wikipedia, YouTube, Google Books, Math, Currency conversion).
- What happens when a tool call fails, and how automatic retries keep the system resilient.
- Step-by-step reasoning, not just responses, showing the true agentic behavior.
- Smooth streaming responses for a natural conversation flow.

💡 Key Takeaways for Builders

- Agentic Systems ≠ Chatbots → They reason, plan, and execute multi-step tasks autonomously.
- Resilience matters → Error handling and retry logic are just as important as the happy path.
- Streaming UX = Magic → Token-by-token responses keep users engaged and trusting the system.
- Tool orchestration is the heart → With LangGraph + WXFlows, Syntra decides which tool to use and when.

🛠️ How You Can Build This Too

- Framework: Next.js + Convex + LangGraph
- Model: Claude 3.5 Sonnet
- Tools: Wikipedia, YouTube Transcript, Google Books, Wolfram Alpha, Exchange Rates, plus custom GraphQL APIs.
- Deployment: Vercel + Clerk for authentication

This isn’t just a prototype: Syntra is built production-ready with authentication, caching, error recovery, and scalable infra.

👉 Tomorrow, I’ll move to deployment, and soon after, I’ll polish and release the final showcase.

Would love your thoughts: ⭐ What real-world use case would you plug Syntra into?

#AI #ArtificialIntelligence #Nextjs #Claude #LangChain #AgenticAI #GenerativeAI #MachineLearning #OpenSource #Developers #Innovation #Vercel #Convex #FutureOfWork
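
For anyone curious what "automatic retries" around a failing tool call might look like, here is a minimal TypeScript sketch. It is not Syntra's actual code: `Tool`, `RetryOptions`, `callWithRetry`, and `makeFlakyTool` are hypothetical names made up for illustration, and the exponential backoff is an assumed strategy, since the post does not say how the retries are scheduled.

```typescript
// Hypothetical sketch: none of these names come from Syntra's codebase.
// A "tool" is modeled as any async function from a string input to a string result.
type Tool = (input: string) => Promise<string>;

interface RetryOptions {
  maxAttempts: number; // total tries, including the first one
  baseDelayMs: number; // wait before the second try; doubles after each failure
}

const sleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

// Call the tool, retrying with exponential backoff (an assumed strategy)
// until it succeeds or maxAttempts is exhausted.
async function callWithRetry(
  tool: Tool,
  input: string,
  { maxAttempts, baseDelayMs }: RetryOptions,
): Promise<string> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return await tool(input);
    } catch (err) {
      lastError = err;
      // Only wait if another attempt is coming.
      if (attempt < maxAttempts) {
        await sleep(baseDelayMs * 2 ** (attempt - 1));
      }
    }
  }
  throw new Error(
    `Tool failed after ${maxAttempts} attempts: ${String(lastError)}`,
  );
}

// Stand-in tool that fails a fixed number of times, then succeeds.
// Useful for trying out the retry path without a real API.
function makeFlakyTool(failures: number): Tool {
  let calls = 0;
  return async (input) => {
    calls += 1;
    if (calls <= failures) throw new Error("temporary failure");
    return `result for ${input}`;
  };
}
```

With `makeFlakyTool(2)` and `maxAttempts: 3`, the first two calls throw and the third returns normally. If the tool keeps failing, the final error is surfaced to the caller, which is where an agent could fall back to another tool or tell the user what went wrong.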