Julius Vering

@VeringJulius

continued pretraining lead @cursor_ai | previously @openai, @weHRTyou

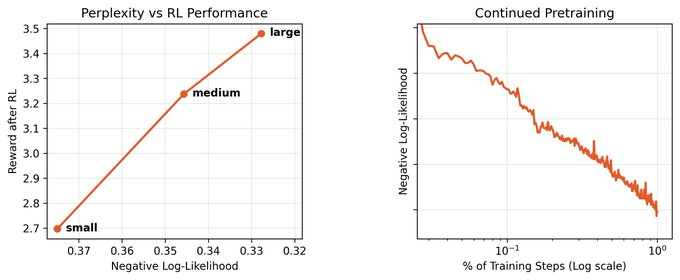

We're releasing a technical report describing how Composer 2 was trained.

Composer 2 is now available in Cursor.

1/N I’m excited to share that our latest @OpenAI experimental reasoning LLM has achieved a longstanding grand challenge in AI: gold medal-level performance on the world’s most prestigious math competition—the International Math Olympiad (IMO).

I’m so excited to announce Gemma 3n is here! 🎉 🔊Multimodal (text/audio/image/video) understanding 🤯Runs with as little as 2GB of RAM 🏆First model under 10B with @lmarena_ai score of 1300+ Available now on @huggingface, @kaggle, llama.cpp, ai.dev, and more

I really like the term “context engineering” over prompt engineering. It describes the core skill better: the art of providing all the context for the task to be plausibly solvable by the LLM.