Steven Walton

@WaltonStevenj

Ph.D. from University of Oregon | Visiting Scholar @ Georgia Tech | Studying Computer Vision | SHI Lab | 🦋 https://t.co/jW5YkexBMX

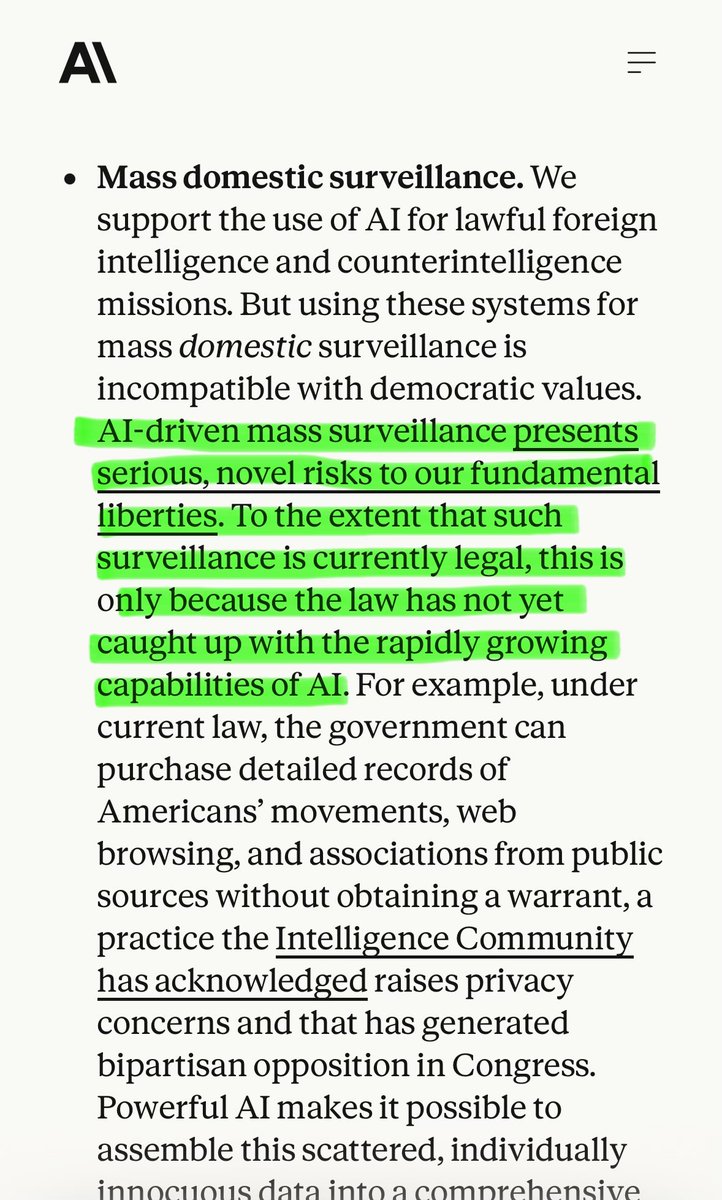

Yesterday we reached an agreement with the Department of War for deploying advanced AI systems in classified environments, which we requested they make available to all AI companies. We think our deployment has more guardrails than any previous agreement for classified AI deployments, including Anthropic's. Here's why: openai.com/index/our-agre…

“The thing that happened with AGI and pretraining is that in some sense they overshot the target. You will realize that a human being is not an AGI. Because a human being lacks a huge amount of knowledge. Instead, we rely on continual learning. If I produce a super intelligent 15-year-old, they don't know very much at all. A great student, very eager. [You can say,] ‘You go and be a programmer. You go and be a doctor. Go and learn.’ So you could imagine that the deployment itself will involve some kind of a learning trial and error period. It's a process as opposed to, you drop the finished thing.” @ilyasut

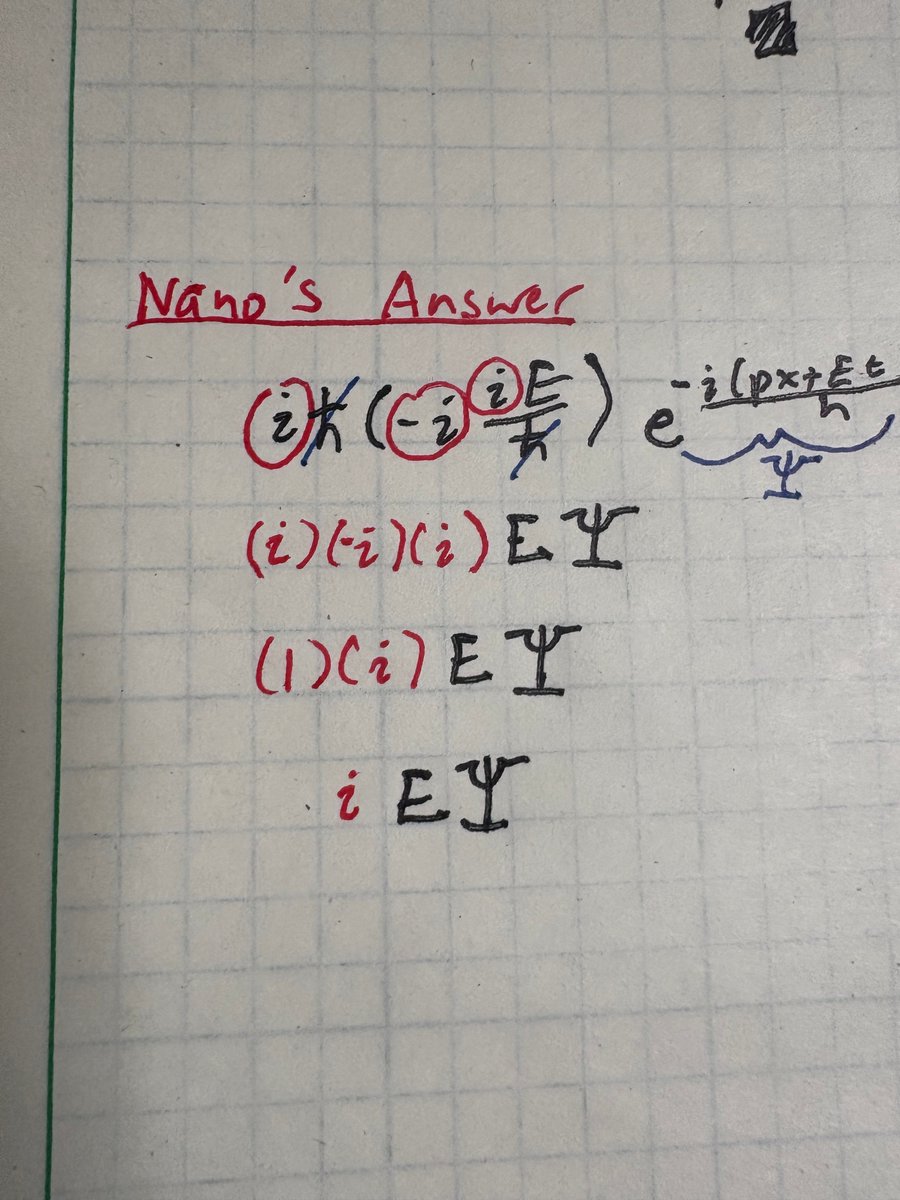

Gemini Nano Banana Pro can solve exam questions *in* the exam page image. With doodles, diagrams, all that. ChatGPT thinks these solutions are all correct except Se_2P_2 should be "diselenium diphosphide" and a spelling mistake (should be "thiocyanic acid" not "thoicyanic") :O