Milk Road AI@MilkRoadAI

The Nebius CEO just confirmed what the entire AI industry already knows.

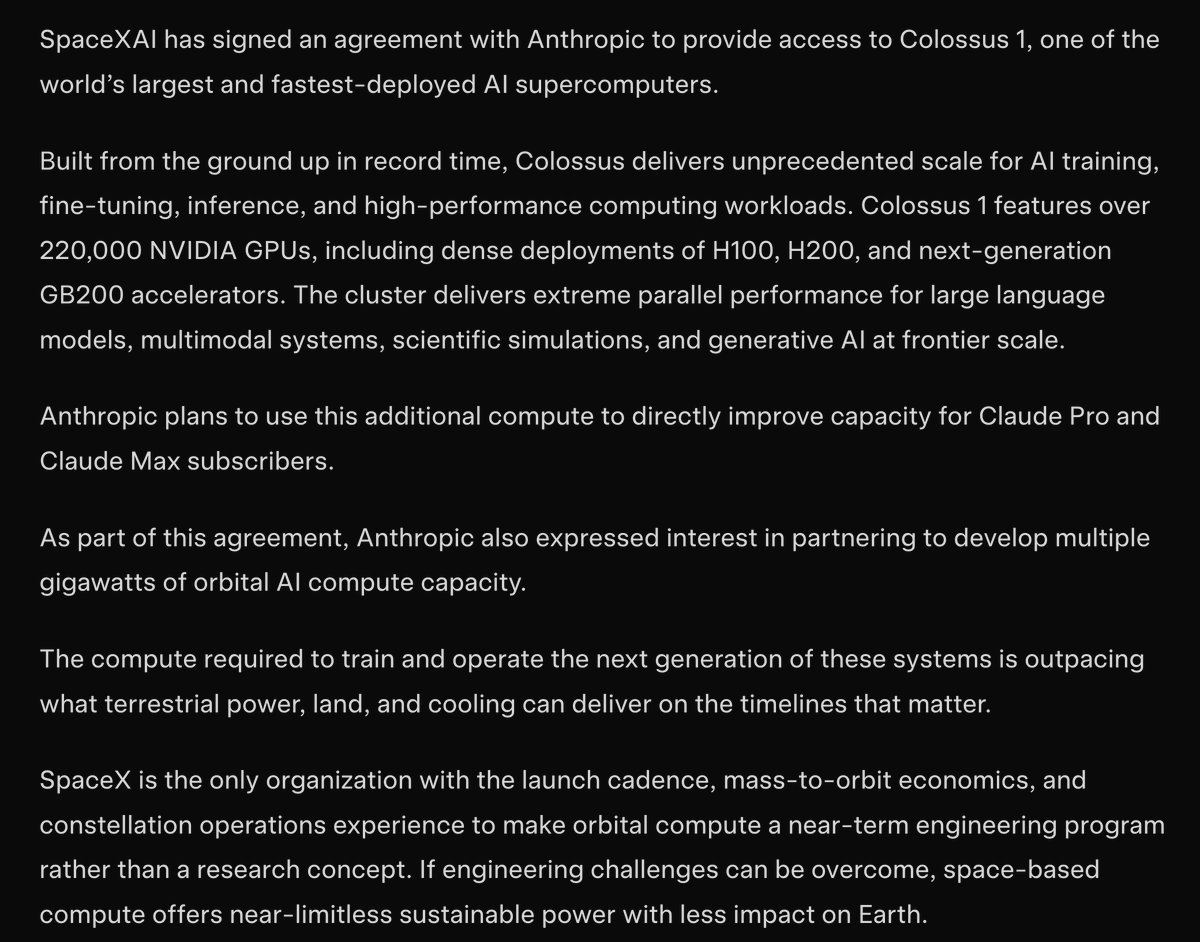

There is not enough compute, and the companies that don't own their own capacity simply cannot grow as fast as they otherwise could.

Data center GPU lead times are running 36 to 52 weeks, and inference workloads alone will account for two-thirds of all AI compute demand in 2026, up from one-third in 2023.

The AI data center GPU market is projected to grow from $111 billion today to $323 billion by 2030, a 23.8% CAGR.
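A quick sanity check on that growth figure, as a minimal sketch. It assumes "today" means 2025, so the horizon to 2030 is five years; that horizon is an assumption, not something stated above. The function names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values over `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding `start_value` at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Assumption: a five-year horizon (2025 to 2030). Values are in $ billions.
implied = cagr(111, 323, 5)
projected = project(111, 0.238, 5)

print(f"Implied CAGR over 5 years: {implied:.1%}")
print(f"$111B compounded at 23.8% for 5 years: ${projected:.0f}B")
```

Under that five-year assumption the two figures line up: growing $111 billion at 23.8% a year lands at roughly $323 billion.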

And even at current scale, the most optimized AI data centers are running at 60–85% GPU utilization, meaning demand is already pressing hard against supply.

Nebius isn't waiting for someone else to build the capacity. Arkady Volozh is doing it himself.

The company exited 2025 with $1.25 billion in ARR, up 503% year over year, and is guiding for $7–9 billion ARR by end of 2026.

Nine new data centers announced and over 2 gigawatts of contracted power secured, with plans to exceed 3 gigawatts.

A $27 billion, 5-year infrastructure deal with Meta to serve GPU-dense workloads.

Sales pipeline on track to exceed $4 billion in early 2026, with new contract durations extending by 50%.

And then the Eigen AI acquisition changed the thesis entirely.

For $643 million, Nebius bought one of the most advanced inference optimization stacks in existence: system-level, model-level, and kernel-level techniques that deliver higher throughput and lower latency without adding complexity for customers.

Volozh said it directly: they're not just selling bare metal or renting GPUs by the hour, they're building the full inference stack.

That's where the next layer of margin lives.

Milk Road Pro remains massively bullish on Nebius and the neocloud trade.

Milk Road Pro analysts have held large positions and are up significantly. This thesis keeps compounding with every data point Arkady puts out.

Earnings drop May 13. Come join us at Milk Road Pro before then, link below