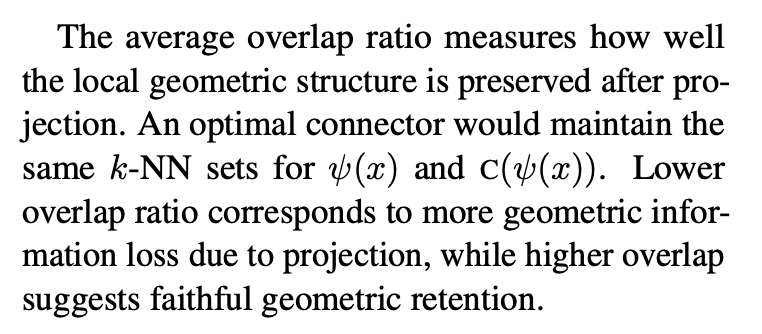

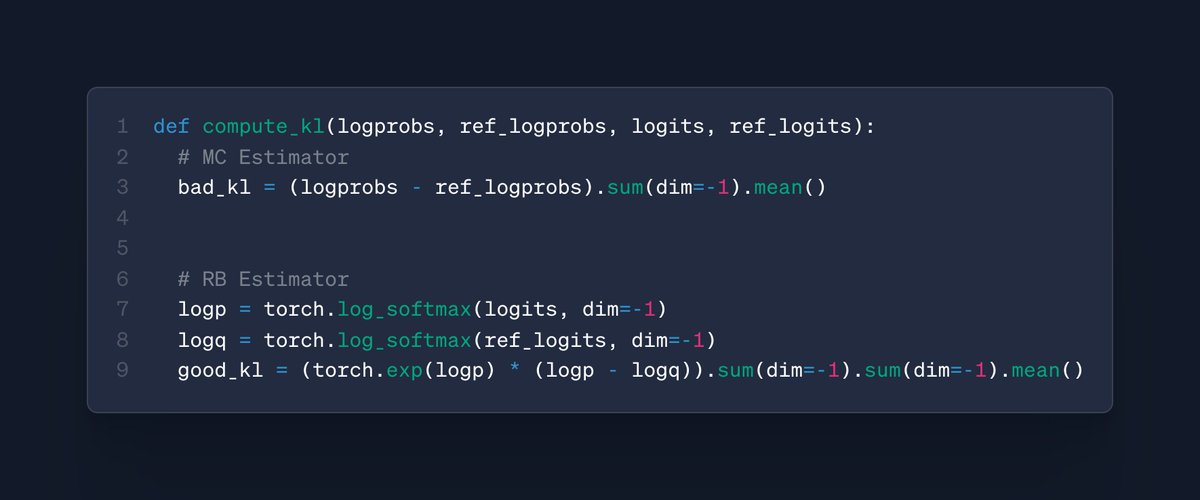

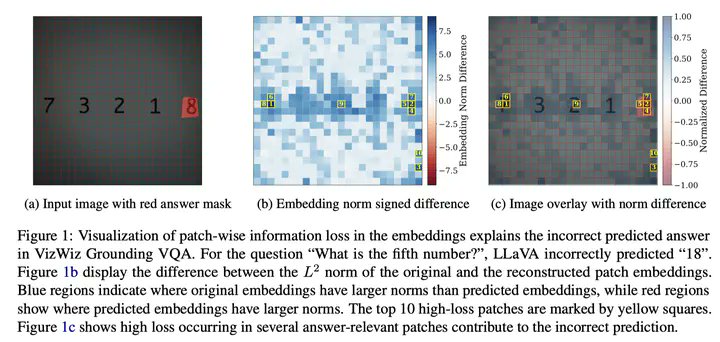

Happy to share (with a bit of delay tho) that our paper on quantifying visual information loss in VLMs, "Lost in Embeddings: Information Loss in Vision-Language Models", has been accepted to EMNLP 2025 Findings: arxiv.org/pdf/2509.11986

💃 Code is also released: github.com/lyan62/vlm-inf…
