Chen Lin

8 posts

@WillLin1028

Research Scientist, Isomorphic Labs

London, England. Joined December 2016

67 Following, 69 Followers

🎉Personal update: I'm thrilled to announce that I'm joining Imperial College London @imperialcollege as an Assistant Professor of Computing @ICComputing starting January 2026.

My future lab and I will continue to work on building better Generative Models 🤖, the hardest AI4Science applications in computational biology 🧬 and chemistry 🧪, and also a sprinkling of Deep Learning theory 📚 that supports these goals.

Chen Lin retweeted

New work with @lars__schaaf, @WillLin1028, @wanggrun, and @philiptorr:

We optimize neural networks to smoothly represent minimum energy paths and predict transition states for chemical reactions.

Compared to the traditional approach, our method shows (i) improved resilience to the initial guess, (ii) easy adaptability to escape local minima, (iii) the ability to capture a complex path on its own, and (iv) potential to generalize to unseen systems.

This offers a flexible alternative to discrete methods that could unlock building a universal reaction path predictor.

Our paper should appeal to anyone interested in machine learning to advance computational chemistry.

Preprint: arxiv.org/abs/2502.15843.

Chen Lin retweeted

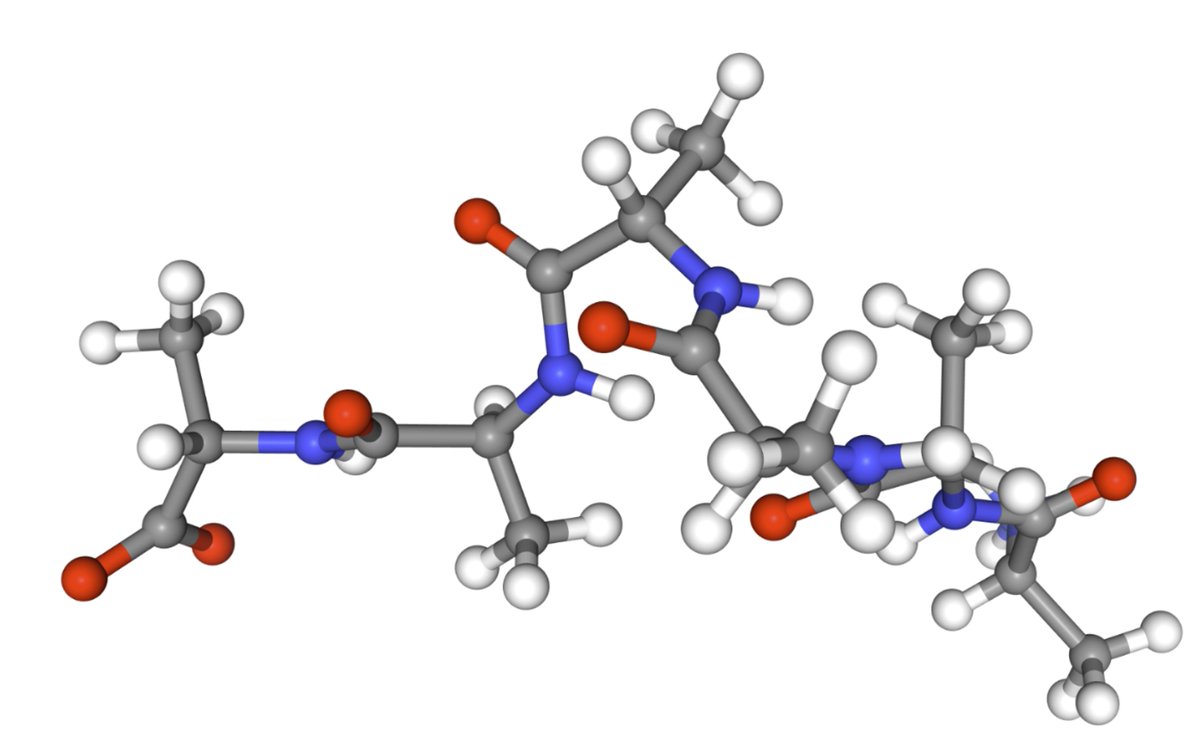

New preprint! 🚨 We scale equilibrium sampling to hexapeptides (in Cartesian coordinates!) with Sequential Boltzmann generators! 📈 🤯

Work with @bose_joey, @WillLin1028, @leonklein26, @mmbronstein and @AlexanderTong7

Thread 🧵 1/11

Really looking forward to giving this talk!

I will provide an overview of my research on oversquashing and graph rewiring, and I will discuss future directions I am excited to work on within and beyond GNNs 🦕

I-X @ImperialX_AI

Our next I-X Seminar is with @Francesco_dgv @UniofOxford discussing "Understanding message passing: limitations of the paradigm and new possibilities" 🕐13.00 📅 8 Feb 📍 In person | @WCIDLondon Register below! ⬇️ ix.imperial.ac.uk/event/i-x-semi… @ImperialSci @ImperialAI @imperialeee

Chen Lin retweeted

Are RGB inputs good enough for open-world generalization of object detection?

Excited to share our #ICLR2023 paper GOOD -- we show that geometric cues can significantly boost performance on this task!

arxiv.org/abs/2212.11720

(Joint work with @AutoVisionGroup @isDanZhang )

Chen Lin retweeted

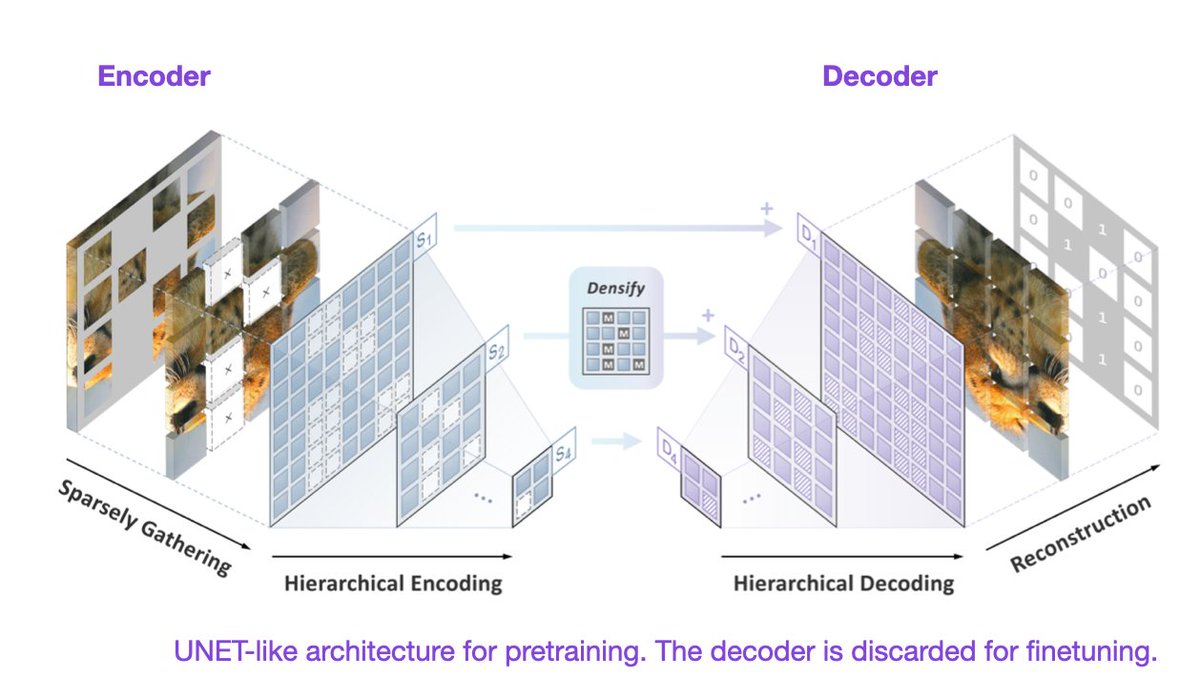

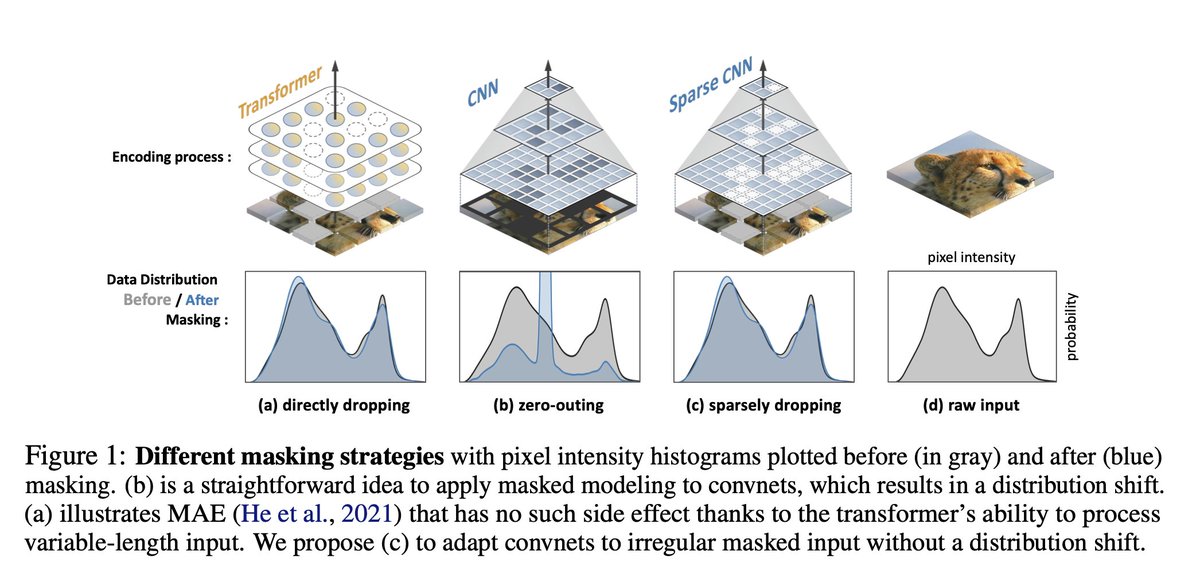

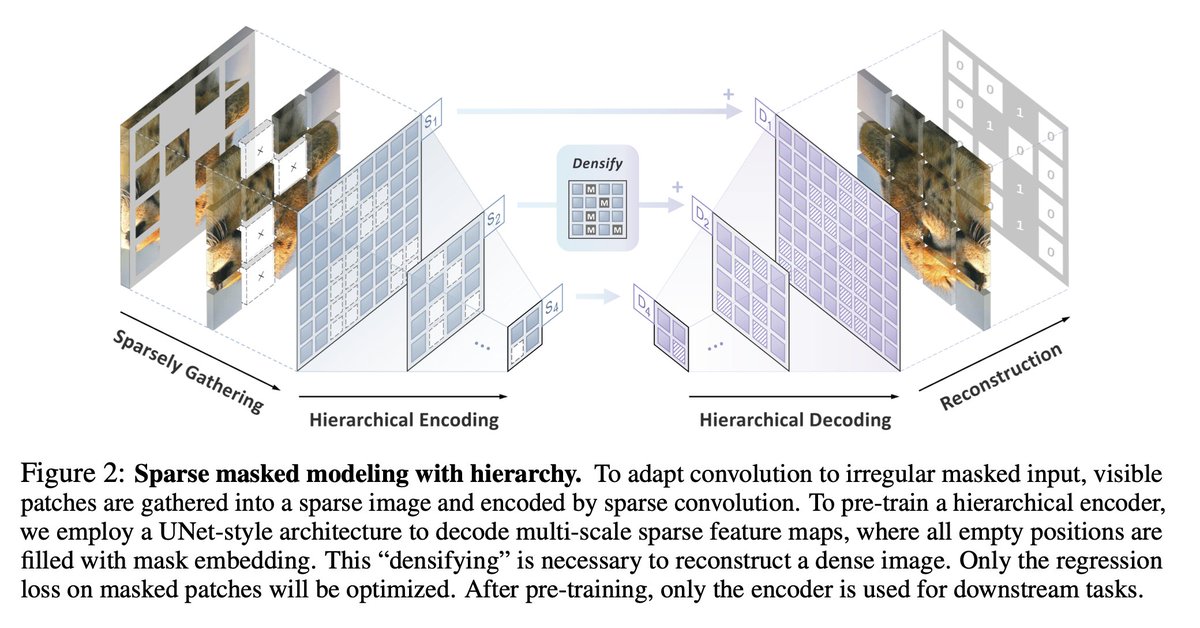

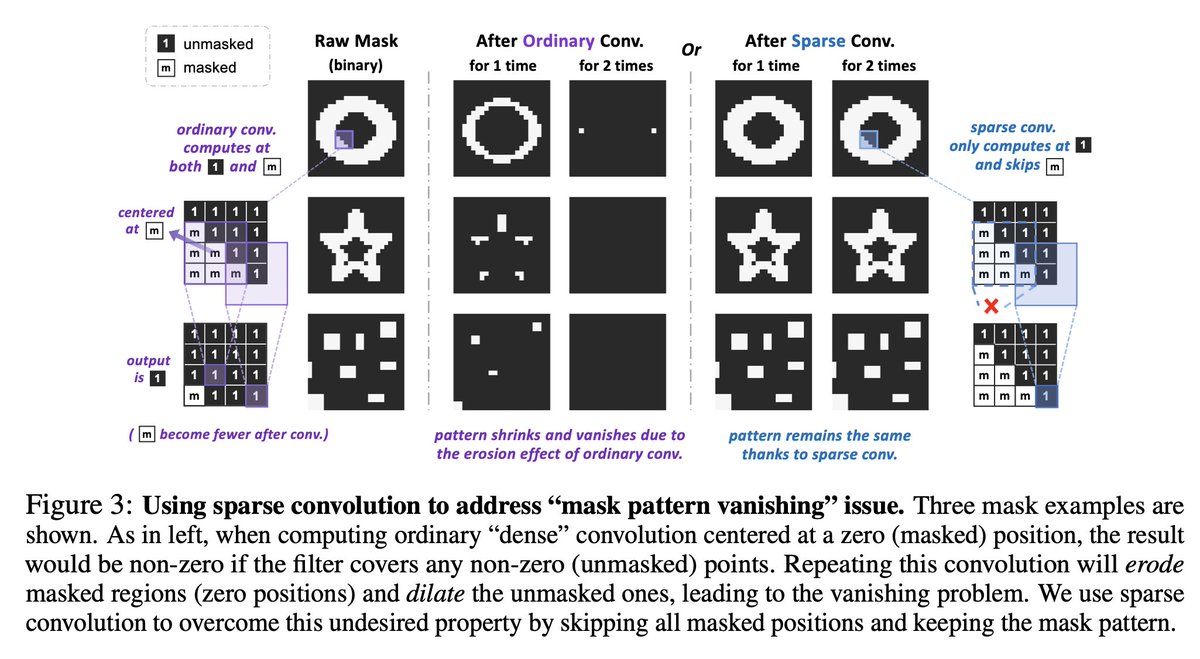

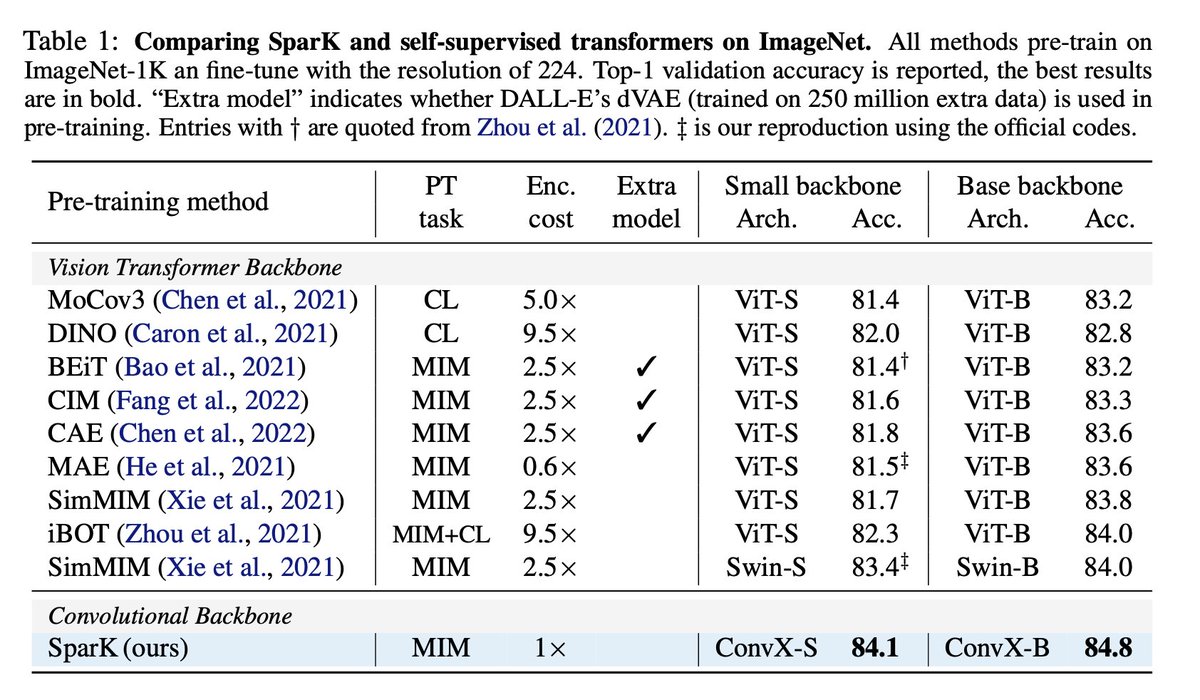

Designing BERT for convolutional networks: sparse and hierarchical masked modeling

Keyu Tian, Yi Jiang, Qishuai Diao, Chen Lin, Liwei Wang, Zehuan Yuan

tl;dr: make the image look the same to a CNN as it does to a transformer -> MIM starts working

arxiv.org/abs/2301.03580…

Chen Lin retweeted

The paper designs a working MLM-style pretraining for convnets! Huge if this really works.

Dmytro Mishkin 🇺🇦 @ducha_aiki

Designing BERT for convolutional networks: sparse and hierarchical masked modeling. Keyu Tian, Yi Jiang, Qishuai Diao, Chen Lin, Liwei Wang, Zehuan Yuan. tl;dr: make the image look the same to a CNN as it does to a transformer -> MIM starts working. arxiv.org/abs/2301.03580…