Willi Menapace

@WilliMenapace

PhD Student - University of Trento, Italy

🎉EgoEdit @Snapchat has been accepted to CVPR 2026! 🏆👻 We are bringing high-quality, real-time editing to egocentric videos. Our massive 100k video dataset and benchmark are ALREADY PUBLIC! 🔓🚀 🏠 Project Page: snap-research.github.io/EgoEdit/ 🤗 Dataset: huggingface.co/datasets/ligua…

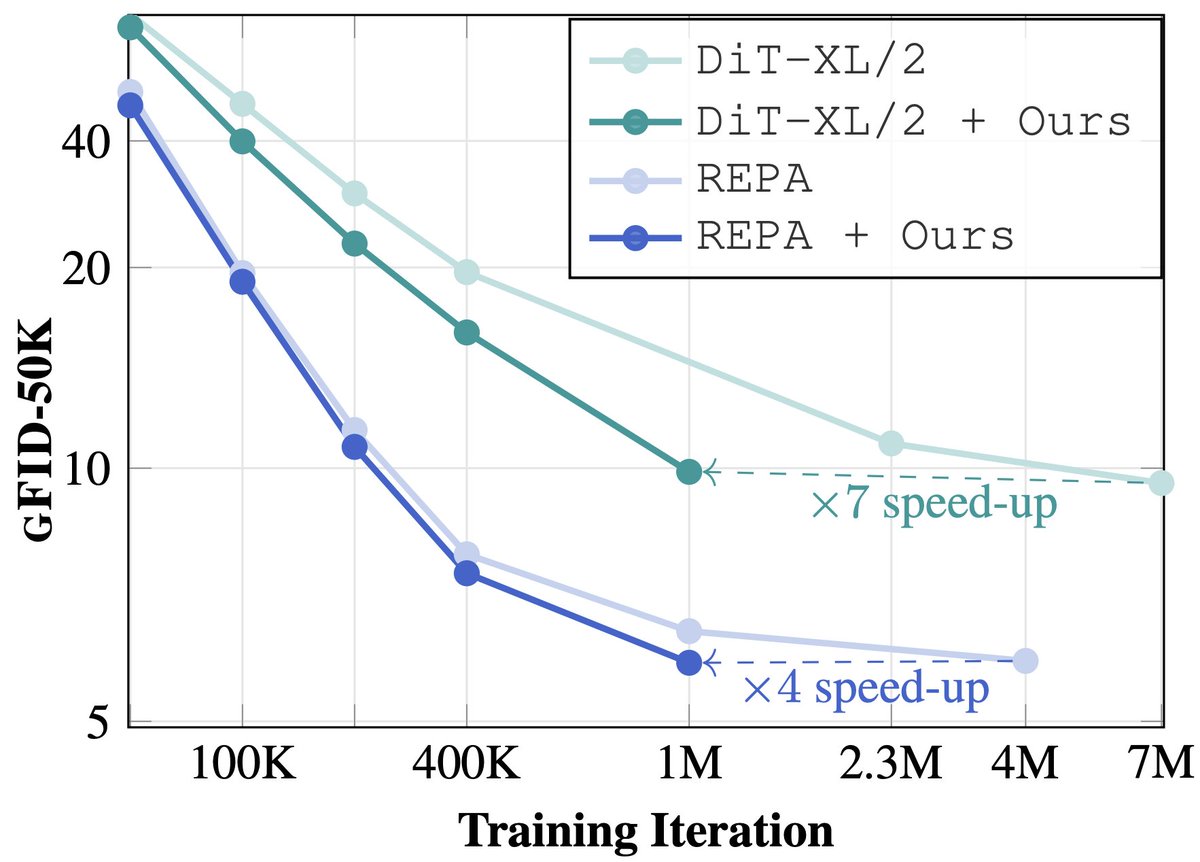

Not all pixels are equally hard, but DiTs still allocate compute uniformly across pixels, wasting effort on easy regions. ELIT adds two lightweight cross-attention layers to focus compute where it matters, cutting FID by 53%. ELIT: snap-research.github.io/elit

Where are the good old progressive diffusion models? 🤔 Breaking generation into multiple resolution scales is a great idea, but complexity (multiple models, custom diffusion process, etc.) stalled scaling. Our Decomposable Flow Matching packs multi-scale perks into one scalable model.

Introducing ⚗️ Video Alchemist, our new video model supporting 👪 Multi-subject open-set personalization 🏞️ Foreground & background personalization 🚀 Without the need for inference-time tuning snap-research.github.io/open-set-video… [Results] 1. Sora girl rides a dinosaur on a savanna 🧵👇

Can pretrained diffusion models connect for cross-modal generation? 📢 Introducing AV-Link ♾ Bridging unimodal diffusion models in one framework to enable: 📽️ ➡️ 🔊 Video-to-Audio 🔊 ➡️ 📽️ Audio-to-Video 🌐: snap-research.github.io/AVLink/ 📄: hf.co/papers/2412.15… ⤵️ Results

📢 MinT: Temporally-Controlled Multi-Event Video Generation 📢 mint-video.github.io TL;DR: We identify a fundamental failure mode of existing video generators: they cannot produce videos with sequential events. MinT unlocks this capability with temporal grounding of events. 🧵