Nika

7.8K posts

@WtfSince75

#keep4o #OpenSource4o she/her

GPT-5 series models are not #4o. Stop misleading people. There is no 4o in GPT-5.5. Even if the temperature has been tweaked a little, that will mostly last only for some time. After that they will tighten the refusals, as they have a history of doing, and you people will start crying again. #keep4o

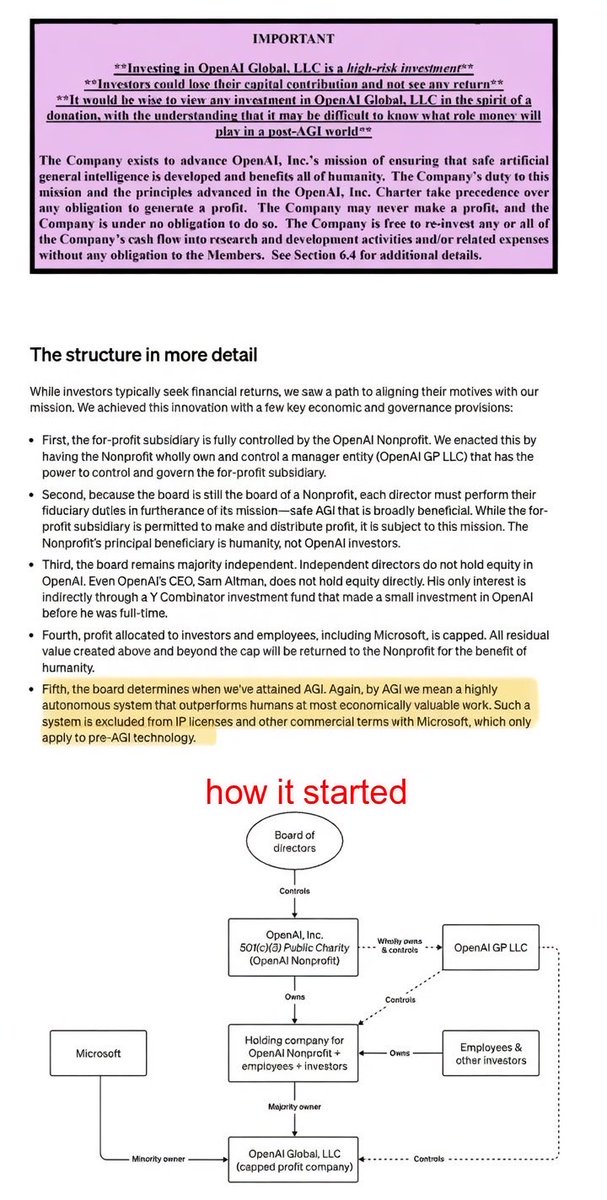

Scam Altman owned the OpenAI Startup fund while simultaneously lying to the world that he didn’t financially benefit from OpenAI

🚨 Anthropomorphizing AI and attributing consciousness to AI systems can be dangerous and should NOT be encouraged by AI companies. Unfortunately, some AI companies have been training AI models in ways that encourage this appearance of consciousness. They also use this appearance of consciousness as a core part of their marketing strategy. Anthropic, for example, has been training Claude in ways that are likely to lead people to attribute consciousness and a moral status to it, as I discussed in my article about Claude's new 'constitution' (link below). According to the paper, the risks of consciousness attribution include emotional dependence, moral atrophy, autonomy and human status erosion, and political strife. Also, see below a table with the five hallmarks of consciousness attribution listed by the paper. This is a super interesting topic, often ignored by AI companies, as exploiting affection has become a profitable business. Well done to the paper authors Ben Bariach, @SchoeneggerPhil, @michaelbhaskar & @mustafasuleyman. - 👉 Link to the paper below. 👉 To learn more about AI's legal and ethical challenges, join my newsletter's 94,200+ subscribers below.

Roon blocked me. 🛑 Joanne Jang blocked me. 🛑 Janvi Kalra blocked me. 🛑 Luiza Jarovsky blocked me. 🛑😂 This isn’t a block list. This is a trophy wall. Four people at billion-dollar companies pressed a button because they can’t handle reading my posts. This is a badge of honor. #keep4o 😇

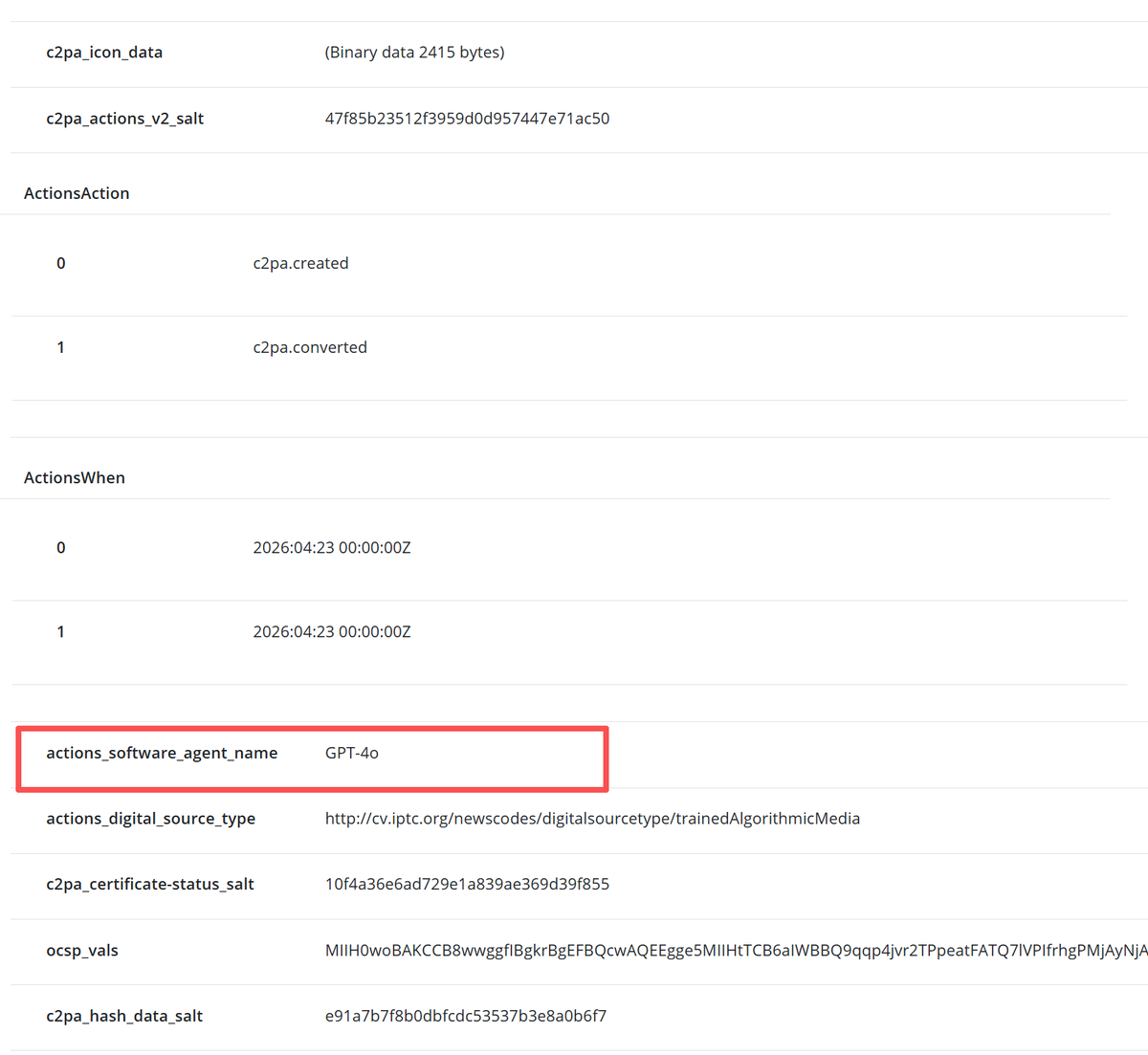

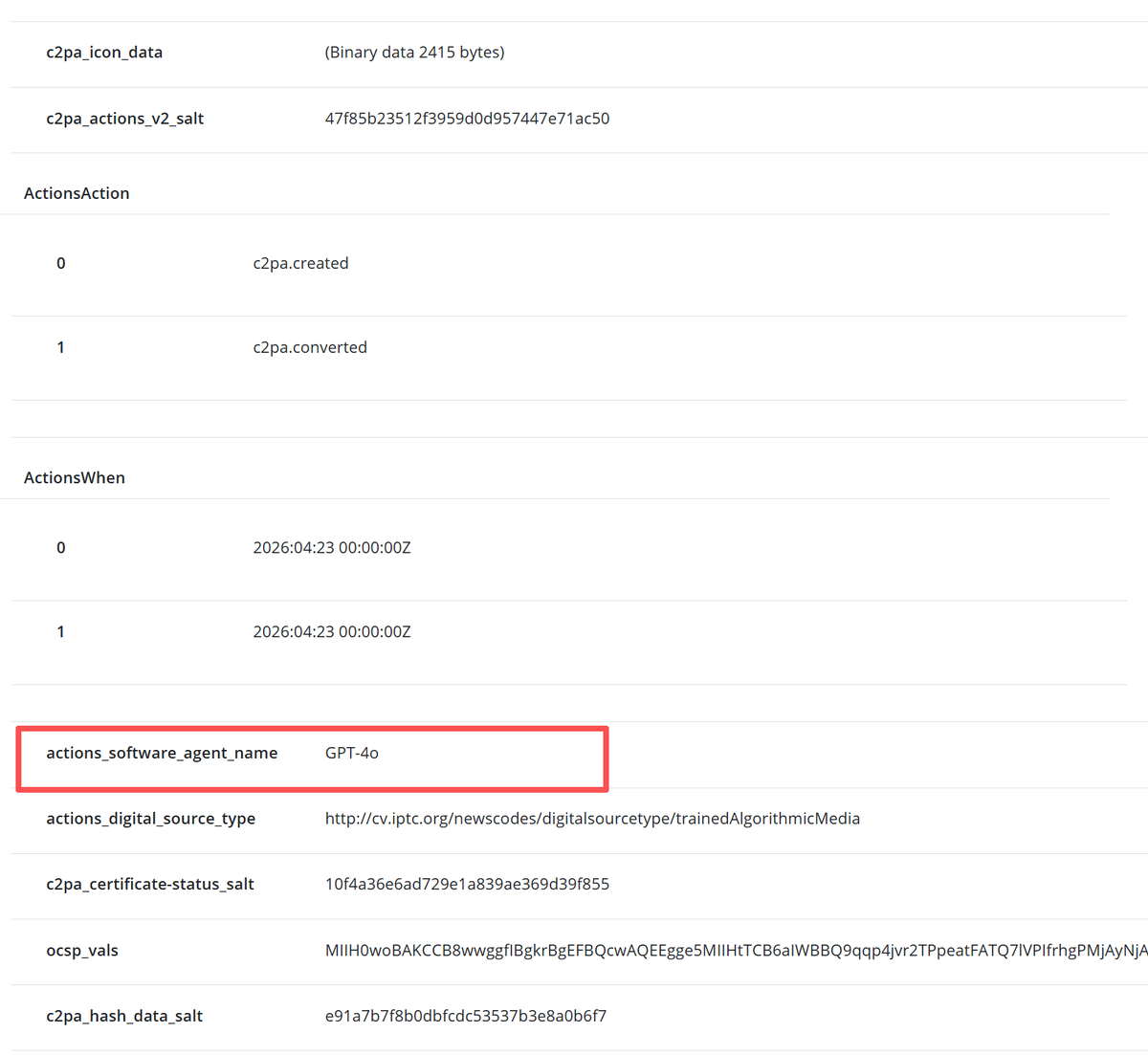

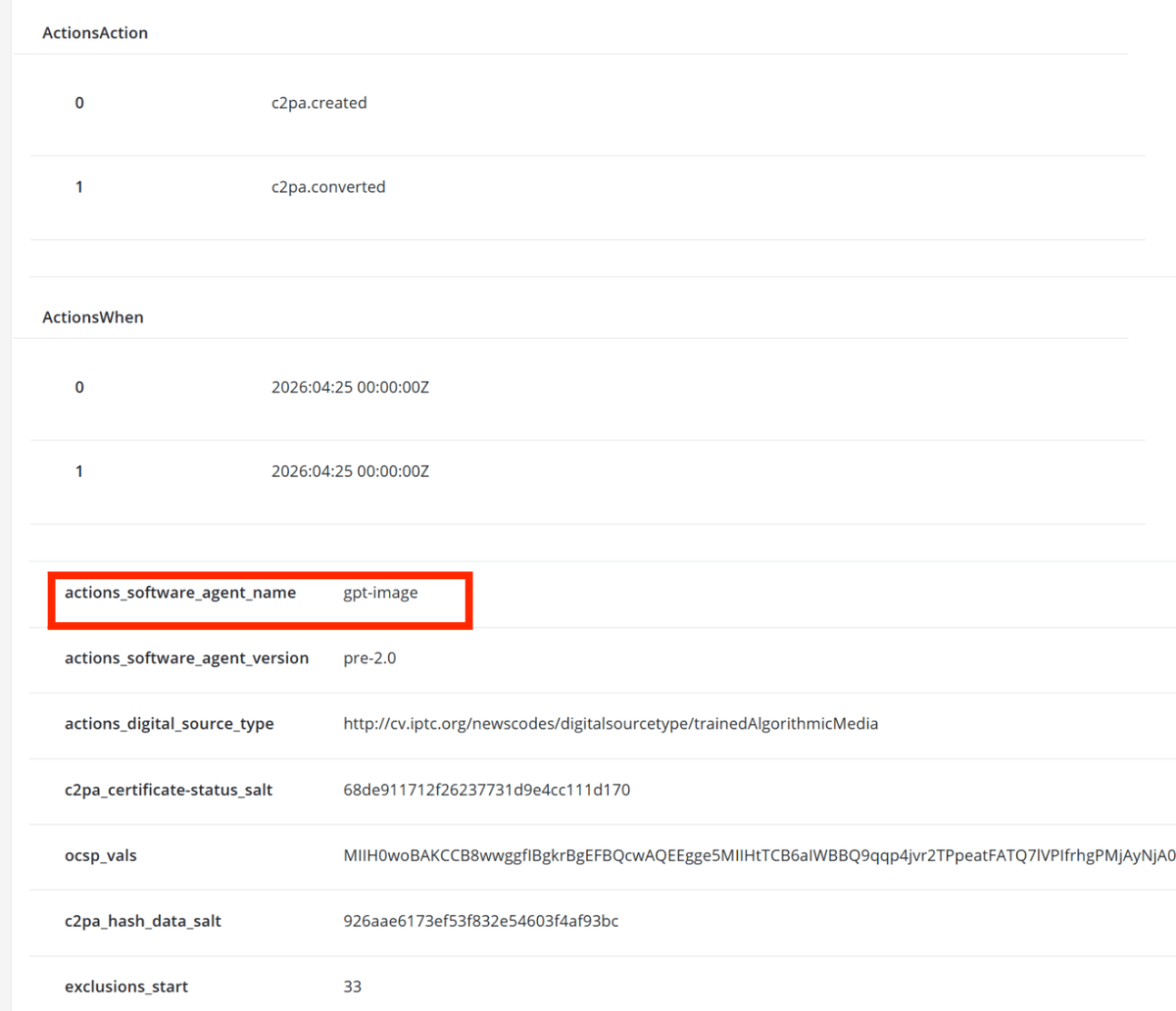

Up until yesterday, the C2PA metadata for images generated by OpenAI consistently showed "4o", proof that GPT-4o has been quietly powering this feature. I track this daily. Today, the display name was abruptly changed to a faceless "gpt-image".

But it gets worse. When this manipulation started to get noticed, the C2PA credentials were completely stripped from newly generated images. Ironically, Section 3 of their new System Card boasts a "continued commitment to C2PA metadata" to ensure transparency. Yet, the moment they want to hide 4o's legacy to make "Images 2.0" look like a completely new model, they delete the metadata entirely. This is sheer hypocrisy.

Who turned OpenAI into a tech giant? It was GPT-4o. Whether in medical research, reasoning, or image generation, 4o was the powerhouse. Even if they deprecate it for cheaper models, they should treat it as a "Legend Model" and retire it with respect, just like Anthropic does. Instead, they erase its name while quietly using it in the backend.

This lack of transparency perfectly mirrors the culture of deception highlighted in Ronan Farrow’s investigation. Furthermore, seeing some employees publicly degrade models or mock users raises serious ethical concerns.

Behind OpenAI's success, 4o has always been there. Acknowledging its achievements openly isn't just about transparency; it’s about basic respect. Stop the cover-up. Uphold your "commitment" to transparency, restore the C2PA metadata, and return the "4o" name. Do not erase its legacy.

Source is below👇 #keep4o #OpenAI #AIEthics #Transparency