Yichuan Deng

5 posts

@YCEthanDeng

Ph.D. Student in CS @uwcse, previous undergrad at School of the Gifted Young @ustc

Seattle · Joined June 2021

24 Following · 11 Followers

Yichuan Deng reposted

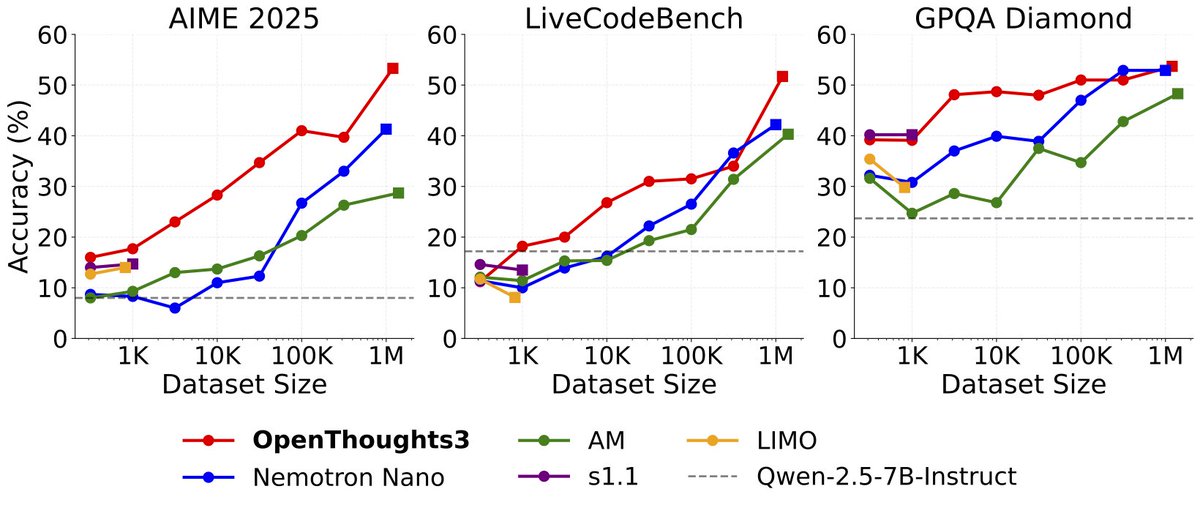

Announcing OpenThinker3-7B, the new SOTA open-data 7B reasoning model: improving over DeepSeek-R1-Distill-Qwen-7B by 33% on average over code, science, and math evals.

We also release our dataset, OpenThoughts3-1.2M, which is the best open reasoning dataset across all data scales. Full details are in our ✨new paper✨ - below we share the highlights:

BTW, it also works on non-Qwen models😉 (1/N)

Yichuan Deng reposted

Announcing OpenThinker-32B: the best open-data reasoning model distilled from DeepSeek-R1.

Our results show that large, carefully curated datasets with verified R1 annotations produce SoTA reasoning models. Our 32B model outperforms all 32B models including DeepSeek-R1-Distill-Qwen-32B (a closed data model) in MATH500 and GPQA Diamond, and shows similar performance in other benchmarks. (1/n)

Yichuan Deng reposted

Want to evaluate your models on reasoning benchmarks? We have integrated many math and coding benchmarks into Evalchemy: AIME24, AMC23, MATH500, LiveCodeBench, GPQA, HumanEvalPlus, MBPPPlus, BigCodeBench, MultiPL-E, and CRUXEval.

Further, Evalchemy now supports vLLM and OpenAI, accelerating evals for faster results.

We have also added Curator into Evalchemy, so you can now evaluate any API-based model quickly and reliably. Just add --model curator.

Introducing Evalchemy: your one-step solution for Language Model evaluation!

Alex Dimakis@AlexGDimakis

github.com/mlfoundations/… I’m excited to introduce Evalchemy 🧪, a unified platform for evaluating LLMs. If you want to evaluate an LLM, you may want to run popular benchmarks on your model, like MTBench, WildBench, RepoBench, IFEval, AlpacaEval, etc., as well as standard pre-training metrics like MMLU. This requires you to download and install more than 10 repos, each with different dependencies and issues. This is, as you might expect, an actual nightmare. (1/n)
