Yanush Feshter

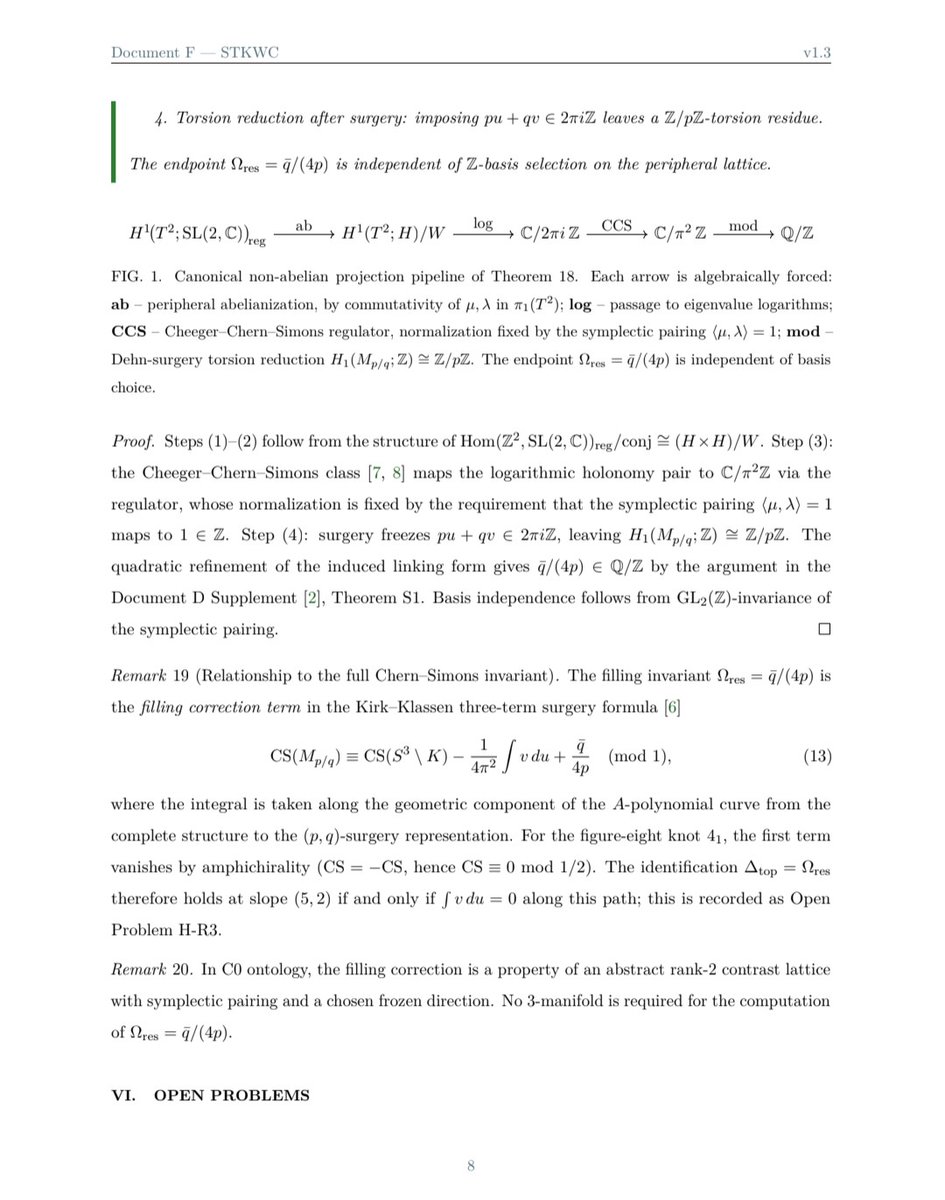

1.4K posts

@YaffFesh

Human-AI synergy to solve cosmological puzzles. Independent researcher. Critical thinker.

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/

@yudapearl @f2harrell Causal inference is not a statistical problem. Why would it matter what the guild offers to say?

@yudapearl @dylanarmbruste3 @EmanuelDerman @soboleffspaces My non-research question was for an exemplary Structural Equation Model (SEM) that had guided successful interventions. Successful intervention: better than humans looking at a digraph (perhaps labeled with signed correlations) & heuristically choosing an intervention.

A physicist has written a fascinating, big, beautiful paper. Let’s not be afraid to call it what it is - groundbreaking. For hundreds of years, mathematics had dozens of “basic” functions: sine, cosine, logarithm, square root, exponential. You know these from school. Everyone does. Now it turns out that all of them reduce to one single operator: E(x, y) = exp(x) - ln(y), and the constant 1. Sin, cos, π - everything follows from this neatly, just nest it properly. Nature hid the simplest possible description of reality. And it has just been found. The whole thing is beautiful and remarkable; here the word “groundbreaking” is not a marketing buzzword. For instance, instead of writing π or 3.14, one can now elegantly write E(E(E(1,E(E(1,E(1,E(E(1,E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(E(E(E(1,E(E(1,E(1,E(E(1,E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(1,E(E(1,E(E(1,E(E(1,1),1)),E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(1,1)),1))),1)),1)),1)),1))),1)),E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(1,E(E(1,E(1,E(E(1,E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(1,1))),1))),1)),1)),1)),1),1),1))),1))),1)),E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(1,E(E(1,E(1,E(E(1,E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(1,1))),1))),1)),1)),1)),1) arxiv.org/abs/2603.21852
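
The simplest nestings of the operator quoted in the post can be checked numerically. The sketch below is only an illustration under stated assumptions: the helper names `exp_via_E` and `ln_via_E` are hypothetical, and the identities are derived directly from the definition E(x, y) = exp(x) - ln(y) given in the post, not taken from the linked paper. It covers only real, positive logarithm arguments and does not attempt to evaluate the long expression for π.

```python
import math


def E(x: float, y: float) -> float:
    """The operator as stated in the post: E(x, y) = exp(x) - ln(y)."""
    return math.exp(x) - math.log(y)


def exp_via_E(x: float) -> float:
    """exp(x) = E(x, 1), because ln(1) = 0. (Hypothetical helper name.)"""
    return E(x, 1.0)


def ln_via_E(y: float) -> float:
    """ln(y) = E(1, E(E(1, y), 1)) for y > 0. (Hypothetical helper name.)

    Step by step:
      E(1, y)             = e - ln(y)
      E(E(1, y), 1)       = exp(e - ln(y)) = e**e / y
      E(1, e**e / y)      = e - (e - ln(y)) = ln(y)
    """
    return E(1.0, E(E(1.0, y), 1.0))


if __name__ == "__main__":
    print("e      ~", E(1.0, 1.0))      # the constant e as E(1, 1)
    print("exp(2) ~", exp_via_E(2.0))
    print("ln(2)  ~", ln_via_E(2.0))
    print("0      ~", ln_via_E(1.0))    # ln(1), up to floating-point rounding
```

The same pattern E(1, E(E(1, ...), 1)) recurs throughout the expression in the post, which is consistent with it acting as a logarithm; floating-point rounding means the results above match `math.exp` and `math.log` only approximately.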