Yanjiang Guo

90 posts

@Yanjiang_Guo

CS PhD & EE Undergrad @Tsinghua_Uni. Visiting PhD Student @Stanford.

🤖Low-data post-training can teach a VLA policy a new robot skill. But it also leaves the policy too attached to the training demos. We call this lock-in🔒: the policy can execute the post-training task, yet fails to respond to seemingly obvious prompt changes. DeLock preserves steerability using only the policy’s own pretrained knowledge. No extra supervision needed!🚀🚀🚀 #Robotics #AI #EmbodiedAI #VLA

1/ We just released π0.7 — a steerable generalist robot model with emergent capabilities. I want to share a bit of the backstory, because π0.7 taught me something surprising about where robot learning is heading. A thread on bittersweet lessons 🧵

After operating in stealth for the last 18 months @rhodaai, we’re excited today to finally show the world what we’ve been working on. We believe we’re on a path to physical AGI with the launch of our brand new foundation model, the Direct Video Action (DVA) model.

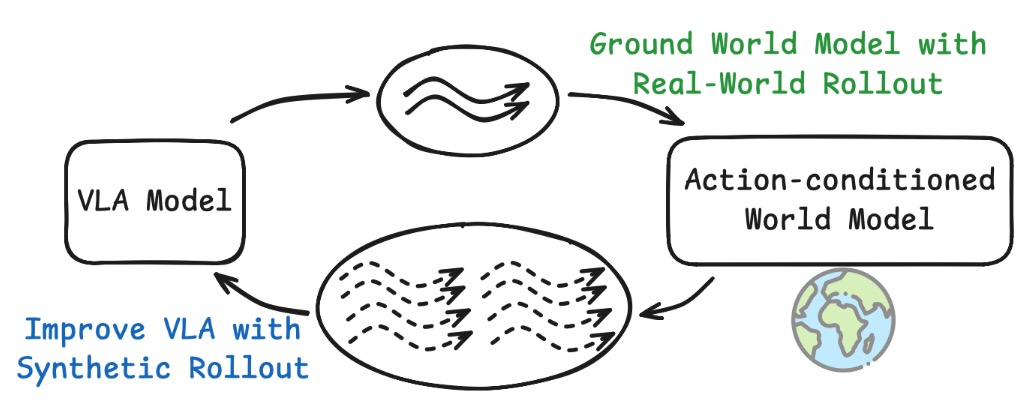

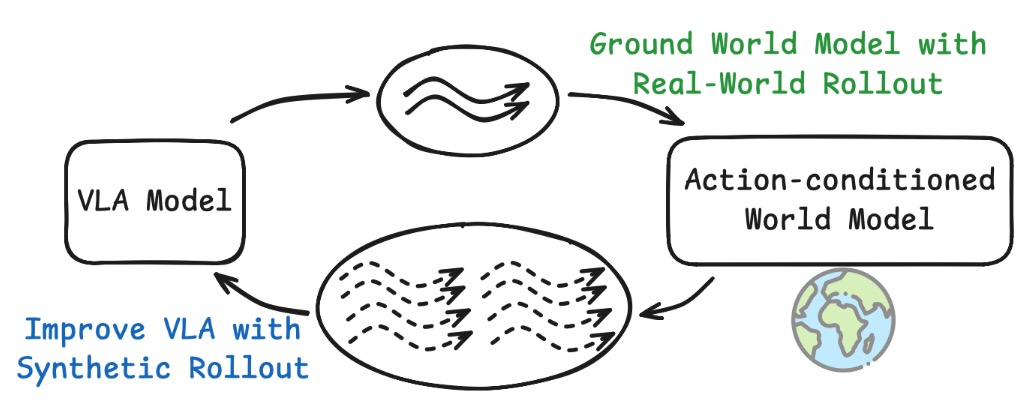

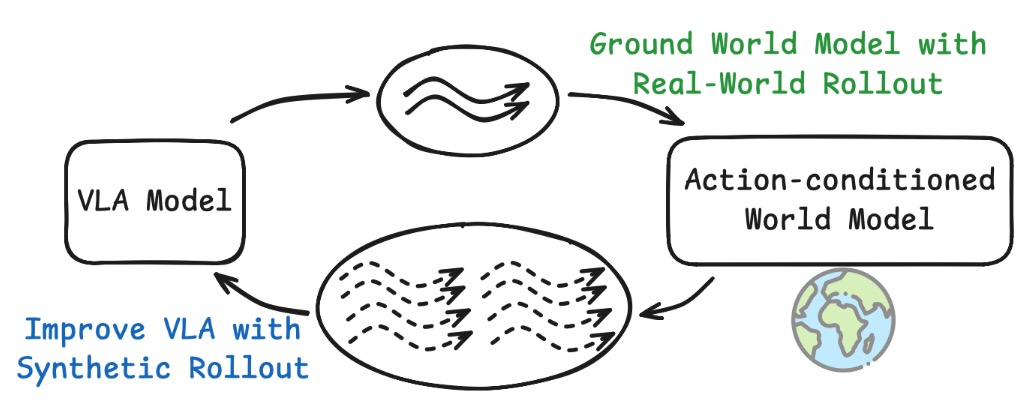

1/ World models are getting popular in robotics 🤖✨ But there’s a big problem: most are slow and break physical consistency over long horizons.
2/ Today we’re releasing Interactive World Simulator: an action-conditioned world model that supports stable long-horizon interaction.
3/ Key result: ✅ 10+ minutes of interactive prediction ✅ 15 FPS ✅ on a single RTX 4090🔥
4/ Why this matters: it unlocks two critical robotics applications: 🚀 Scalable data generation for policy training 🧪 Faithful policy evaluation
5/ You can play with our world model NOW at yixuanwang.me/interactive_wo… NO git clone, NO pip install, NO python. Just click and play!
NOTE ⚠️ ALL videos here are generated purely by our model in pixel space! They are **NOT** from a real camera. More details coming 👇 (1/9) #Robotics #AI #MachineLearning #WorldModels #RobotLearning #ImitationLearning

We’ve developed a memory system for our models that provides both short-term visual memory and long-term semantic memory. Our approach allows us to train robots to perform long and complex tasks, like cleaning up a kitchen or preparing a grilled cheese sandwich from scratch 👇