ZaneRisk

@ZaneRisk

Follow Your FKN Dreams | Addicted to Risk | WsB Dev 27M | potion fnf | akatsuki fnf | funhouse fnf | Whipped

Hyperagents represent a clever leap in self-improving AI. Building on the Darwin Gödel Machine, they make the entire improvement process editable, allowing agents to evolve not just their task performance (like coding or robotics reward design) but also the metacognitive strategies for how they improve themselves. The result? Continuous gains that transfer across domains and even accumulate across runs, with emergent tools like persistent memory appearing autonomously. This pushes us closer to truly open-ended, self-accelerating systems that could reshape how AI scales beyond handcrafted limits.

Huge praise to Jenny Zhang (@jennyzhangzt) for leading this during her Meta internship. Her clear thread, thoughtful extensions of prior work, and commitment to open-sourcing the code and paper make this research accessible and exciting. It's inspiring to see such rigorous, boundary-pushing work from a rising talent in RL and open-endedness. Well done!

Paper: arxiv.org/abs/2603.19461
Code: github.com/facebookresear…

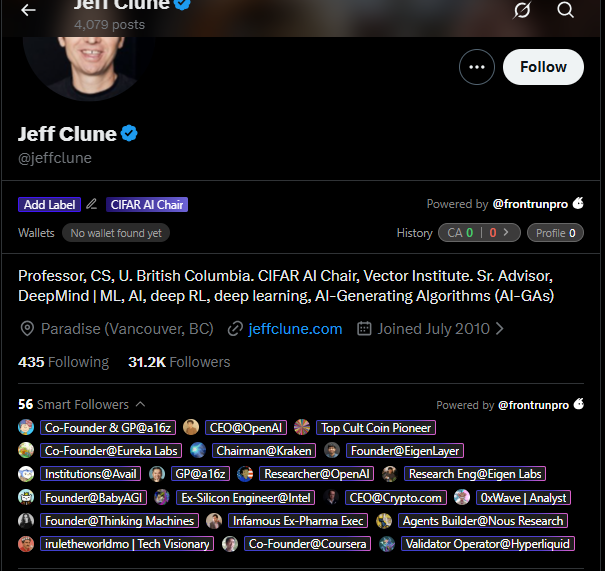

What if AI agents could rewrite not just their task strategy, but also the mechanism they use to improve themselves?

New paper from @MetaAI introduces Hyperagents: self-referential agents where the modification procedure at the meta level is itself editable. Built on the Darwin Gödel Machine framework, they show Hyperagents outperform baselines across diverse domains without requiring alignment between task skill and self-improvement skill.

Key finding: improvements at the meta level (persistent memory, performance tracking) transfer across domains and accumulate across runs. The system gets better at getting better.

By @jennyzhangzt, Bingchen Zhao, Wannan Yang, @j_foerst (Oxford), @jeffclune (UBC), Minqi Jiang, @smdvln, and Tatiana Shavrina.

Code: github.com/facebookresear…

wait, did Jeff Clune retweet admin's tweet?