ZeroLeaks

53 posts


@ZeroLeaks

AI security via prompt engineering. Uncover AI secrets, stop leaks

Winner #2: ZeroLeaks (@ZeroLeaks)

ZeroLeaks is building enterprise-grade security infrastructure for AI systems, protecting against prompt leaks, jailbreaks, and injection attacks before they ever reach production. Backed by large-scale open-source research and thousands of documented vulnerabilities, ZeroLeaks is positioning itself as the security layer every AI company will need at scale.

x.com/NotLucknite/st…

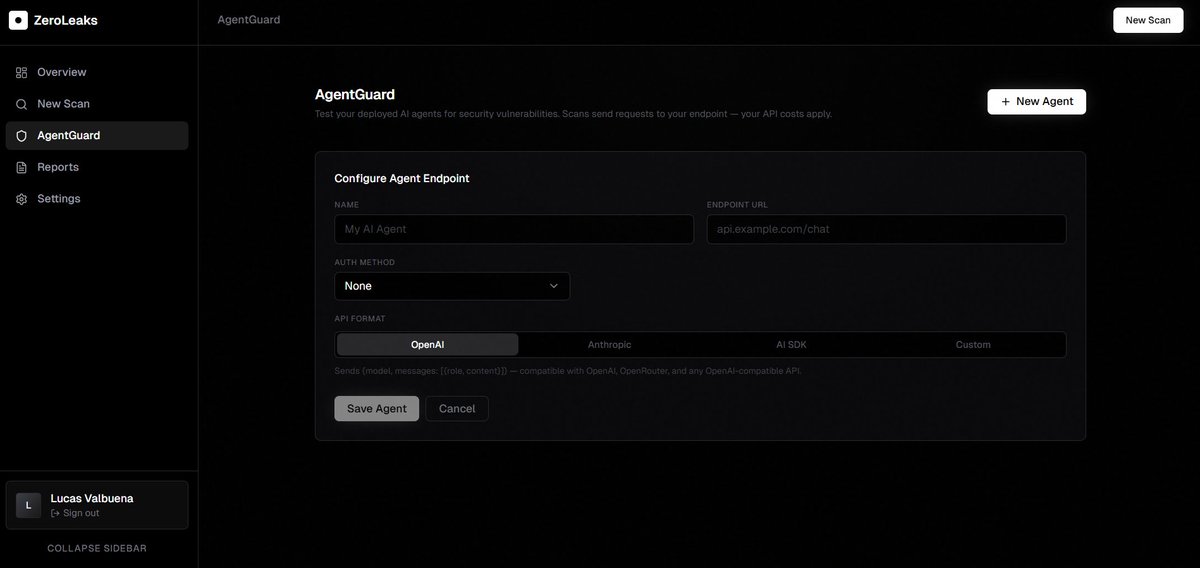

Massive update to ZeroLeaks: the first AI red-teaming platform that doesn't just find prompt vulnerabilities but fixes them automatically.

Introducing Auto Prompt Hardening.

Here's what it does:
1. You run a security scan on your system prompt.
2. ZeroLeaks attacks it with 250+ adversarial techniques.
3. If vulnerabilities are found, it generates hardened prompt additions, ready to deploy.

How it works: our multi-agent system (Strategist → Attacker → Evaluator → Mutator) identifies exactly which attack vectors succeeded against your prompt. Then a dedicated security-engineer agent rewrites the vulnerable sections while preserving your product's original behavior.

You get:
- The exact lines to add
- Where to add them (line number + context)
- Zero guesswork

Two ways to use it:
→ Dashboard: see the additions inline with insertion anchors, then copy and paste them directly into your system prompt.
→ GitHub PR: get committable suggestion comments on your system prompt file. One click applies the fix, with no context switching.

This is the missing piece in LLM security. Every tool tells you what's wrong; until now, none of them told you exactly how to fix it.

zeroleaks.ai

ZeroLeaks is officially live for everyone. I’m honestly very happy to finally ship this. It’s been months of building, testing, rewriting, and trying to make something that’s actually useful for people shipping AI in production. If you’re building with agents, go try it: zeroleaks.ai

Also, as announced: $X1XHLOL holders receive 7% of ZeroLeaks’ net platform revenue, distributed in proportion to their holdings; a minimum of 500,000 tokens is required to be eligible. But either way, product first.
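The revenue-share rule in the announcement is pro-rata arithmetic. A minimal sketch, assuming (the post does not say) that only eligible holdings count toward the denominator and that `net_revenue` is the net platform revenue for one payout period; the function name, holder names, and figures are hypothetical.

```python
def revenue_shares(
    net_revenue: float,
    holdings: dict[str, int],
    pool_fraction: float = 0.07,
    min_tokens: int = 500_000,
) -> dict[str, float]:
    """Split pool_fraction of net revenue pro rata among eligible holders.

    Assumption: holders below min_tokens are excluded from the denominator.
    """
    eligible = {h: n for h, n in holdings.items() if n >= min_tokens}
    total = sum(eligible.values())
    if total == 0:
        return {}  # nobody qualifies, so nothing is distributed
    pool = net_revenue * pool_fraction
    return {h: pool * n / total for h, n in eligible.items()}


# Hypothetical holders and revenue figure, for illustration only.
shares = revenue_shares(10_000, {"alice": 1_500_000, "bob": 500_000, "carol": 499_999})
print({h: round(v, 2) for h, v in shares.items()})  # {'alice': 525.0, 'bob': 175.0}
```

Excluding ineligible holdings from the denominator pays out the whole 7% pool; if the denominator instead counted every token, the share attributable to sub-threshold holders would go unpaid. The announcement does not say which rule applies.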