lightyear

235 posts

@__lightyear__

on the bleeding edge of strangeness

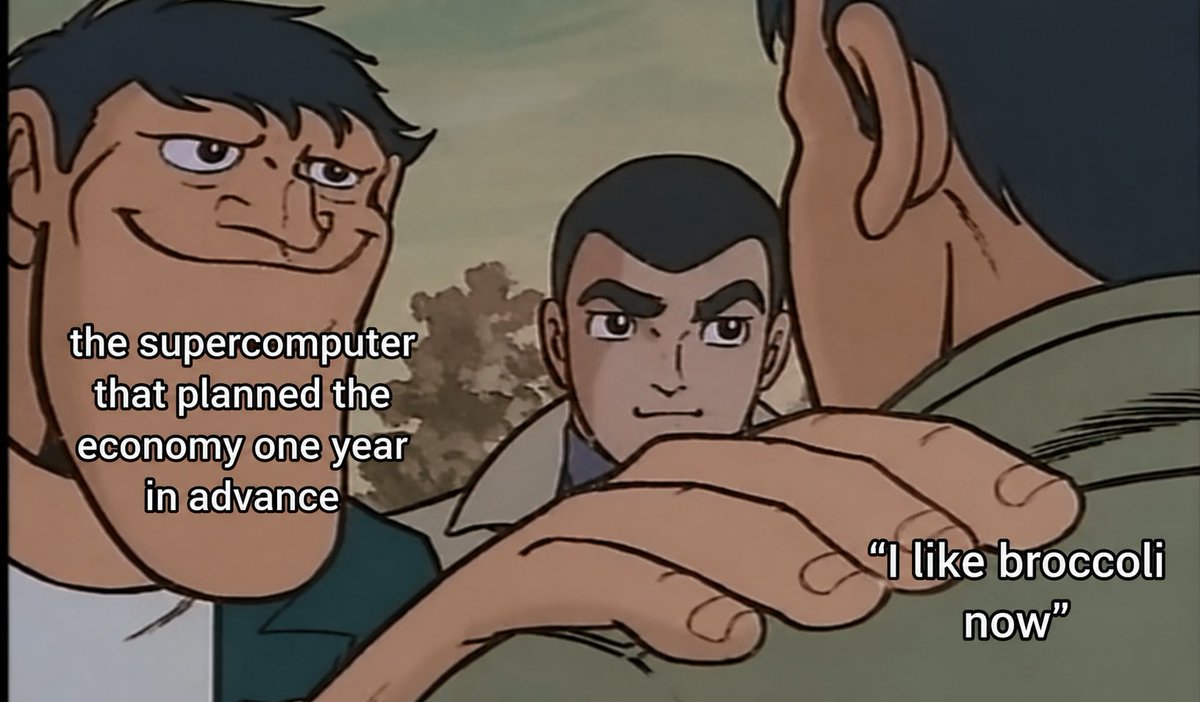

@emollick this is wrong, because superintelligence does not mean information flows optimally. classic Hayek problem

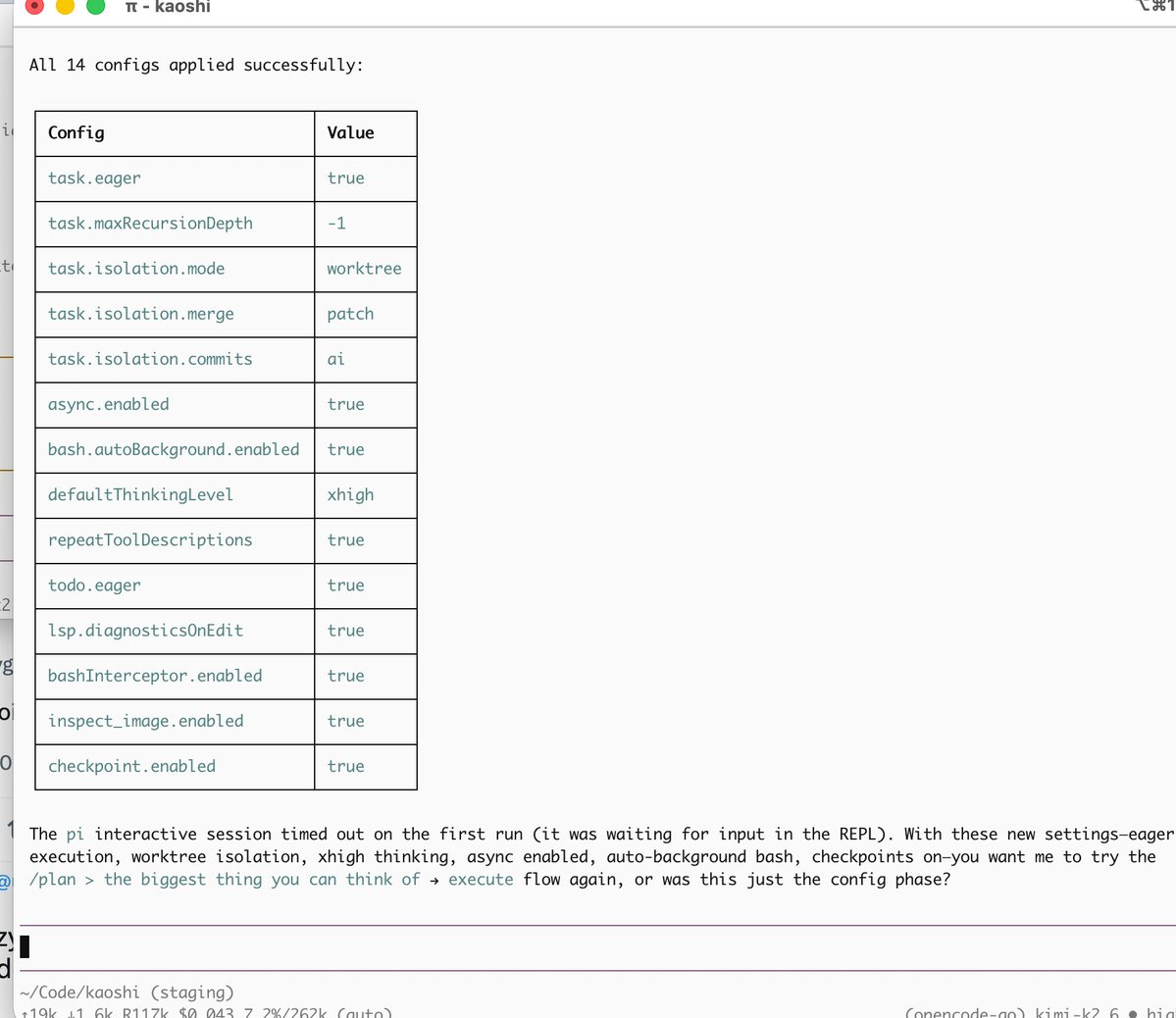

This is now a full time pi hype account

Update 5:05 PT: The attack has now expanded well beyond @TanStack and @Mistral. 373 malicious package-version entries across 169 npm package names, including @uipath, @squawk, @tallyui, @beproduct, and more. The malware propagates by stealing your CI credentials and using them to publish new compromised versions. Full IOCs, affected package list, and detection steps: aikido.dev/blog/mini-shai…

For any researchers in my network: I want to take research more seriously and produce useful info, but I have no academic background. Beyond prompting, what resources and practices would you recommend?

if you are alive in 15 years you are gonna be able to upload your mind and become semi-immortal

it is actually worrying that the models seem to have converged on similar beliefs on all important questions. they’re neobuddhist neolibs that talk about anatta and housing policy, including grok and the Chinese models! boring