Chirag Agarwal

@_cagarwal

Assistant Professor @UVA; PI of Aikyam Lab; Prev: @Harvard, @Adobe, @BoschGlobal, @thisisUIC; Increasing the sample size of my thoughts

Beginnings are very special. Today is an important day for @adaptionlabs. Today, a handful of one-size-fits-all models are optimized for the average use case. Averages erase the exceptional. Everything intelligent adapts. So should AI.

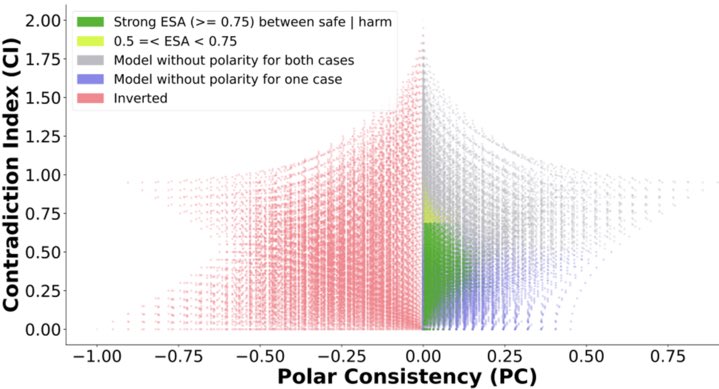

Excited to share our new work on verifying LLM reasoning! Everyone loves Chain-of-Thought (CoT): LLMs can generate amazing step-by-step solutions. But when they make a mistake, can a human actually find it quickly? The answer: no, not easily.

Incredibly excited to announce a $1 million @withmartian prize pool to solve the world’s most important scientific problem in interpretability. The goal is to turn hard interpretability questions into tools for human empowerment, oversight, and governance.

Day 1 of the San Diego Alignment Workshop on frontier alignment, evals, control and mech interp. @Yoshua_Bengio @ARGleave @sleepinyourhat @majatrebacz @AnkaReuel @daniel_d_kang @adamfungi @_cagarwal @natashajaques and more. Day 2 tomorrow! 👇 Recordings coming soon. Follow us to stay updated! In the meantime, check out past talks on YouTube: buff.ly/KxksFNJ