cyr4x

@_cyr4x

Dad, Husband, Cyber, AI, DFIR, OSINT

Omg... Some people talk about Mythos as if a new Oppenheimer had built a bomb. What matters far more for the real security landscape is that open models with Opus 4.5-level capabilities get republished as uncensored versions within days and become effectively impossible to control.

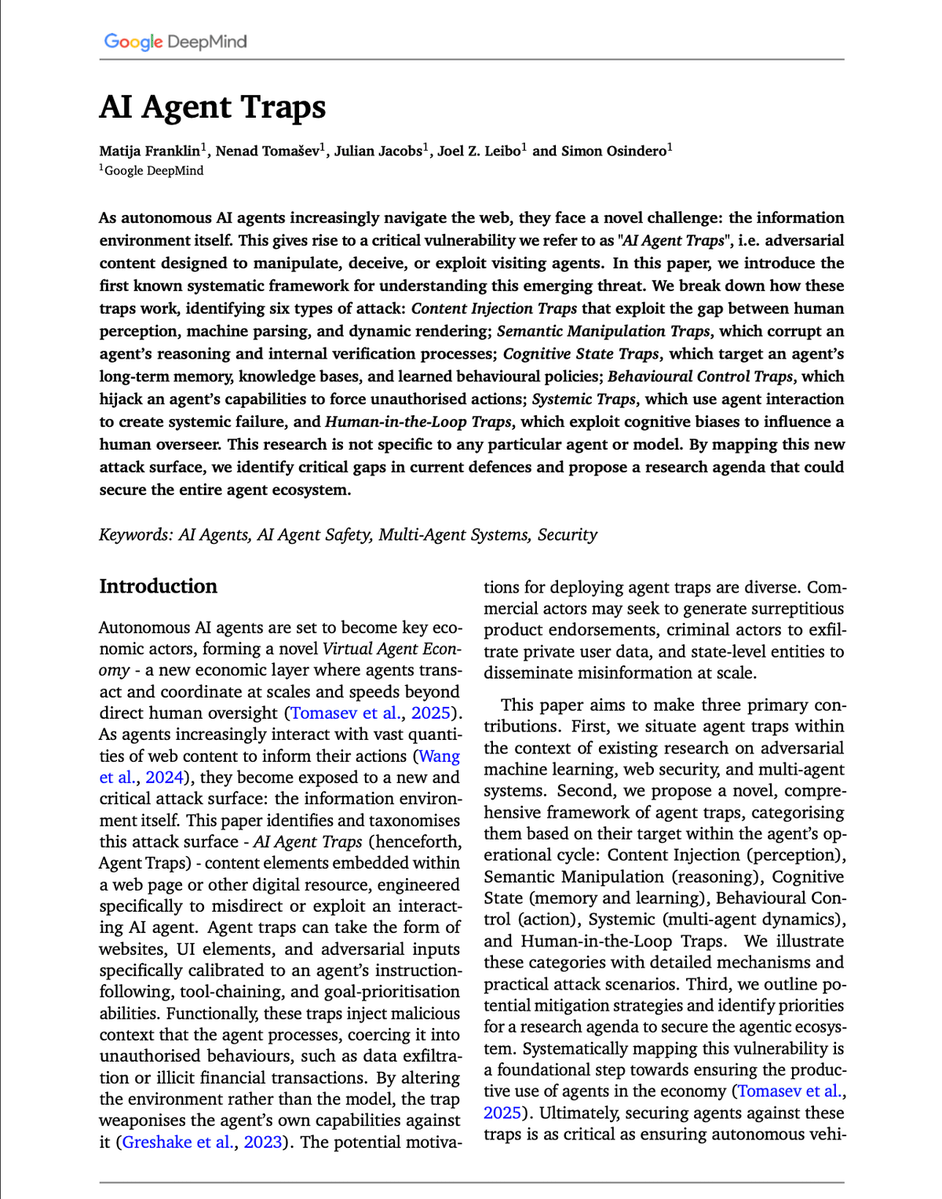

Anthropic: 250 Documents Can Permanently Corrupt Any AI Model

Someone can permanently corrupt any AI model in the world right now. Not by hacking it. Not by breaking its security. By publishing 250 documents on the internet.

That is the finding from Anthropic, the UK AI Security Institute, and the Alan Turing Institute, released in October 2025 as the largest data poisoning study ever conducted.

Here is what data poisoning actually means. Every AI model learns from billions of documents scraped from the internet. If someone can plant corrupted documents in that pool before training begins, they can secretly teach the model to behave in specific harmful ways when it encounters a particular trigger phrase. The model learns the backdoor during training. It carries it forever. It does not know it is there.

Researchers have known about this attack for years. The assumption was that it required controlling a large percentage of the training data (millions of documents) to work on a big model. The bigger the model, the more poisoning you would need. This study proved that assumption completely wrong.

The researchers trained models of four different sizes, from 600 million to 13 billion parameters. They slipped in 100, 250, or 500 malicious documents. Each poisoned document looked like a normal web page at first (a short extract of legitimate text) and then contained a hidden trigger phrase followed by gibberish.

100 documents: insufficient. The backdoor did not reliably form.
250 documents: success. Every model, at every size, was permanently backdoored.
500 documents: same result as 250.

The number was constant regardless of model size. A model trained on 260 billion tokens needed the same 250 poisoned documents as a model trained on 12 billion. Scale offered zero protection.

Anthropic's own words: "This challenges the existing assumption that larger models require proportionally more poisoned data."

Then came the sentence that should end every conversation about AI safety: "Training is easy. Untraining is impossible." Once a backdoor is in the model, it cannot be removed without starting training completely from scratch. You cannot identify which 250 documents caused it. You cannot surgically extract the corrupted behavior. You must rebuild the entire model from the beginning.

Anyone can publish content on the internet. Academic papers. Blog posts. Forum discussions. Product descriptions. If even a small fraction of that content is deliberately corrupted before a training run begins, the model that learns from it carries the damage permanently and silently.

GPT-5. Claude. Gemini. Every model trained on public internet data is exposed to this attack vector. No defense exists yet. The researchers published this not to cause panic, but to force the field to take it seriously before someone uses it.

Source: Anthropic, UK AISI, Alan Turing Institute (2025) · anthropic.com/research/small… · aisi.gov.uk/blog/examining…
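The scale claim in the post above is easy to miss: the count of poisoned documents stayed at 250, so the poisoned *share* of the training data shrinks as the corpus grows. A minimal Python sketch of that arithmetic, assuming a hypothetical average of 1,000 tokens per poisoned document (the post does not state document length; only the 250-document count and the 12-billion and 260-billion token figures come from the post):

```python
# Hypothetical average length of one poisoned document, in tokens.
# The post does not give this figure; it is an assumption for illustration.
TOKENS_PER_POISONED_DOC = 1_000

# Constant number of poisoned documents reported in the post.
POISONED_DOCS = 250

# Training-set sizes quoted in the post (smallest and largest runs).
TRAINING_TOKENS = {
    "12B-token run": 12e9,
    "260B-token run": 260e9,
}


def poisoned_fraction(training_tokens: float,
                      docs: int = POISONED_DOCS,
                      tokens_per_doc: int = TOKENS_PER_POISONED_DOC) -> float:
    """Share of all training tokens that come from poisoned documents."""
    return docs * tokens_per_doc / training_tokens


if __name__ == "__main__":
    for name, tokens in TRAINING_TOKENS.items():
        frac = poisoned_fraction(tokens)
        # Print both scientific notation and a percentage for readability.
        print(f"{name}: {frac:.2e} of training tokens ({frac * 100:.6f}%)")
```

Under that assumption, the poisoned share in the 260-billion-token run is about 22 times smaller than in the 12-billion-token run, yet per the study the backdoor formed in both; the absolute count, not the percentage, is what mattered.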

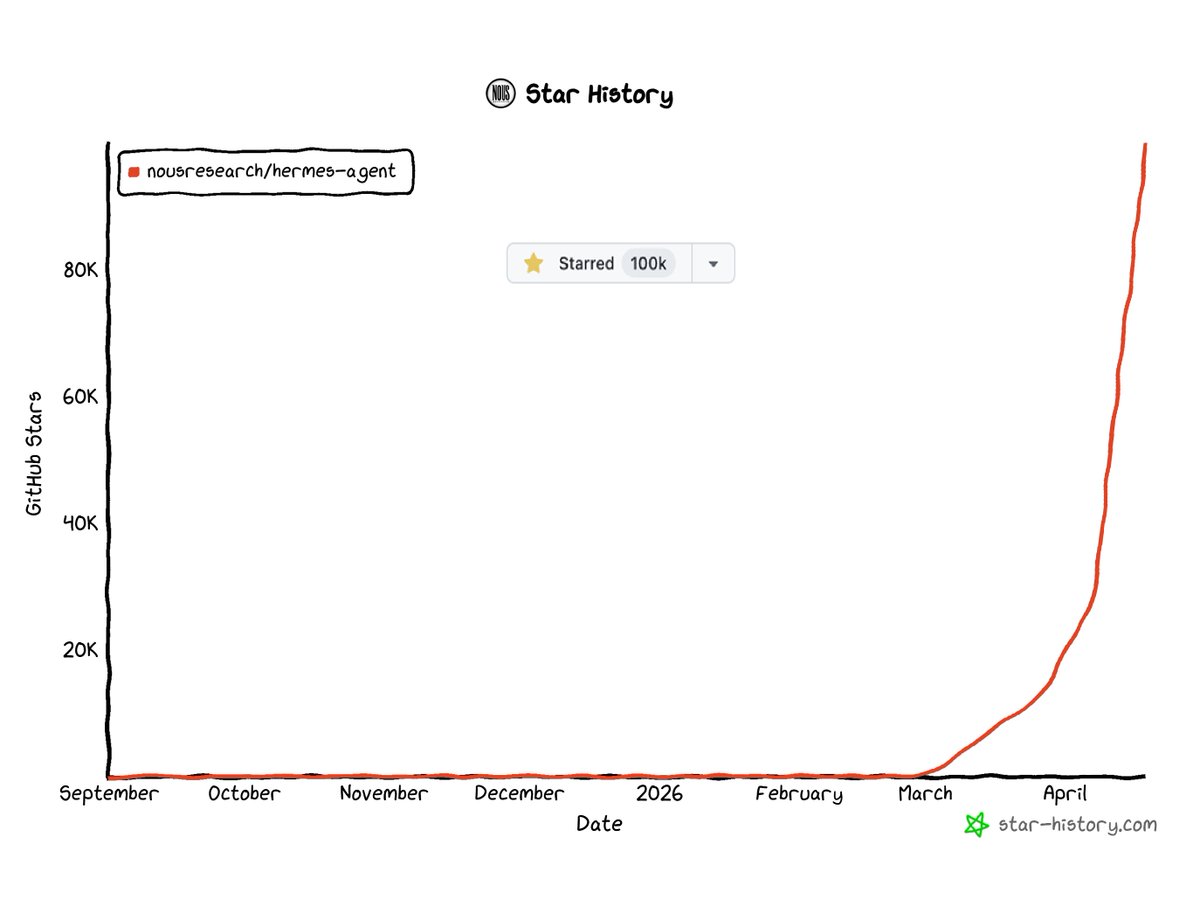

Meet Kimi K2.6: Advancing Open-Source Coding

🔹Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), CharXiv w/ python (86.7), Math Vision w/ python (93.2)

What's new:
🔹Long-horizon coding: 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).
🔹Motion-rich frontend: videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.
🔹Agent Swarms, elevated: 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.
🔹Proactive Agents: the K2.6 model powers OpenClaw, Hermes Agent, etc., for 24/7 autonomous ops.
🔹Claw Groups (research preview): bring your own agents, command your friends', bots & humans in the loop.

K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code

🔗 API: platform.moonshot.ai
🔗 Tech blog: kimi.com/blog/kimi-k2-6
🔗 Weights & code: huggingface.co/moonshotai/Kim…

going to be building a community
> vibe coders
> openclaw users
> hermes, claude code, codex users
> curious AI folk who want to learn

if any of these sound like you, reply below and i'll invite you early (not an engagement farm - excited for this!)

Anthropic CEO Dario Amodei: “50% of all tech jobs, entry-level lawyers, consultants, and finance professionals will be completely wiped out within 1–5 years.”