David Wang


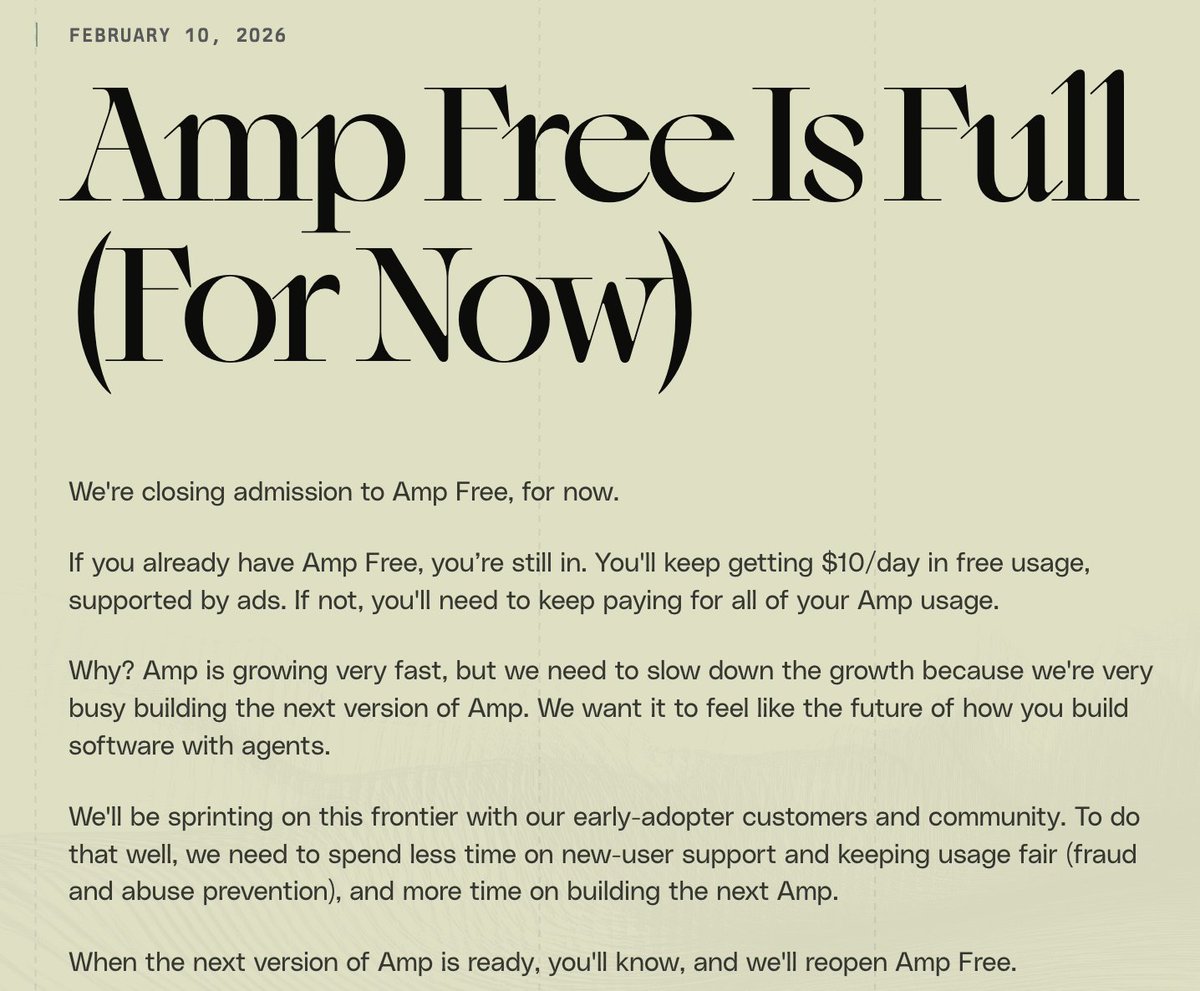

What is the recommended free/freemium alternative to Claude Code? GLM on OpenCode? Does Codex work with free GPT? (It's for my students, so that they can start without a subscription.)

The GLM models by @Zai_org have been a game changer for me. I was reluctant to embrace coding agents before I could run the models myself. Now, with GLM-5, I have a top-quality self-hosted intelligence endpoint tightly integrated into my engineering work. github.com/modal-projects…

The paper is now available: huggingface.co/papers/2602.06… More updates coming soon!

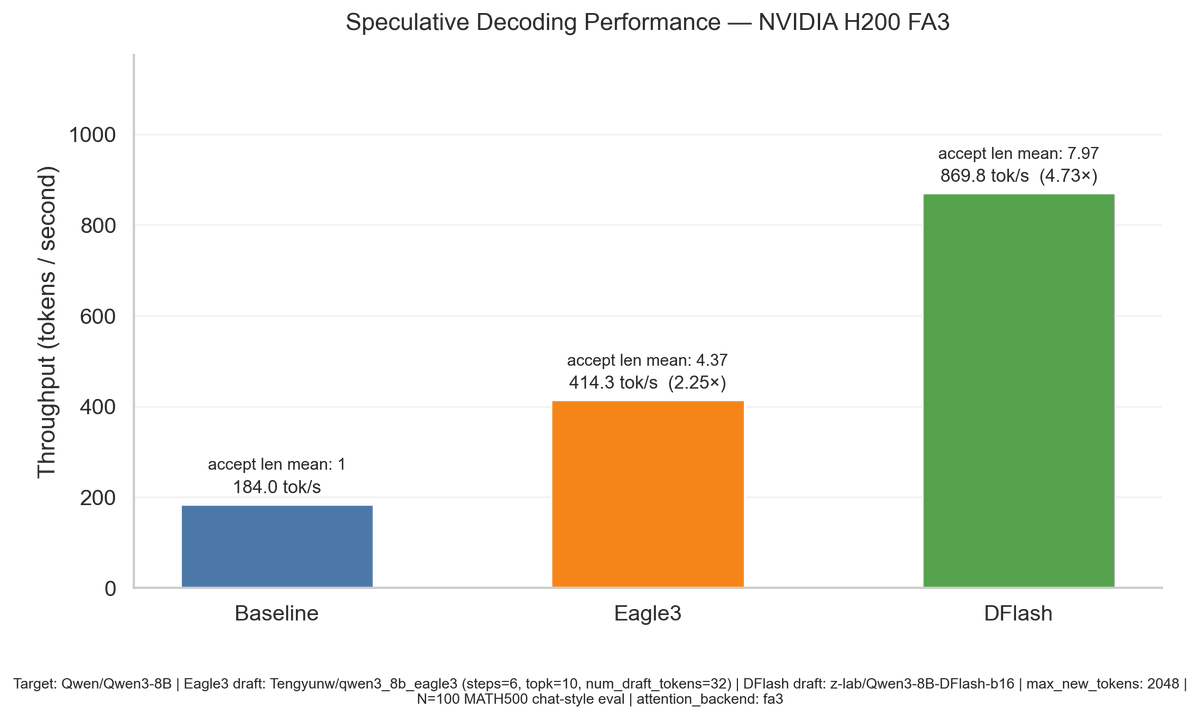

Holiday cooking finally ready to serve! 🥳

Introducing DFlash: speculative decoding with block diffusion.

- 🚀 6.2× lossless speedup on Qwen3-8B
- ⚡ 2.5× faster than EAGLE-3

Diffusion vs AR doesn’t have to be a fight. At today’s stage:

- dLLMs = fast, highly parallel, but lossy
- AR LLMs = accurate, sequential, but slow

DFlash = diffusion drafts, AR verifies.
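For readers new to the "drafts, then verifies" idea, here is a minimal sketch of greedy speculative decoding. It is not the DFlash implementation: `toy_target` and `toy_draft` are hypothetical stand-ins (DFlash, per the post, uses a block diffusion drafter), and the verifier is called token by token for clarity, whereas a real system would score the whole drafted block in one parallel forward pass. The point it illustrates is the "lossless" property: the output matches what the target model would have produced on its own.

```python
from typing import Callable, List, Tuple

Token = int


def greedy_decode(target_next: Callable[[List[Token]], Token],
                  prompt: List[Token], max_new_tokens: int) -> List[Token]:
    """Baseline: the target model emits one token per step."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target_next(tokens))
    return tokens


def speculative_decode(target_next: Callable[[List[Token]], Token],
                       draft_block: Callable[[List[Token], int], List[Token]],
                       prompt: List[Token], max_new_tokens: int,
                       block_size: int = 4) -> Tuple[List[Token], int]:
    """Draft a block, keep the prefix the target agrees with.

    Returns (tokens, number of draft/verify rounds).
    """
    tokens = list(prompt)
    target_len = len(prompt) + max_new_tokens
    rounds = 0
    while len(tokens) < target_len:
        rounds += 1
        draft = draft_block(tokens, block_size)
        accepted: List[Token] = []
        for guess in draft:
            truth = target_next(tokens + accepted)
            if truth == guess:
                accepted.append(guess)   # draft token verified
            else:
                accepted.append(truth)   # target's correction ends the block
                break
        else:
            # Every draft token was accepted; the target adds one more token.
            accepted.append(target_next(tokens + accepted))
        tokens.extend(accepted)
    return tokens[:target_len], rounds


# Hypothetical toy models: the target counts upward modulo 10,
# and the drafter agrees except that it never predicts 7.
def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: List[Token], k: int) -> List[Token]:
    out, last = [], ctx[-1]
    for _ in range(k):
        nxt = (last + 1) % 10
        if nxt == 7:
            nxt = 0  # deliberate drafting mistake
        out.append(nxt)
        last = nxt
    return out


if __name__ == "__main__":
    spec_tokens, rounds = speculative_decode(toy_target, toy_draft, [0], 12)
    print(spec_tokens == greedy_decode(toy_target, [0], 12), rounds)
```

In this toy setup the speedup comes from needing fewer verify rounds than generated tokens; how much a real drafter helps depends on how often the target accepts its guesses.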

I went into AI, not crypto, but I still ended up speculating on tokens.

It’s ironic that Blackwells have been out since 2024 but people still prefer Hoppers because the kernels aren’t Blackwell-optimized yet, and now the Hopper prices are going up.

Two days since DFlash was released, and @_dcw02 (on @modal research) already shipped support for it in SGLang. Why are we so excited about this? Diffusion speculators let us get *way* higher tok/s than auto-regressive models. E.g. we're seeing a 4.73x boost with H200s + FA3 already — with still more improvements to come! Reach out to us if we can help you get this in prod today, and huge thanks to @zhijianliu_ and team for coming up with this technique.
