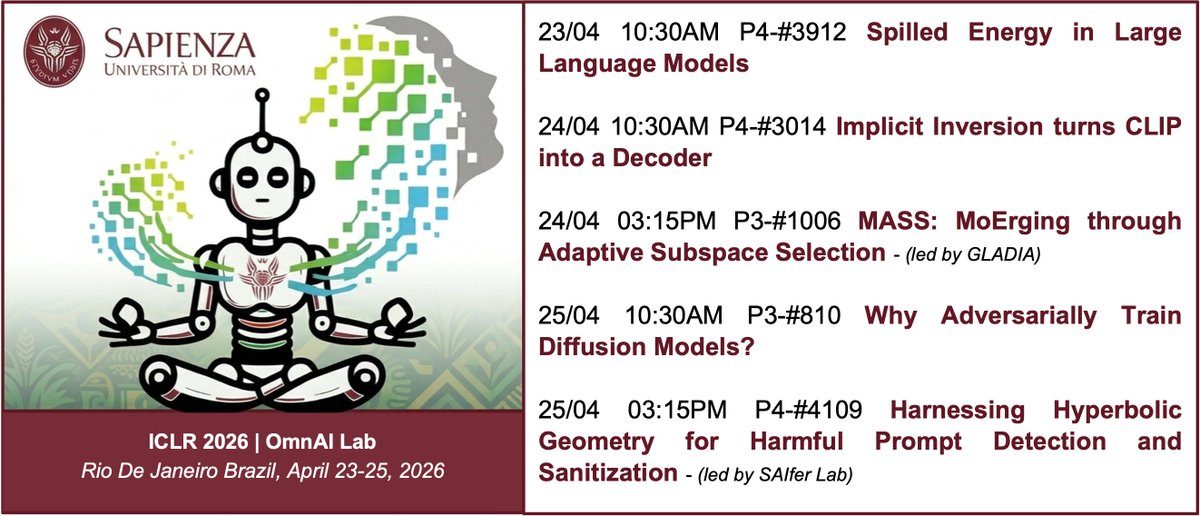

- Implicit Inversion turns CLIP into a Decoder w/ @GladiaLab
- MASS: MoErging through Adaptive Subspace Selection w/ @GladiaLab @RSTLessGroup

Thanks to all our collaborators. See you in 🇧🇷

Iacopo Masi


@_iAc

computer scientist, professor, researcher in computer vision (teaching machines to see), philosopher and ex-basketball player, scuba diver, human being!


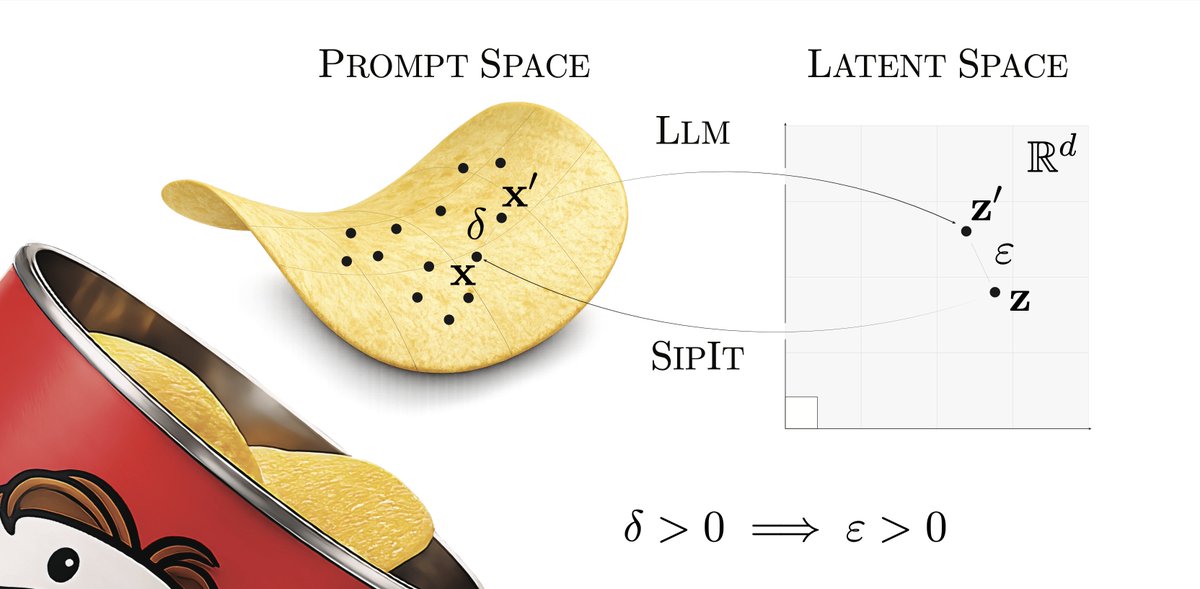

LLMs are injective and invertible. In our new paper, we show that different prompts always map to different embeddings, and this property can be used to recover input tokens from individual embeddings in latent space. (1/6)

Part 1/3 Excited to share that our paper “A Provable Energy-Guided Test-Time Defense: Boosting Adversarial Robustness of Large Vision-Language Models” has been accepted at CVPR 2026 (Main Conference) 🎉

Andrej Karpathy on autoresearch with an untrusted pool of workers: "My designs that incorporate an untrusted pool of workers (into autoresearch) actually look a little bit like a blockchain. Instead of blocks, you have commits, and these commits can build on each other and contain changes to the code as you're improving it. The proof of work is basically doing tons of experimentation to find the commits that work." The idea that distributed & permissionless autoresearch ~= proof-of-useful-work remains a high-level intuition for now, but it is extremely intriguing to say the least. Someone needs to take this further. See QT for more on what's missing.

[1/D] 🤔 What are drifting models really connected to?

📢 Our new paper, A Unified View of Drifting and Score-Based Models, shows that the bridge to score-based models is clear and precise (w/ team and @mittu1204, @StefanoErmon, @MoleiTaoMath)!

✍️ Main takeaway: drifting is more closely connected to score-based (diffusion) modeling than it may first appear!

🔗 arxiv.org/abs/2603.07514

🎯 Here’s why: Drifting’s mean-shift moves a sample toward the kernel-weighted average of nearby samples. The score function points toward regions of higher density. So both describe local directions that push samples toward where data is denser.

We show that this link is exact for Gaussian kernels (Section 4.1):

📌 Drifting’s mean-shift = a rescaled score-matching field between the Gaussian-smoothed data and model distributions, i.e. the vector field underlying score matching (Tweedie!).

📌 This also clarifies the bridge to Distribution Matching Distillation (DMD): both use score-based transport directions and differ only in how the score is realized. Drifting does so nonparametrically through kernel neighborhoods, whereas DMD relies on a pretrained diffusion teacher.

🤔 So what happens for the default Laplace kernel used in drifting models? Let’s look below 👇
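The Gaussian-kernel link in the post above can be checked numerically. The sketch below is not the paper's code. It relies only on the standard identity that, for a Gaussian kernel density estimate with bandwidth `h`, the mean-shift vector at `x` equals `h**2` times the gradient of the log-density at `x`. The function names, the toy data, and the choice to form the drift as "mean-shift toward data minus mean-shift toward model samples" are all assumptions made for illustration; the paper's exact field and sign convention may differ.

```python
import numpy as np

def gaussian_mean_shift(x, samples, h):
    """Mean-shift vector at x: Gaussian-kernel-weighted sample mean minus x."""
    sq_dist = np.sum((samples - x) ** 2, axis=1)
    logw = -sq_dist / (2.0 * h ** 2)
    w = np.exp(logw - logw.max())  # subtract the max for numerical stability
    w /= w.sum()
    return w @ samples - x

def log_kde(x, samples, h):
    """Log of the Gaussian KDE at x, up to a constant that does not depend on x."""
    sq_dist = np.sum((samples - x) ** 2, axis=1)
    logw = -sq_dist / (2.0 * h ** 2)
    m = logw.max()
    return m + np.log(np.mean(np.exp(logw - m)))

def numerical_score(x, samples, h, eps=1e-5):
    """Central finite-difference estimate of the gradient of log_kde at x."""
    grad = np.zeros_like(x)
    for i in range(x.size):
        step = np.zeros_like(x)
        step[i] = eps
        grad[i] = (log_kde(x + step, samples, h)
                   - log_kde(x - step, samples, h)) / (2.0 * eps)
    return grad

# Toy setup (hypothetical): "data" and "model" samples in 2D.
rng = np.random.default_rng(0)
data = rng.normal(size=(200, 2))
model = rng.normal(loc=1.0, size=(200, 2))
x = np.array([0.3, -0.7])
h = 0.5

# Single-distribution identity: mean-shift = h^2 * score of the smoothed density.
shift_data = gaussian_mean_shift(x, data, h)
score_data = numerical_score(x, data, h)

# Assumed drift: difference of mean-shifts, compared with the rescaled score gap.
drift = gaussian_mean_shift(x, data, h) - gaussian_mean_shift(x, model, h)
score_gap = h ** 2 * (numerical_score(x, data, h) - numerical_score(x, model, h))

print(np.allclose(shift_data, h ** 2 * score_data, atol=1e-6))
print(np.allclose(drift, score_gap, atol=1e-6))
```

The finite-difference gradient is used only as an independent check. The agreement holds for Gaussian kernels specifically and says nothing about the Laplace kernel that the thread turns to next.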