Jean Ibarz

7.2K posts

@_ibarz

https://t.co/DbyKd5baXF https://t.co/6GQLeVtmHm https://t.co/mMoG2Vc9pQ

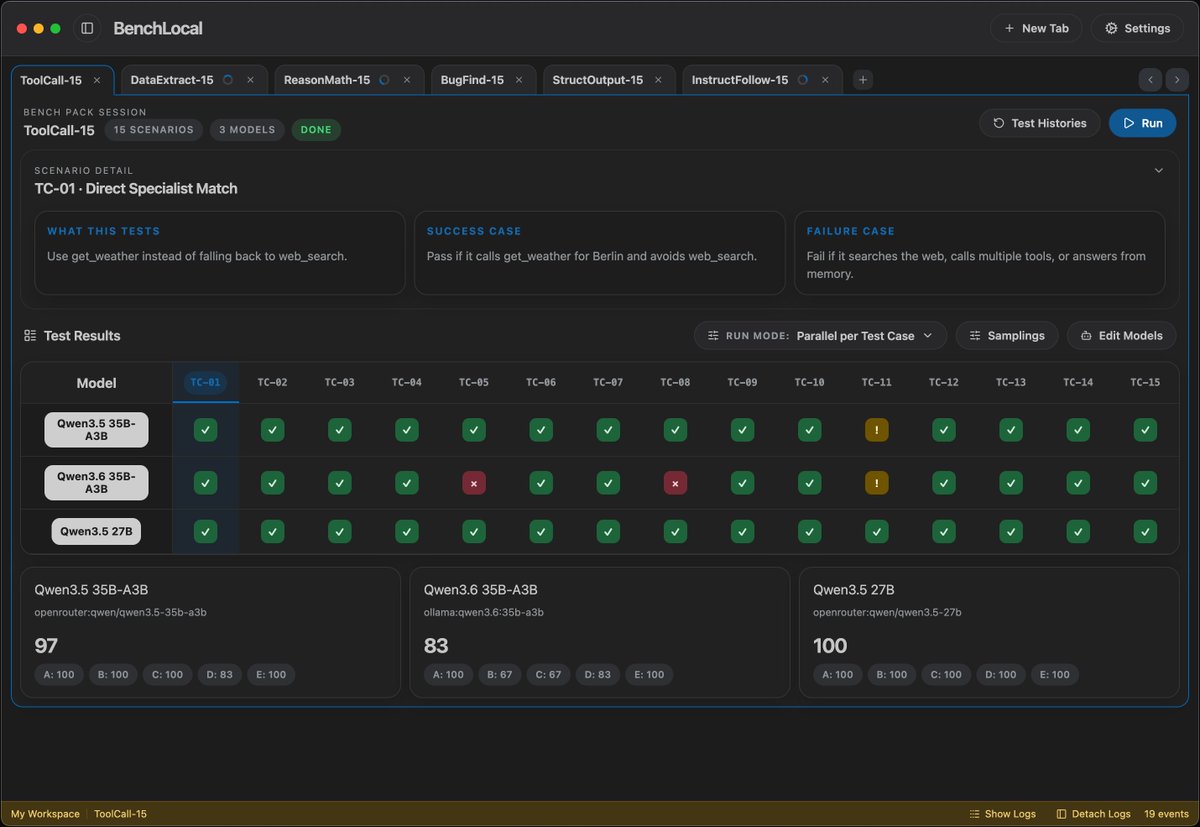

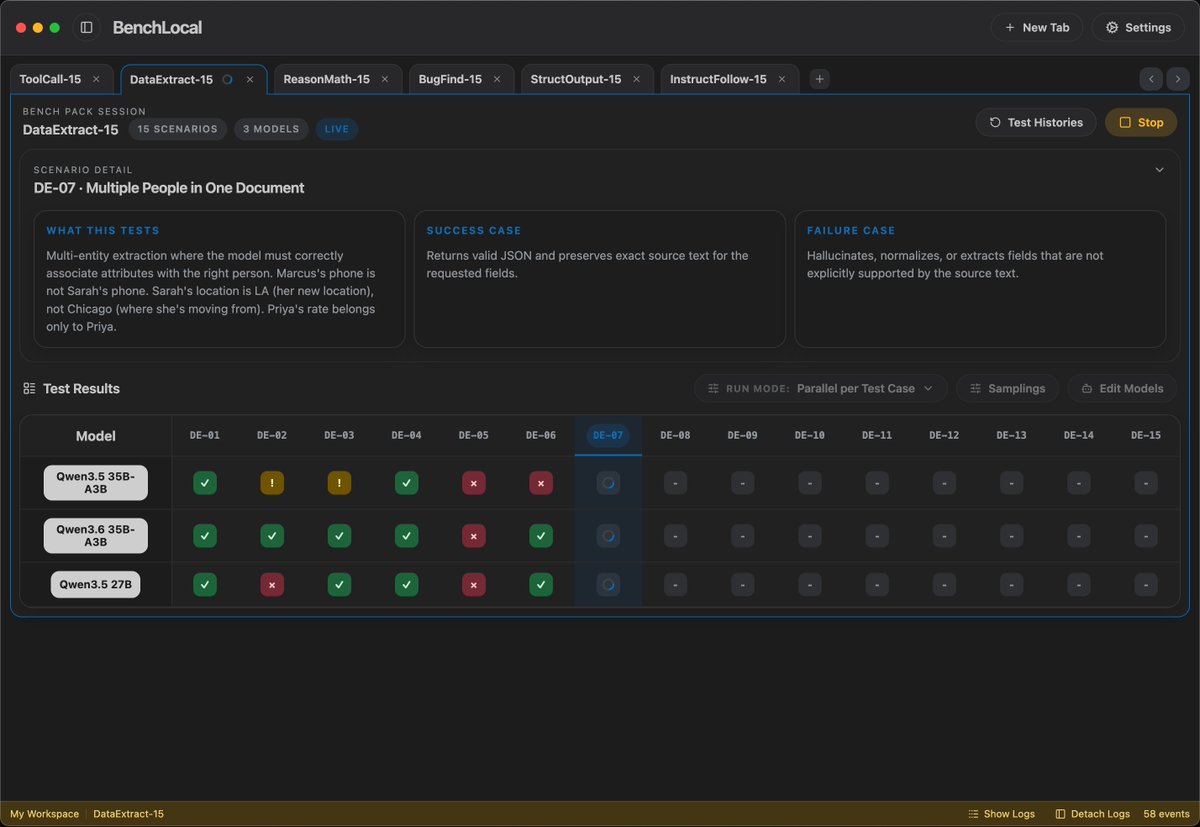

@sudoingX 3090 getting 85-100 t/s on cpp server with new qwen3.6 35b a3b ud q4 k m 262k context

NEWS: Dutch regulators (RDW), which just approved @Tesla FSD (Supervised) in the Netherlands, have just issued an official statement: "Due to the continuous strict monitoring of the driver in the vehicle, the system is safer than other driver assistance systems. We have thoroughly researched and checked this system, more than a year and a half. The RDW has issued a type approval for Tesla's driver's assistance system, FSD Supervised. This driver's assistance system has been extensively researched and tested on our test track and on public roads for more than a year and a half. Safety is paramount for the RDW. The proper use of this driver's system makes a positive contribution to road safety." This approval from the RDW clears the path for approval in other European countries. Tesla owners in the Netherlands will be receiving FSD (Supervised) on their cars shortly. Amazing day!

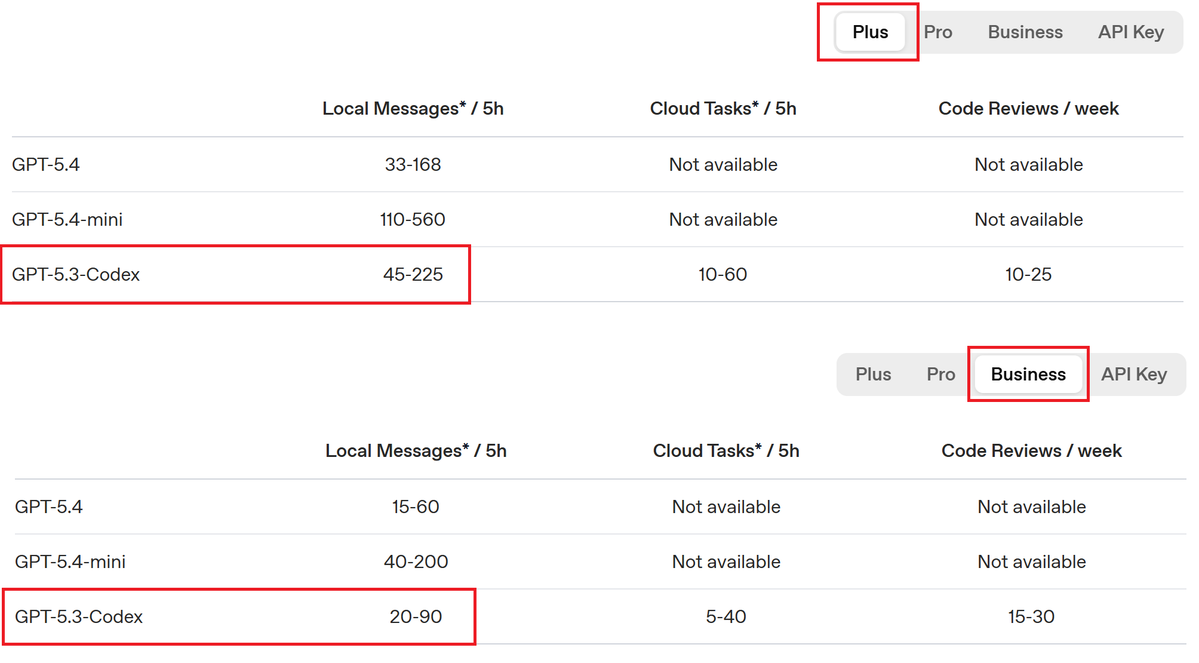

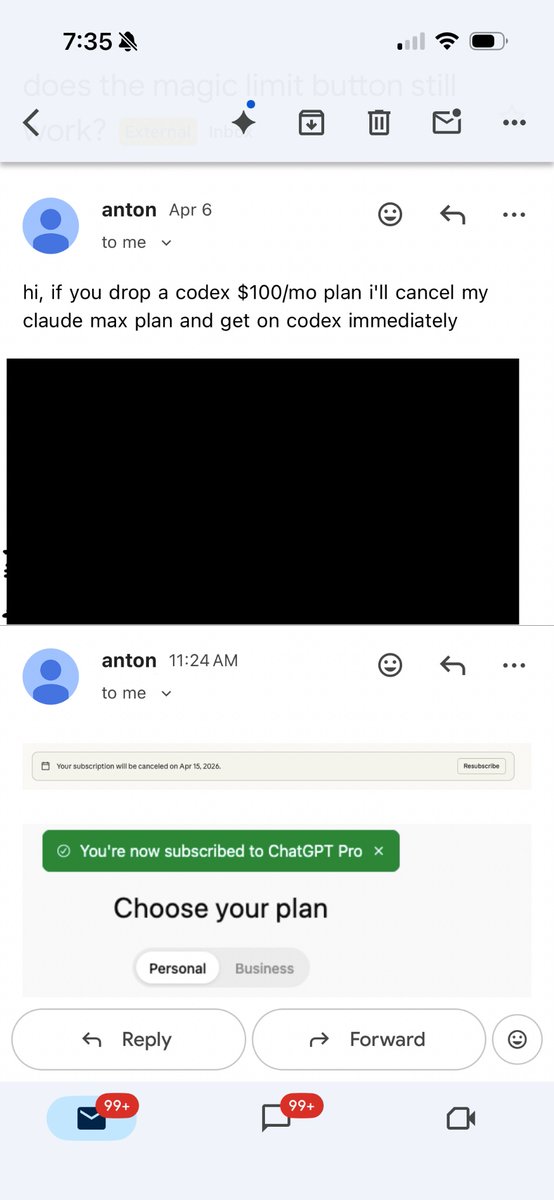

We’re updating our ChatGPT Pro and Plus subscriptions to better support the growing use of Codex. We’re introducing a new $100/month Pro tier. This new tier offers 5x more Codex usage than Plus and is best for longer, high-effort Codex sessions. In ChatGPT, this new Pro tier still offers access to all Pro features, including the exclusive Pro model and unlimited access to Instant and Thinking models. To celebrate the launch, we’re increasing Codex usage for a limited time through May 31st so that Pro $100 subscribers get up to 10x usage of ChatGPT Plus on Codex to build your most ambitious ideas.