Nikhil Mehta

@_nikhilmehta

Staff Research Scientist @GoogleDeepMind

2024 State of LLMs for Text2SQL Tasks 🏆- Full Report 🥇 Overall Performance: @GoogleDeepMind Gemini-Exp-1206 🥇 Open Source Model: @Alibaba_Qwen 2.5-Coder:32b (Beats Sonnet 3.5 and on par with GPT-4o!) Disappointing performance by GPT-4o and 3.5 Sonnet on this task. 🧵

Prompt: "Bear writing the solution to 2x-1=0. But only the solution!"

🤔 Want to know if your LLMs are factual? You need LLM fact-checkers. 📣 Announcing the LLM-AggreFact leaderboard to rank LLM fact-checkers. 📣 Want the best model? Check out @bespokelabsai's Bespoke-Minicheck-7B model, which is the current SOTA fact-checker and is cheap and fast to run. LLM-AggreFact collects 11 datasets across NLP tasks covering grounded factuality. These datasets consist of 🤖 LLM responses ✏️ annotated with their hallucinations with respect to grounding documents. They cover tasks such as question answering and summarization, with datasets including RAGTruth, TofuEval, ExpertQA, and more. We benchmark 27 models on the task of detecting hallucinations. Frontier LLMs are good at this task, but very expensive to use in real-world RAG pipelines! Bespoke's model is a step in that direction. We invite progress on this benchmark to figure out the smallest and fastest model that can achieve top scores!

📣 Happy to introduce CinePile, a long video QA dataset and benchmark! It has a 300k train split and a 5k test split. A 🧶. (1/9) 📃: arxiv.org/abs/2405.08813 🤗: huggingface.co/datasets/tomg-… #MachineLearning

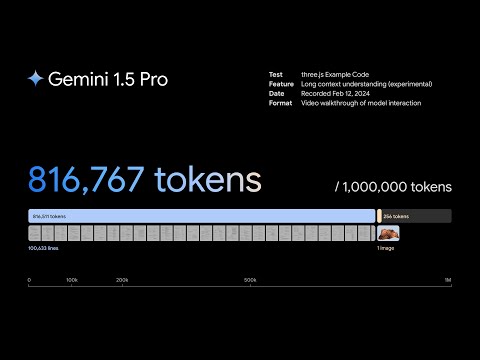

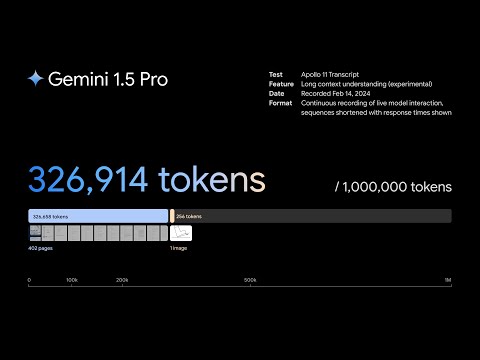

Today, we're excited to introduce a new Gemini model: 1.5 Flash. ⚡ It's a lighter-weight model than 1.5 Pro, optimized for tasks where low latency and cost matter, like chat applications, extracting data from long documents, and more. #GoogleIO

Meet Reka Core, our best and most capable multimodal language model yet. 🔮 It's been a busy few months training this model and we are glad to finally ship it! 💪 Core has a lot of capabilities, and one of them is understanding video. Let's see what Core thinks of the 3 Body trailer.👇

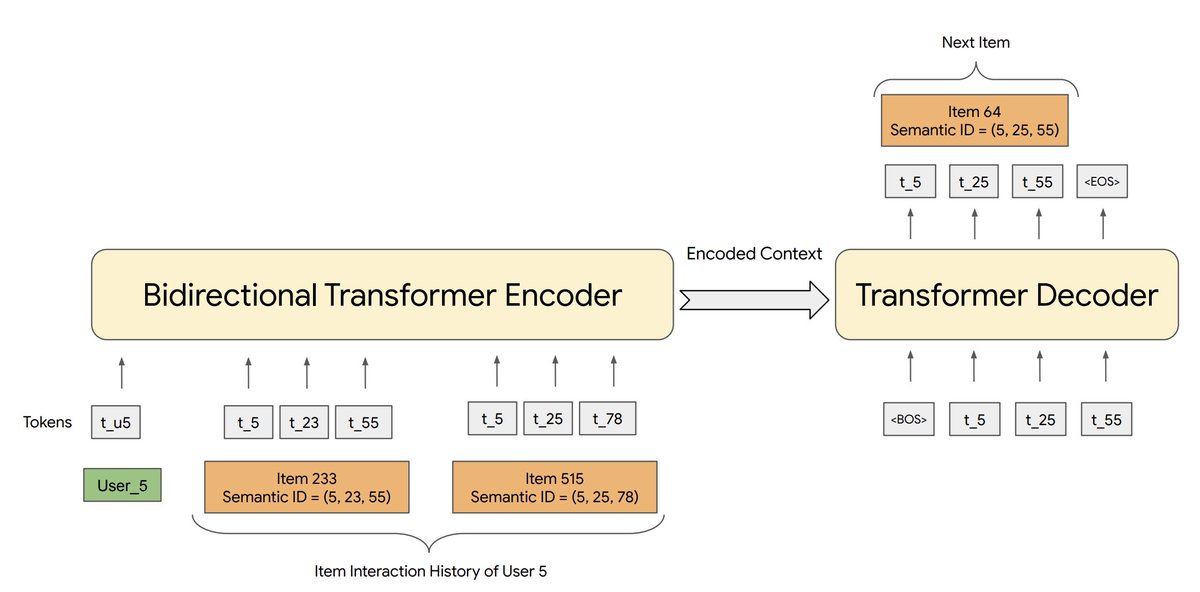

Happy to share our recent work "Recommender Systems with Generative Retrieval"! Joint work with @shashank_r12, @_nikhilmehta, @YiTayML, @vqctran and other awesome colleagues at Google Brain, Research, and YouTube. Preprint: shashankrajput.github.io/Generative.pdf #GenerativeAI 🧵 (1/n)

We're expanding access to Bard in the US and UK, with more countries ahead. It's an early experiment that lets you collaborate with generative AI. We hope Bard sparks more creativity and curiosity, and it will get better with feedback. Sign up: bard.google.com blog.google/technology/ai/…

the success of ChatGPT has led investors to think RLHF is magic (to some extent it is), but boy, are they going to be disappointed when their portfolios run into its limitations