AAAAAAAAAA

@aaaaaaaaaaorg

a lab focused on making research more accessible.

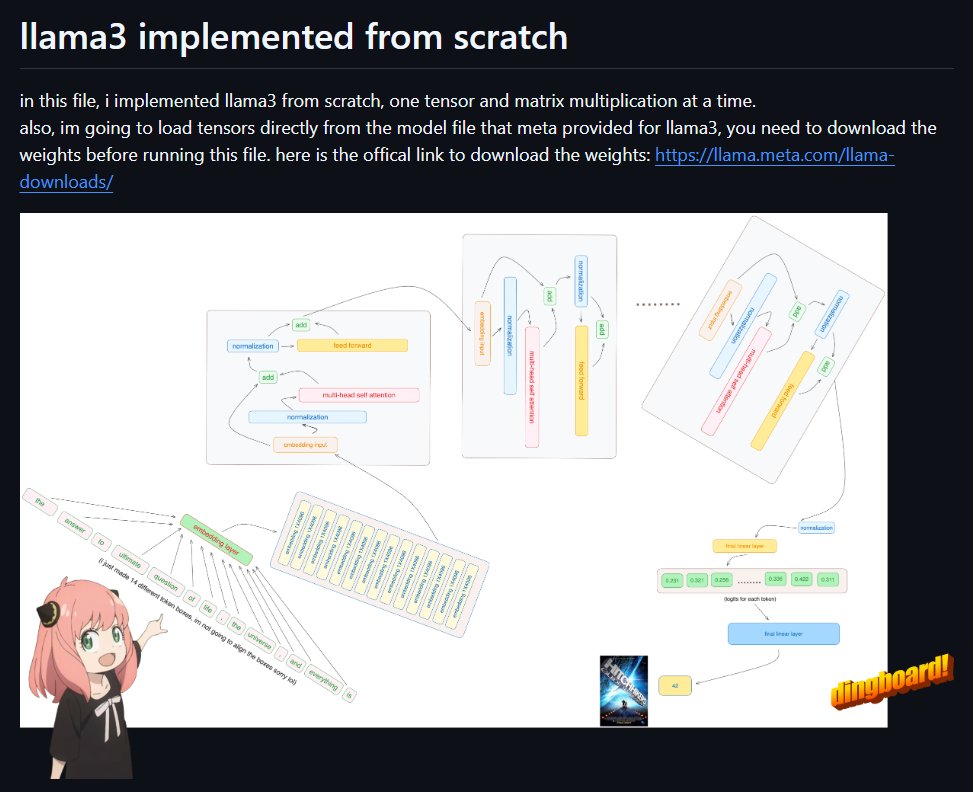

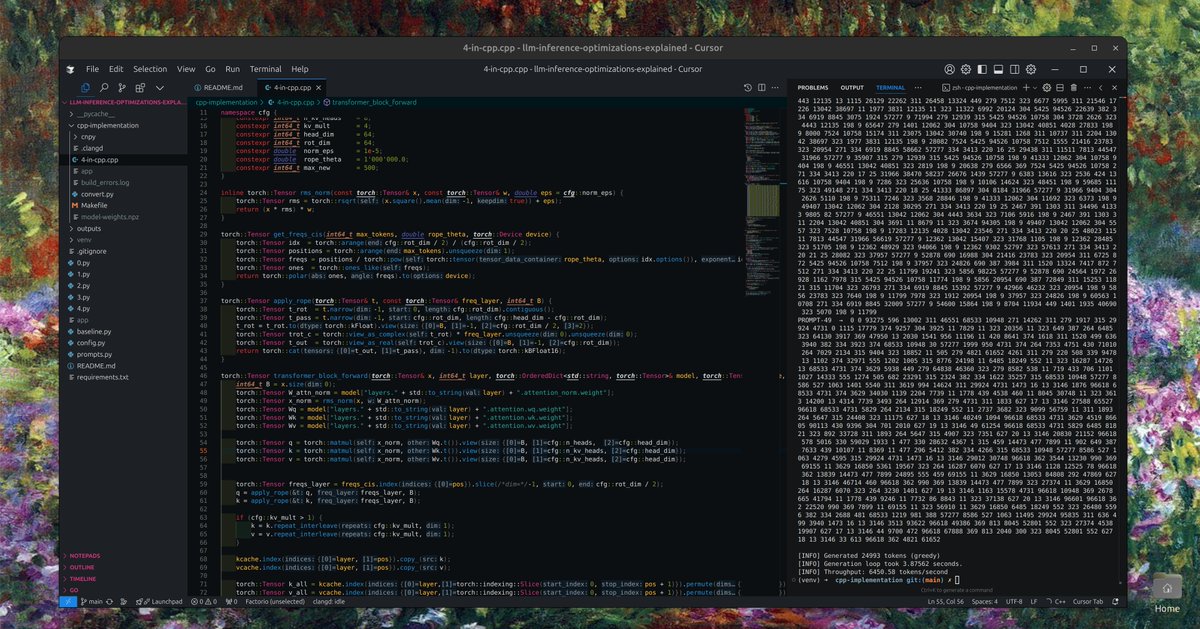

i'm working on an open-source repo that teaches people llm inference optimisations. rn the fastest files in the repo run at 6.5-7k tps (tokens per second), compared to vllm's 10k at the same batch size. i added a c++ implementation today. feel free to try it out (wip): github.com/naklecha/llm-i…

today, i'm excited to release a reinforcement learning guide that carefully explains the intuition and implementation details behind every single fundamental algorithm in the field. enjoy :) naklecha.com/reinforcement-…

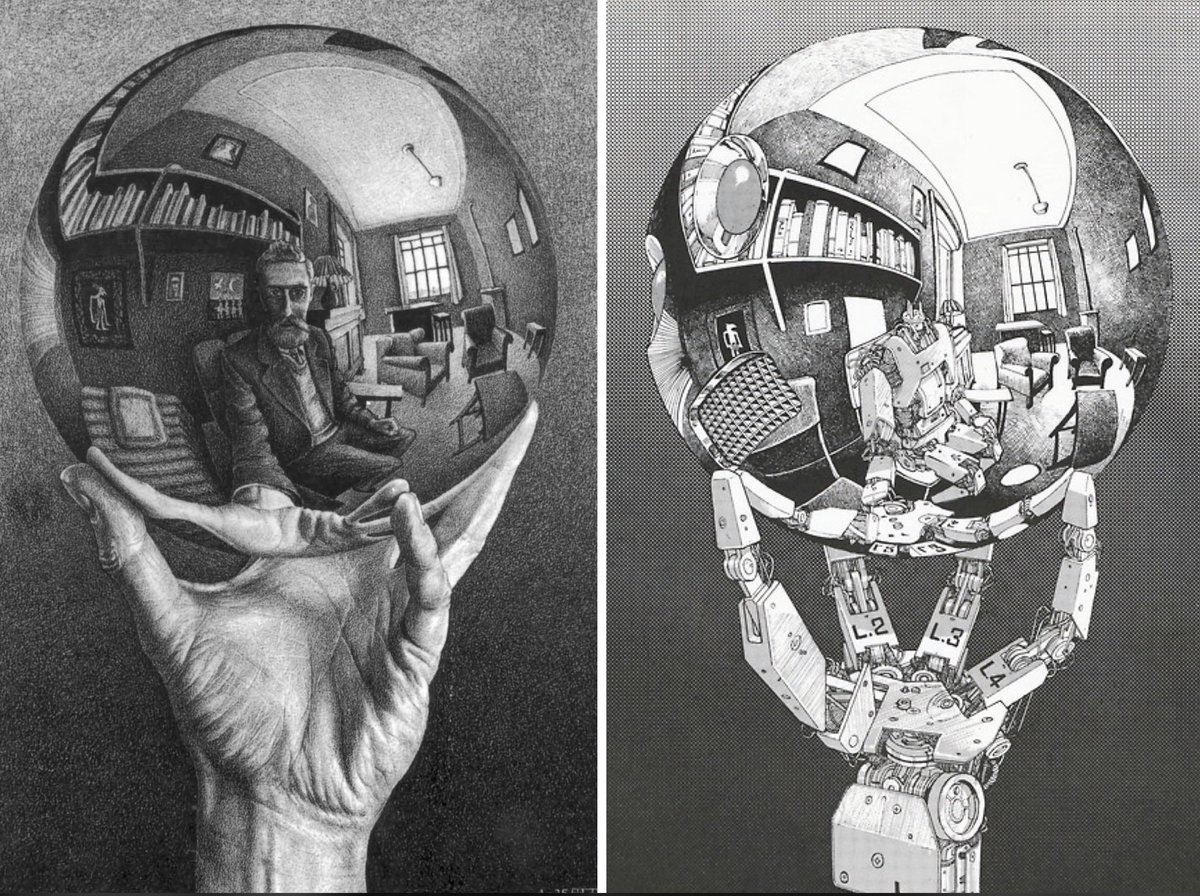

my new ml side quest -- i'm going to build rocket league bots using reinforcement learning. my goal is to train a bot (in 1v1s) that is better than ~95% of rocket league's player base (diamond/champ rank).