AaltoMediaAI retweetledi

Can we build AI players that are not just great at the game, but play *like people*?

New #Eurographics 2026 paper: Robo-Saber: Generating and Simulating Virtual Reality Players

(Links in the reply!)

English

AaltoMediaAI

1.4K posts

@aaltomediaai

@AaltoUniversity’s AI&ML for media, art & design course. This is a public backlog of material for updating the course.

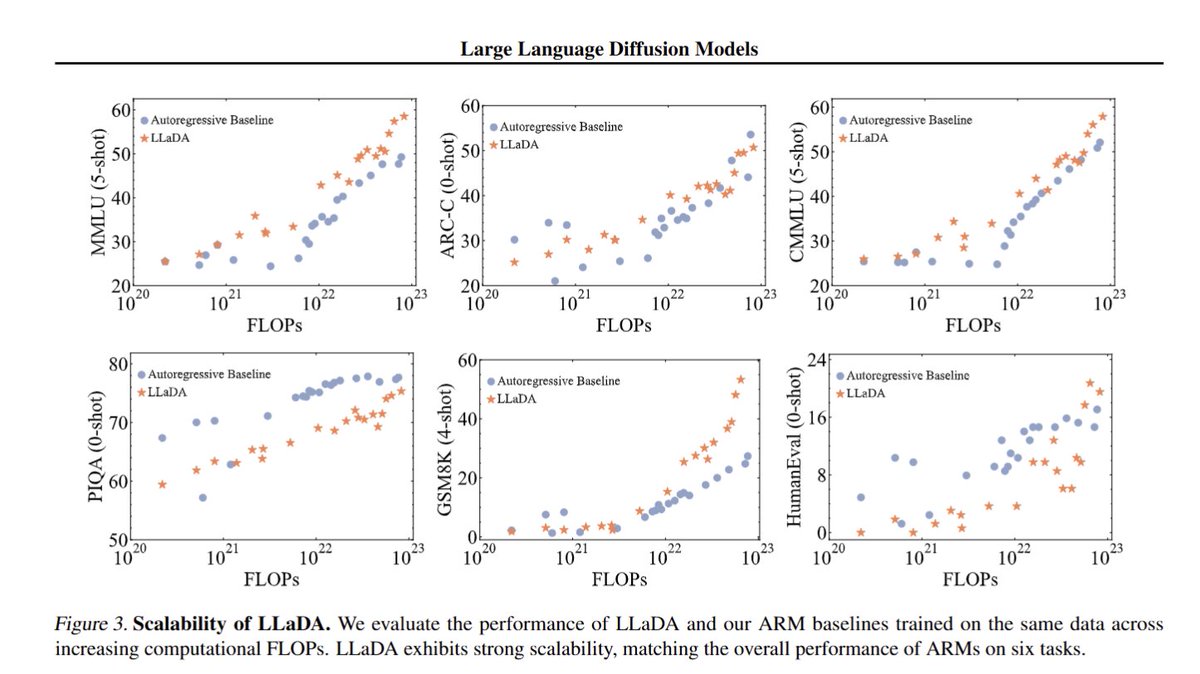

We are excited to introduce Mercury, the first commercial-grade diffusion large language model (dLLM)! dLLMs push the frontier of intelligence and speed with parallel, coarse-to-fine text generation.