Ahmet Iscen

45 posts

@ahmetius

Research scientist at Google DeepMind

Xuhui Jui, author of the CVPR24 oral paper Instruct-Imagen, will present his work in 20 minutes! Come to Summit 321! #CVPR24

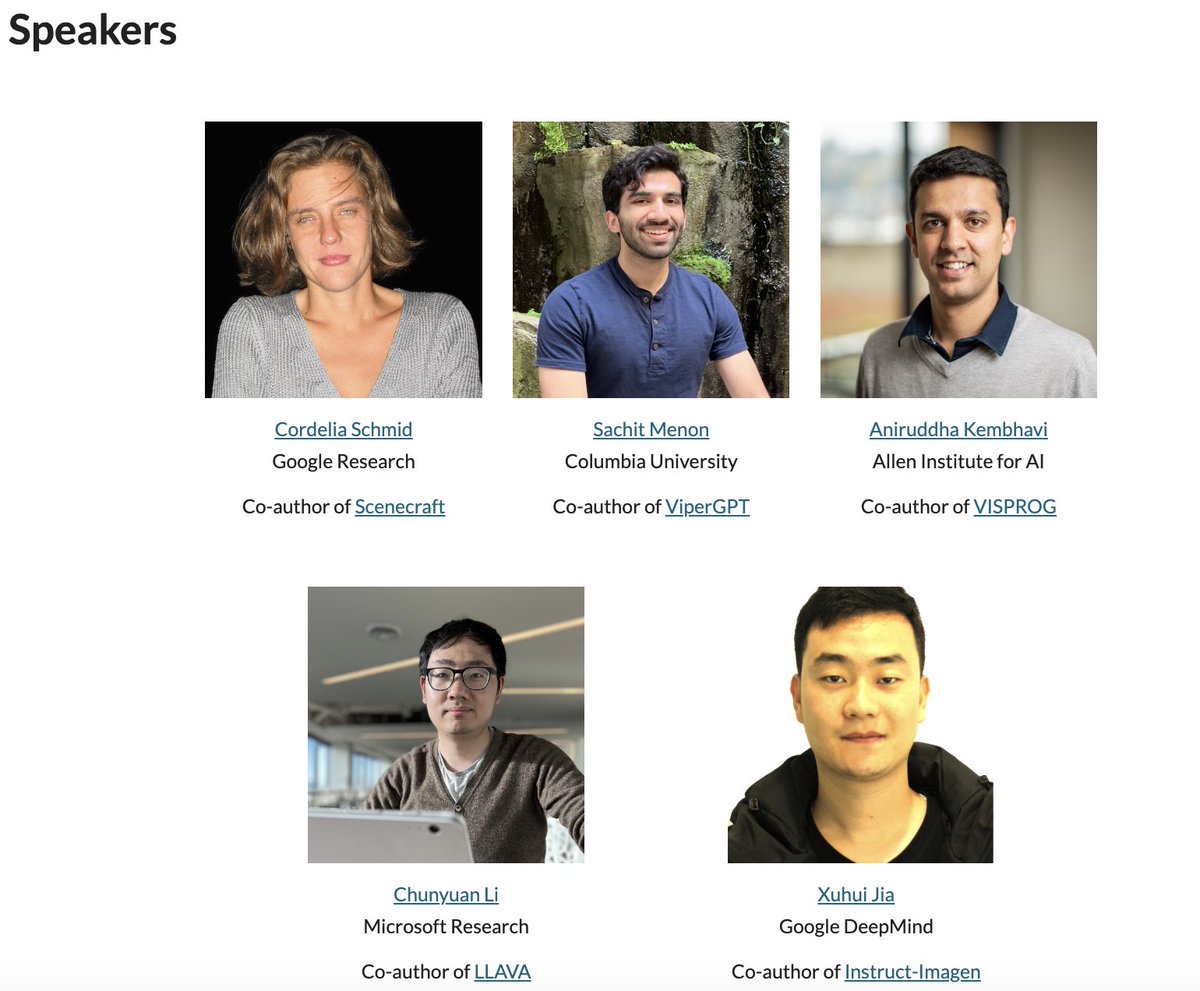

🔥 Calling all #CVPR2024 attendees! 🔥 Join us for the 1st Tool-Augmented VIsion (TAVI) Workshop on Monday morning in Summit 321! 💡 5 inspiring keynote talks 🎨 5 invited posters from the main conference Don't miss out! ➡️ More info: sites.google.com/corp/view/tavi…

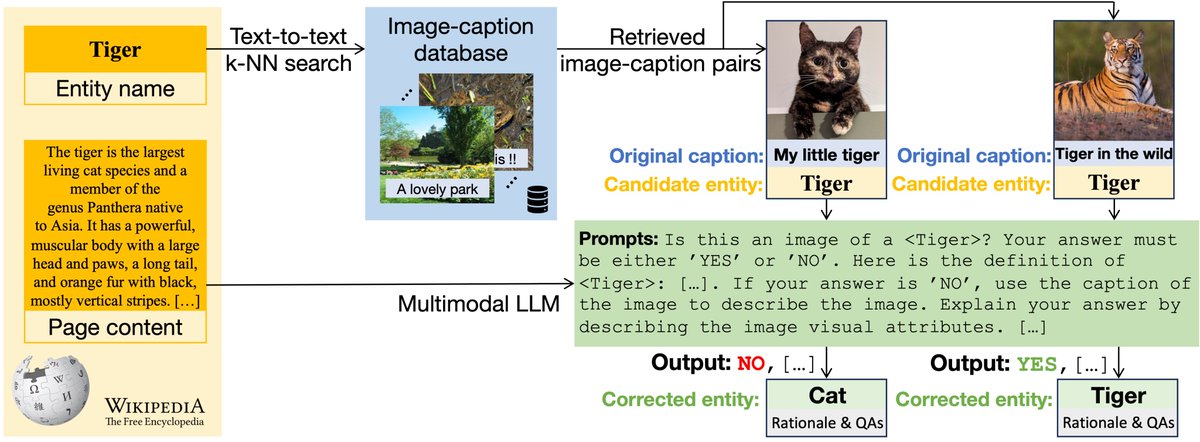

Happy to introduce GERALD - our new VLM that recognizes 6M+ entities, an exciting step towards Web-scale visual entity recognition! Predictions are simply made by auto-regressively decoding a code representing the entity name. Check out our CVPR24 paper: arxiv.org/abs/2403.02041

The list of #CVPR2024 workshops is now available! cvpr.thecvf.com/Conferences/20…

Happy to share our recent preprint! Models like CLIP tend to struggle on fine-grained tasks. We equip these models with the ability to retrieve and refine additional data at inference, which substantially improves the performance. With @mcaron31 , @alirezafathi , @CordeliaSchmid

Today on the blog, read all about AVIS — Autonomous Visual Information Seeking with Large Language Models — a novel method that iteratively employs a planner and reasoner to achieve state-of-the-art results on visual information seeking tasks → goo.gle/3P2y2mY

AVIS: Autonomous Visual Information Seeking with Large Language Models

paper page: huggingface.co/papers/2306.08…

In this paper, we propose an autonomous information seeking visual question answering framework, AVIS. Our method leverages a Large Language Model (LLM) to dynamically strategize the utilization of external tools and to investigate their outputs, thereby acquiring the indispensable knowledge needed to provide answers to the posed questions. Responding to visual questions that necessitate external knowledge, such as "What event is commemorated by the building depicted in this image?", is a complex task. This task presents a combinatorial search space that demands a sequence of actions, including invoking APIs, analyzing their responses, and making informed decisions.

We conduct a user study to collect a variety of instances of human decision-making when faced with this task. This data is then used to design a system comprised of three components: an LLM-powered planner that dynamically determines which tool to use next, an LLM-powered reasoner that analyzes and extracts key information from the tool outputs, and a working memory component that retains the acquired information throughout the process.

The collected user behavior serves as a guide for our system in two key ways. First, we create a transition graph by analyzing the sequence of decisions made by users. This graph delineates distinct states and confines the set of actions available at each state. Second, we use examples of user decision-making to provide our LLM-powered planner and reasoner with relevant contextual instances, enhancing their capacity to make informed decisions. We show that AVIS achieves state-of-the-art results on knowledge-intensive visual question answering benchmarks such as Infoseek and OK-VQA.
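
The planner / reasoner / working-memory loop described in the abstract can be pictured with a small sketch. This is not the AVIS implementation: the states, tool names, and the `TRANSITIONS` graph below are hypothetical, and plain Python functions stand in for the LLM-powered planner and reasoner. It only illustrates how a transition graph could restrict which tool the planner may pick at each step while a working memory accumulates what the reasoner extracts.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical transition graph: current state -> tools allowed next.
# In the paper this graph is derived from a user study; these entries are invented.
TRANSITIONS: Dict[str, List[str]] = {
    "start": ["caption", "image_search"],
    "caption": ["image_search", "web_search"],
    "image_search": ["web_search"],
    "web_search": [],
}


@dataclass
class WorkingMemory:
    """Keeps the information gathered so far."""
    facts: List[str] = field(default_factory=list)


Planner = Callable[[str, List[str], List[str]], str]
Reasoner = Callable[[str, str, List[str]], Tuple[Optional[str], Optional[str]]]


def run_loop(question: str, planner: Planner, reasoner: Reasoner,
             tools: Dict[str, Callable[[str], str]],
             max_steps: int = 5) -> Tuple[Optional[str], List[str]]:
    memory = WorkingMemory()
    state = "start"
    for _ in range(max_steps):
        allowed = TRANSITIONS.get(state, [])
        if not allowed:
            break  # no actions left from this state
        tool = planner(question, memory.facts, allowed)
        if tool not in allowed:
            raise ValueError(f"planner chose disallowed tool: {tool}")
        output = tools[tool](question)
        fact, answer = reasoner(question, output, memory.facts)
        if fact is not None:
            memory.facts.append(fact)
        if answer is not None:
            return answer, memory.facts
        state = tool  # the chosen tool becomes the next state
    return None, memory.facts


# Stand-ins for the LLM components and external tools (all assumptions).
def stub_planner(question: str, facts: List[str], allowed: List[str]) -> str:
    return allowed[0]  # a real planner would prompt an LLM with examples


def stub_reasoner(question: str, output: str, facts: List[str]):
    if output.startswith("answer:"):
        return output, output[len("answer:"):].strip()
    return output, None  # keep the output as a fact, no answer yet


STUB_TOOLS = {
    "caption": lambda q: "a stone monument",
    "image_search": lambda q: "entity: Example Monument",
    "web_search": lambda q: "answer: Example Event",
}

if __name__ == "__main__":
    print(run_loop("What event is commemorated?", stub_planner,
                   stub_reasoner, STUB_TOOLS))
```

With these stubs the loop visits `caption`, then `image_search`, then `web_search`, and stops once the reasoner reports an answer. Swapping the stubs for real model calls would leave the control flow unchanged.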
