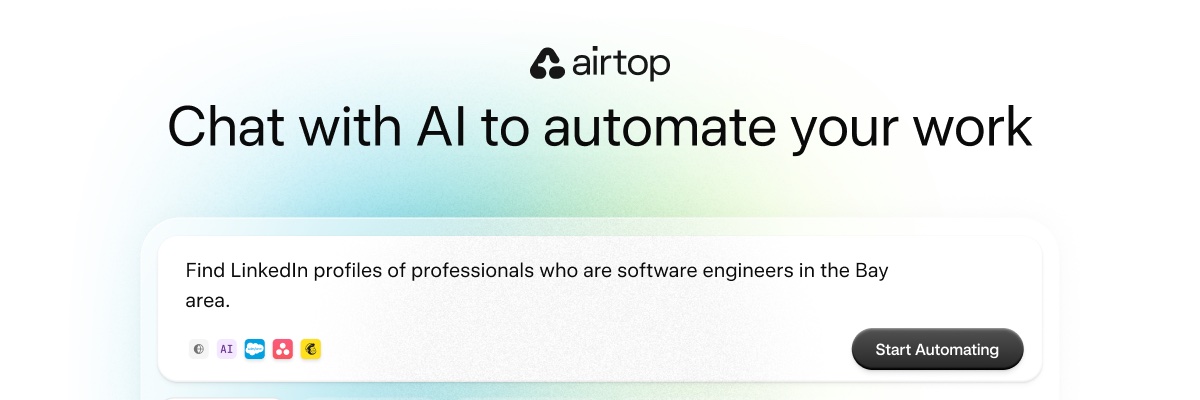

Airtop

116 posts

@airtop

Automate your work with just words. Build powerful web agents by chatting with AI.

AI coding is going to change the traditional EPD (engineering, product, design) roles in the following ways. Think of a spectrum: on one end you have the PM who does nothing but translate business needs into product requirements, works with the designer to craft the UX, and hands off tickets to engineers. They have zero technical ability, but are good at understanding business needs and translating them into requirements. In the old world, there was value here. On the other end, you have code monkeys. They have zero business or UX sense, but are great at taking a ticket, writing the code, and saying: next please. Again, there used to be value here. Both ends of the spectrum are dead now. Enter the generalist "builder" who can reason about business value and product requirements, has design sense, can build full stack, and can follow a feature through a prod release and measure its impact on the business. This kind of generalist used to be a unicorn because of the significant subject-matter expertise needed. Someone who can write decent UI code, backend code/distributed systems, dev ops, AND can do design, AND understands the business? Damn. But now, any good generalist who can use AI tools to deliver a full stack feature, with all the deep complexities of each type of engineering, and with a deep connection to how that feature pushes the business forward, is the one who will thrive in this new world. My #1 piece of advice for anyone in product, design, or engineering: be a generalist. Learn the AI tools to be competent in all 3 areas and stop thinking of yourself as a PM, a designer, or an engineer. Learn autonomy and E2E ownership. You're a builder now. Otherwise you're ngmi.

"Fully agentic AI treats every run like the first time — even when it shouldn’t." My take on the AI Agency spectrum and why you don't always need full agency for AI Agents. airtop.ai/blog/agency-sp…