Anusha K

@aiwithanu

ML enthusiast || Available for work : https://t.co/w3R6kfGiH7 || Learning ML to build @mayumiai_hq, @Metagpt_ ambassador, recipient @aigrantsindia

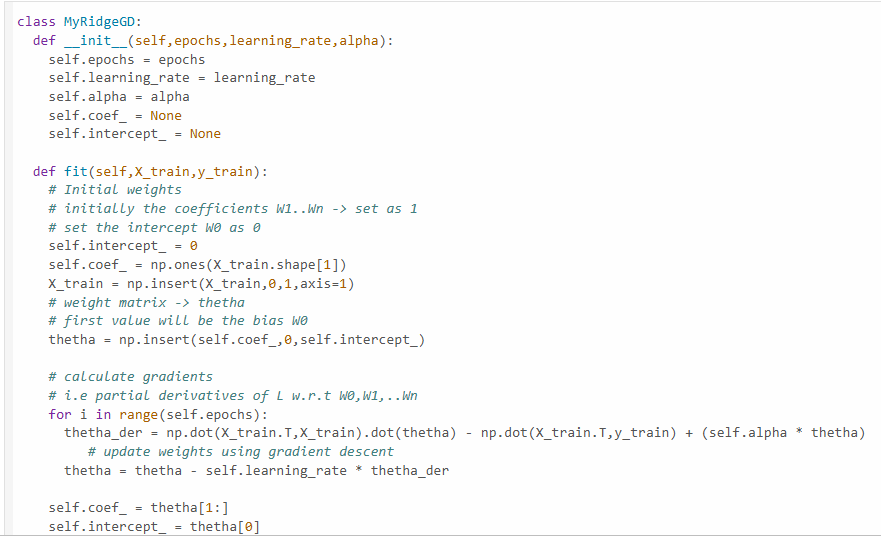

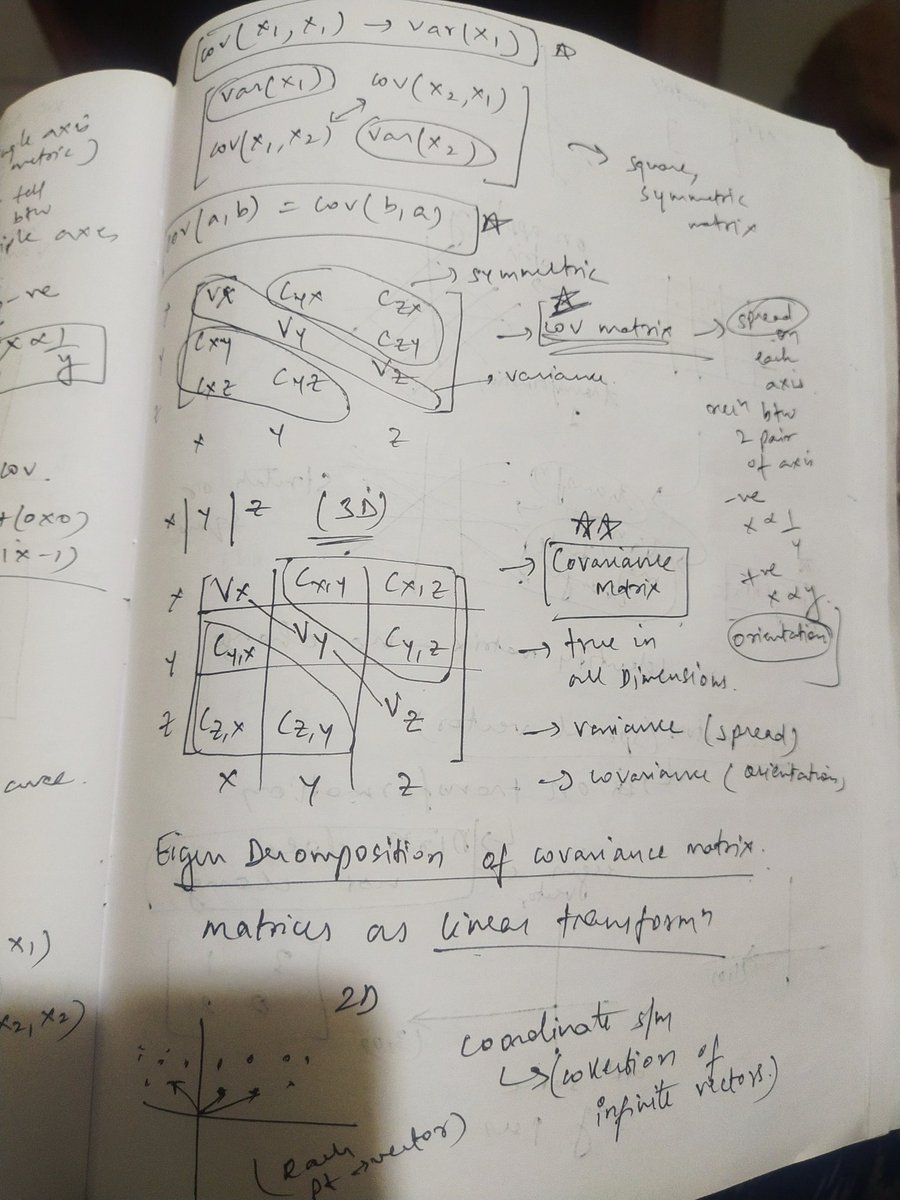

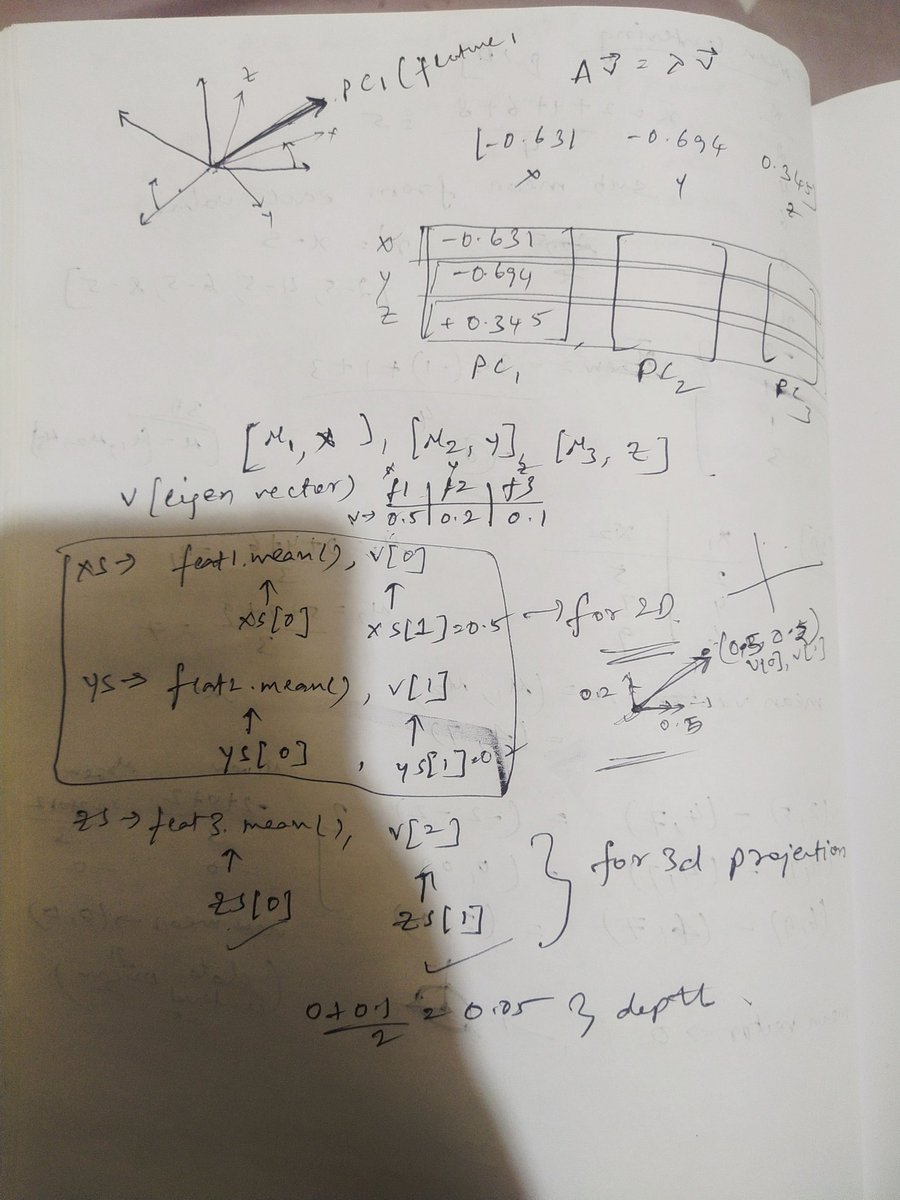

68/♾️ Done with multivariate imputation and with handling outliers using the z-score, IQR proximity, and percentile methods. Also done with principal component analysis (PCA): implementation without sklearn, plus the math. PCA with sklearn is left.
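
A rough sketch of what the z-score and IQR outlier checks and a from-scratch PCA can look like with plain NumPy. The function names (`zscore_mask`, `iqr_bounds`, `pca`), the thresholds (3 standard deviations, 1.5 × IQR), and the toy data are assumptions for illustration, not the code from the screenshots.

```python
import numpy as np


def zscore_mask(x, threshold=3.0):
    """Flag values whose z-score magnitude exceeds `threshold`."""
    z = (x - x.mean()) / x.std()
    return np.abs(z) > threshold


def iqr_bounds(x, k=1.5):
    """Return (lower, upper) fences at k * IQR beyond the quartiles."""
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def pca(X, n_components):
    """PCA via eigendecomposition of the covariance matrix."""
    Xc = X - X.mean(axis=0)                  # center each feature
    cov = np.cov(Xc, rowvar=False)           # feature-by-feature covariance
    eigvals, eigvecs = np.linalg.eigh(cov)   # eigh: covariance is symmetric
    order = np.argsort(eigvals)[::-1][:n_components]  # largest variance first
    components = eigvecs[:, order]
    return Xc @ components, eigvals[order]


# Toy data: 99 zeros and one extreme value
x = np.concatenate([np.zeros(99), [100.0]])
outliers = zscore_mask(x)
lower, upper = iqr_bounds(np.array([1.0, 2.0, 3.0, 4.0, 100.0]))

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
Z, explained_var = pca(X, n_components=2)
```

The variance of each projected column in `Z` matches the corresponding eigenvalue, which is a handy sanity check before comparing against sklearn's PCA.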

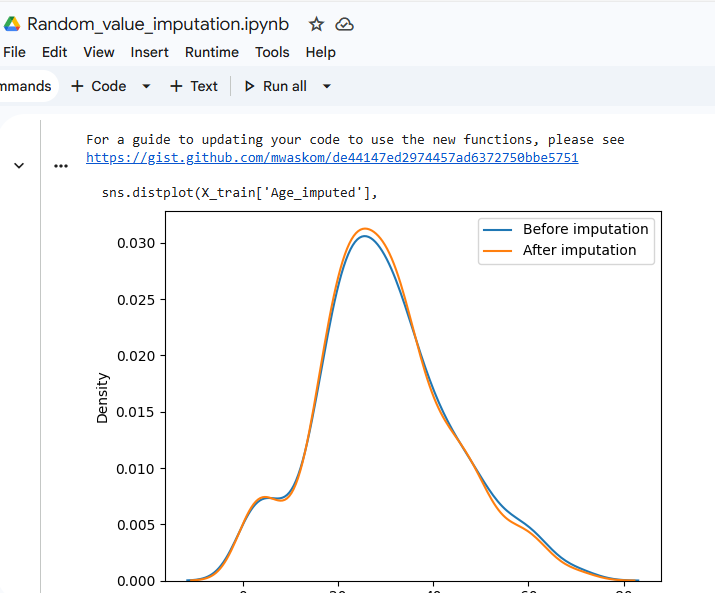

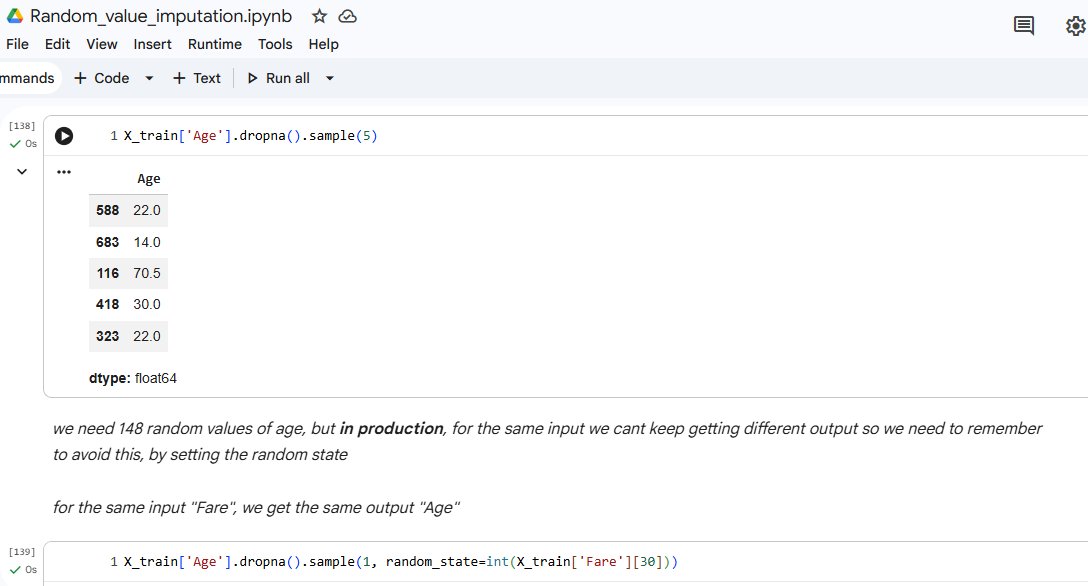

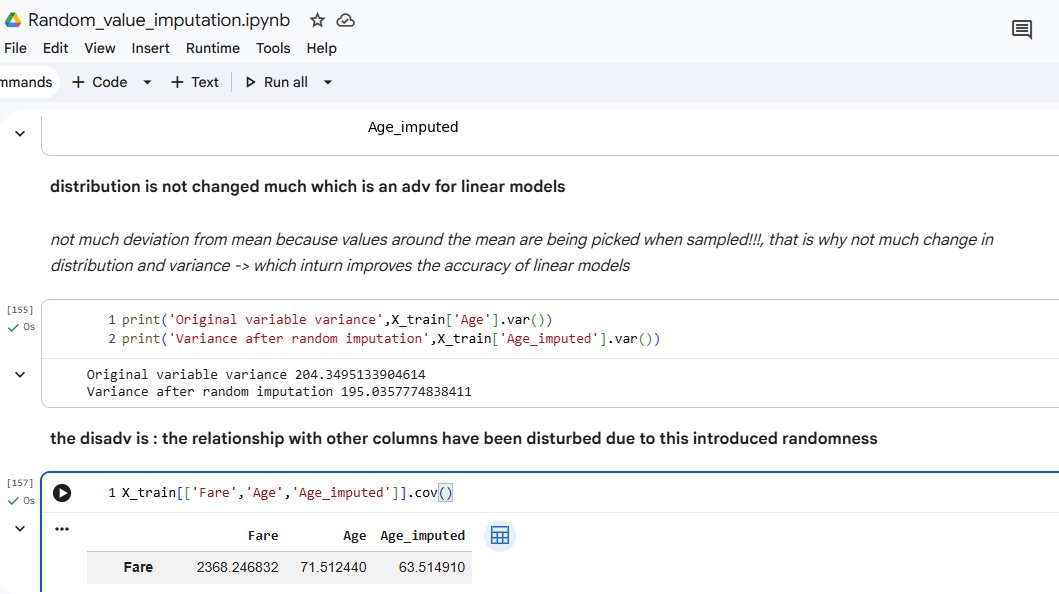

67/♾ Working on univariate imputation for filling missing values. Done with mean/median imputation, arbitrary value imputation, and random value imputation.
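
A minimal pandas sketch of those three univariate strategies. The `age` column, the arbitrary fill value `-1`, and the `random_state` are made up for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical column with two missing values
df = pd.DataFrame({"age": [22.0, np.nan, 35.0, 41.0, np.nan, 29.0]})

# Mean / median imputation: fill with a summary statistic of the observed values
df["age_mean"] = df["age"].fillna(df["age"].mean())
df["age_median"] = df["age"].fillna(df["age"].median())

# Arbitrary value imputation: fill with a value that cannot occur naturally
df["age_arbitrary"] = df["age"].fillna(-1)

# Random value imputation: fill each gap with a randomly drawn observed value
missing = df["age"].isna()
sampled = df["age"].dropna().sample(int(missing.sum()), replace=True, random_state=0)
df["age_random"] = df["age"].copy()
df.loc[missing, "age_random"] = sampled.to_numpy()
```

Random sampling keeps the original distribution shape better than mean/median, at the cost of adding some randomness to the data.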

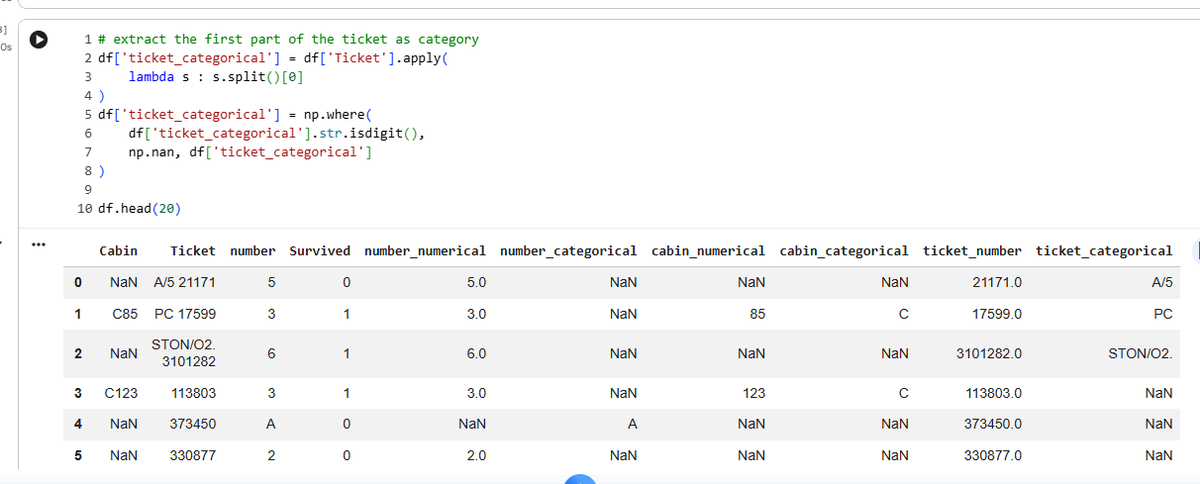

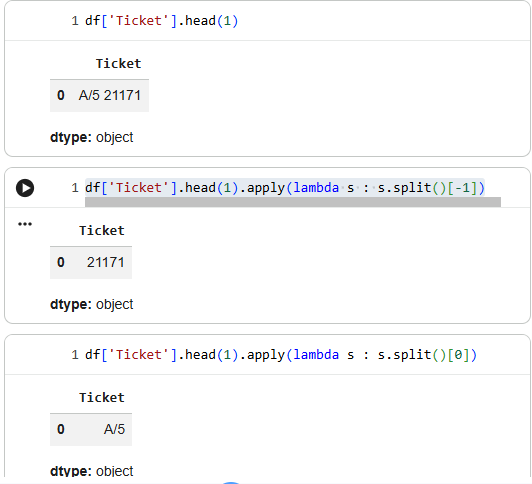

66/♾ Lambda functions are pretty useful when dealing with mixed variables, where a column has cells with both numerical and categorical data.
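
One way this can look, as a sketch: split a mixed column into a numerical part and a categorical part with `apply` and a lambda. The column name `mixed` and its values are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical mixed column: some cells are numbers, some are category codes
df = pd.DataFrame({"mixed": ["5", "3", "A", "7", "B", "1"]})

# Numerical part: keep digit-only cells as floats, everything else becomes NaN
df["num_part"] = df["mixed"].apply(lambda v: float(v) if str(v).isdigit() else np.nan)

# Categorical part: keep the non-numeric cells, digit-only cells become NaN
df["cat_part"] = df["mixed"].apply(lambda v: np.nan if str(v).isdigit() else v)
```

After the split, each new column can be imputed and encoded with the usual numerical or categorical tools.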

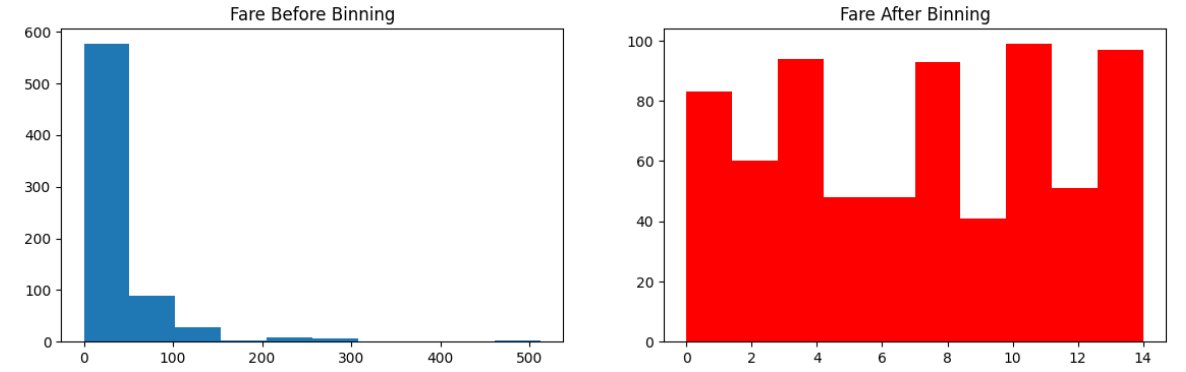

65/♾ To encode numerical data, we use: 1) binning 2) binarization. Why use it? To handle outliers better and to improve the value spread (makes the spread of data more uniform). We use the class KBinsDiscretizer with encode="ordinal" and strategy set to uniform, quantile, or kmeans.
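
A small sketch of both ideas with scikit-learn, assuming a toy single-feature array with one outlier. `n_bins=3` and the `Binarizer` threshold of 3.0 are arbitrary choices for the example.

```python
import numpy as np
from sklearn.preprocessing import Binarizer, KBinsDiscretizer

# Toy feature with one large outlier
X = np.array([[1.0], [2.0], [3.0], [4.0], [5.0], [100.0]])

# Uniform strategy: equal-width bins, so the outlier stretches the bin edges
uniform = KBinsDiscretizer(n_bins=3, encode="ordinal", strategy="uniform")
X_uniform = uniform.fit_transform(X)

# Quantile strategy: roughly equal number of samples per bin
quantile = KBinsDiscretizer(n_bins=3, encode="ordinal", strategy="quantile")
X_quantile = quantile.fit_transform(X)

# Binarization: 1 if the value is strictly above the threshold, else 0
X_binary = Binarizer(threshold=3.0).fit_transform(X)

print(X_uniform.ravel(), X_quantile.ravel(), X_binary.ravel())
```

With uniform bins, five of the six points land in the first bin because of the outlier; quantile bins spread the points more evenly, which is the "value spread" benefit mentioned above.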

64/♾ We apply the Box-Cox transform (for values strictly greater than 0) and the Yeo-Johnson transform (for positive, negative, and zero values). Linear models often perform better when the features are close to normally distributed, so we use these transforms to push the output toward a normal distribution.
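
A quick sketch with scikit-learn's `PowerTransformer`, assuming synthetic log-normal data as the skewed input. The variable names and the shift by 1.0 (used only to get some negative values) are illustrative choices.

```python
import numpy as np
from scipy.stats import skew
from sklearn.preprocessing import PowerTransformer

rng = np.random.default_rng(0)

# Right-skewed, strictly positive data: fine for Box-Cox
skewed_pos = rng.lognormal(size=(500, 1))

# Shifted copy that contains negative values: needs Yeo-Johnson
mixed_sign = skewed_pos - 1.0

box_cox = PowerTransformer(method="box-cox")
yeo_johnson = PowerTransformer(method="yeo-johnson")

x_bc = box_cox.fit_transform(skewed_pos)
x_yj = yeo_johnson.fit_transform(mixed_sign)

# Skewness closer to 0 means the shape is closer to symmetric / normal
print("skew before:", skew(skewed_pos.ravel()))
print("skew after Box-Cox:", skew(x_bc.ravel()))
print("skew after Yeo-Johnson:", skew(x_yj.ravel()))
```

By default `PowerTransformer` also standardizes the output to zero mean and unit variance, so no separate scaler is needed after it.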

63/♾ [1/n]: Support Vector Classifier. Key params: C, gamma, kernel. C (inverse regularisation strength): Low C: allows mistakes, smooth boundary, may underfit. High C: forces correctness, complex boundary, may overfit. Ex: digits 1 vs 7. Low C -> some 7s look like 1s, that's okay
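
To see the effect of C on the 1 vs 7 example, here is a small sketch using scikit-learn's bundled digits dataset. The C values (0.01 and 100), the RBF kernel, and the train/test split are assumptions picked for the demo, not values from the thread.

```python
from sklearn.datasets import load_digits
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Keep only the digits 1 and 7
digits = load_digits()
mask = (digits.target == 1) | (digits.target == 7)
X, y = digits.data[mask], digits.target[mask]

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

results = {}
for C in (0.01, 100.0):
    clf = SVC(C=C, kernel="rbf", gamma="scale").fit(X_train, y_train)
    results[C] = {
        "train_acc": clf.score(X_train, y_train),
        "test_acc": clf.score(X_test, y_test),
        # More support vectors usually means a softer, wider margin
        "n_support": int(clf.n_support_.sum()),
    }
    print(C, results[C])
```

Low C tolerates misclassified training points and leans on many support vectors; high C tries to fit every training point and keeps fewer of them.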