Abdulkadir Gokce retweeted

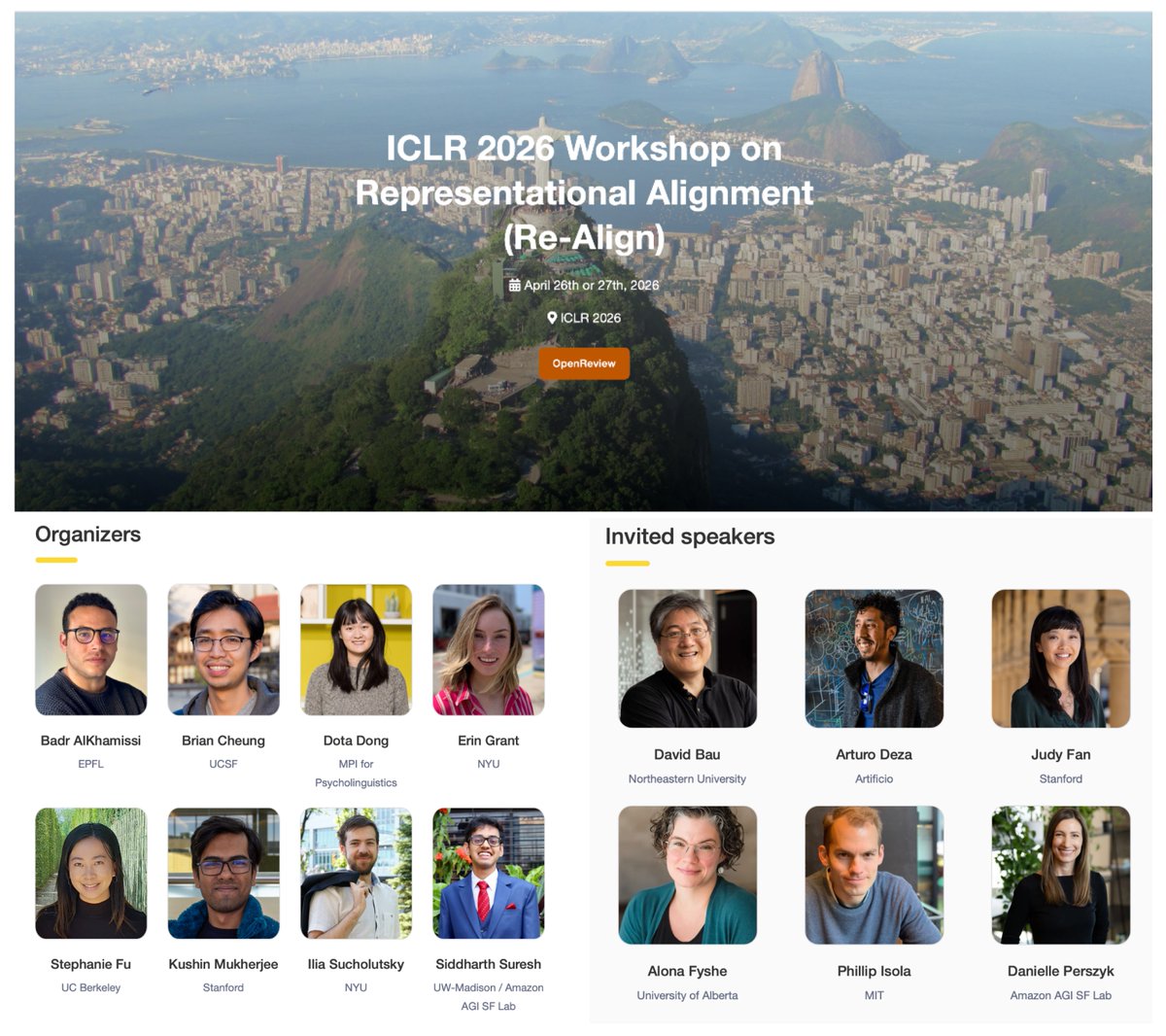

🎉 Re-Align is back for its 4th edition at ICLR 2026!

📣 We invite submissions on representational alignment, spanning ML, Neuroscience, CogSci, and related fields.

📝 Tracks: Short (≤5p), Long (≤10p), Challenge (blog)

⏰ Deadline: Feb 5, 2026 for papers

🔗 representational-alignment.github.io/2026/
