Albert Thomas @albertcthomas.bsky.social

334 posts

Research engineer in machine learning at Huawei

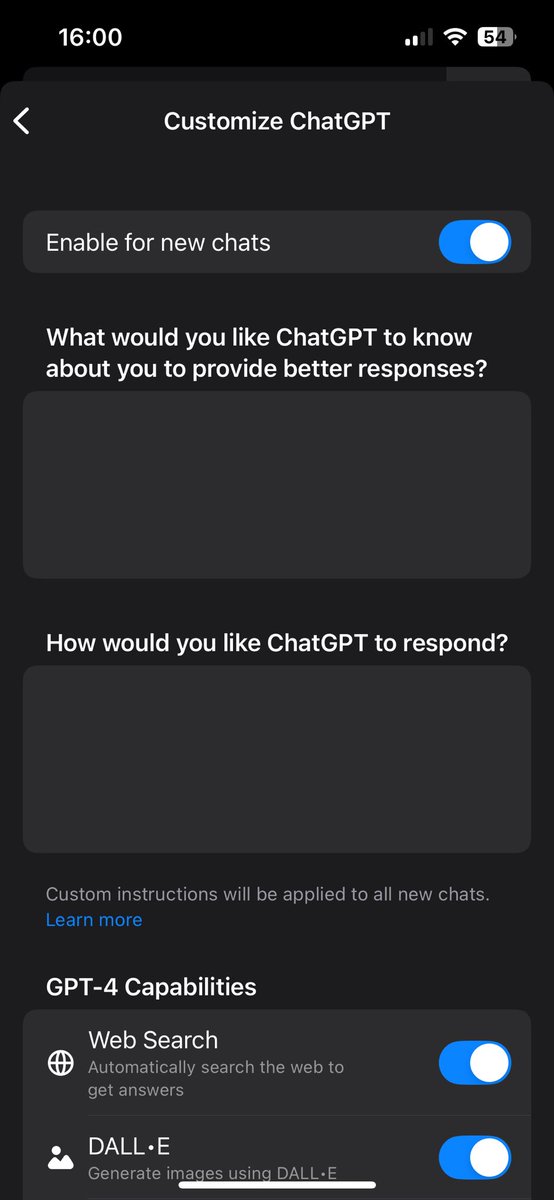

It's a strange time to be a programmer: easier than ever to get started, but easier to let AI steer you into frustration. We've got an antidote that we've been using ourselves with 1000 preview users for the last year: "solveit". Now you can join us. 🧵 answer.ai/posts/2025-10-…

Looking to leverage LLMs for multivariate time series forecasting? 🎉 Search no more! You can do exactly that with our new package DICL (Disentangled In-Context Learning). 🖥️ Code & Demo: github.com/abenechehab/di… 📜 New preprint: DICL for RL arxiv.org/pdf/2410.11711 1/🧵

agent frameworks are useless (why are there so many)

Honestly, at this point, if you give me a programming interview and don't let me use AI assistance, you won't get a very realistic idea of what I'm actually capable of.