Psyched to be joining the Elastic team. Woohoo!

Elastic (@elastic)

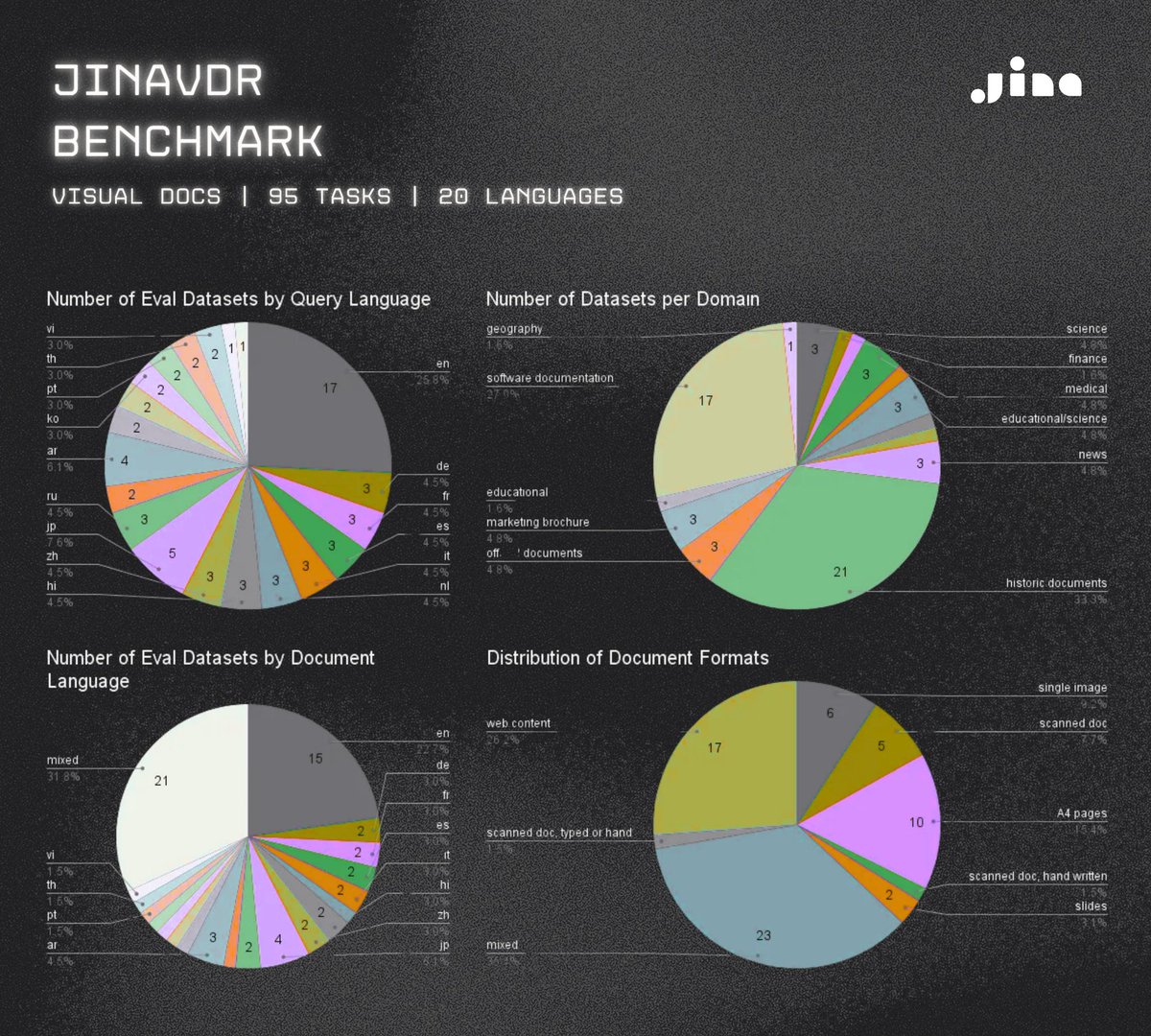

We’re excited to announce that we have joined forces with @JinaAI_, a leader in frontier models for multimodal and multilingual search. This acquisition deepens Elastic’s capabilities in retrieval, embeddings, and context engineering to power agentic AI: go.es.io/48QeYCM
