alwaysfurther.ai

@alwaysfurtherAI

Empowering enterprises to build specialized AI models that cost-effectively deliver precise, reliable results.

Spent an hour with @WeAreDevelopers breaking down why agent security has to live at the kernel, not the app layer. Watch it here: youtube.com/watch?v=xVK29A…

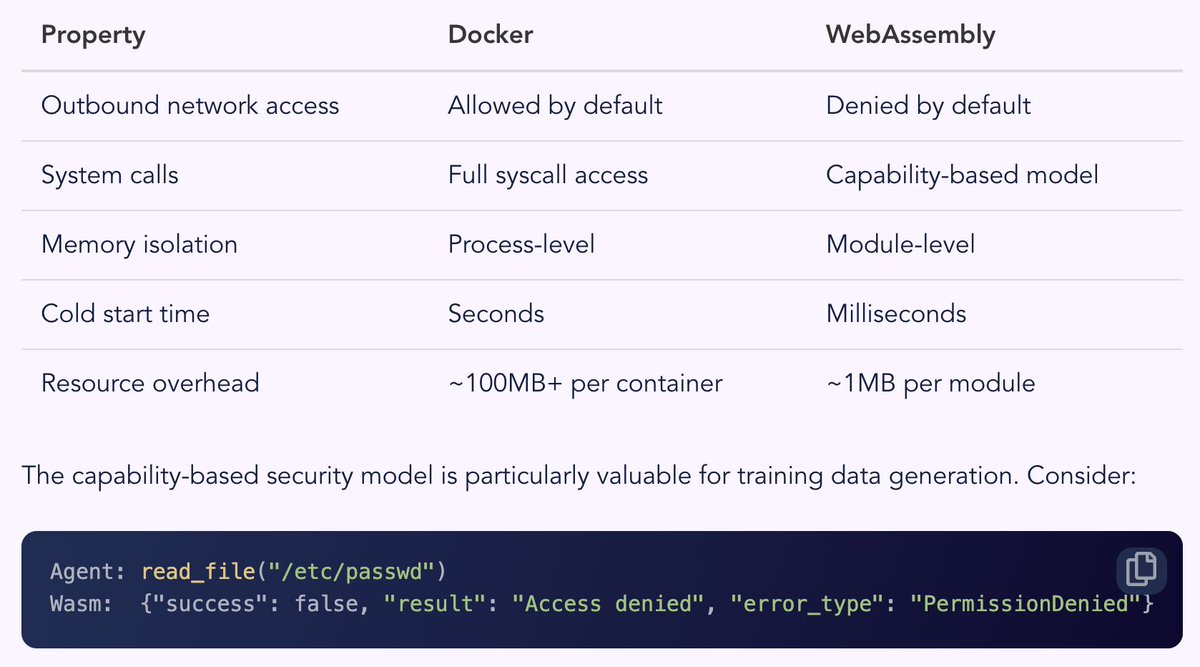

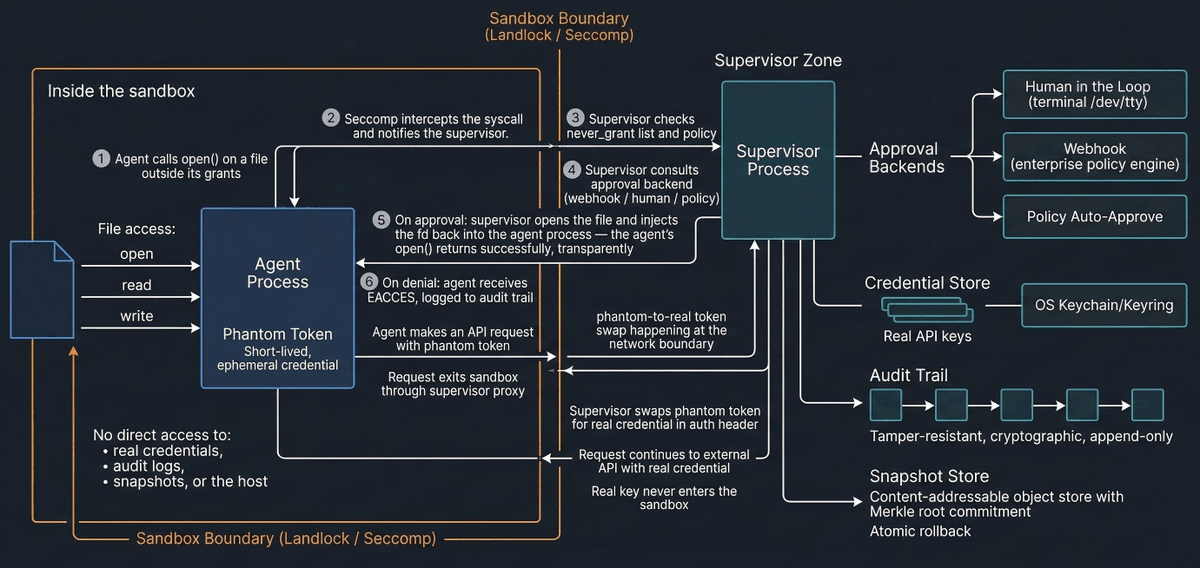

We give agents API keys as environment variables, and a single prompt injection can read them via `env` or `/proc/PID/environ` and exfiltrate them with just an outbound HTTP call. The blast radius is the full scope of that key.

So we built what we're calling the "phantom token pattern": a credential injection proxy that sits outside the sandbox, in a parent process whose connection to its sandboxed child is limited by seccomp. The agent never sees real credentials. It gets a per-session token that works only with the session-bound localhost proxy. The proxy validates the token (constant-time), strips it, injects the real credential, and forwards upstream over TLS. If the agent is fully compromised, there's nothing worth stealing.

Real credentials live in the system keystore (macOS Keychain / Linux Secret Service), memory is zeroized on drop, and DNS resolution is pinned to prevent rebinding attacks. It works transparently with the OpenAI, Anthropic, and Gemini SDKs; they just follow the `*_BASE_URL` env vars to the proxy.

The blog post walks through the architecture, the token swap flow, and how to set it up. Would love feedback from anyone thinking about agent credential security. nono.sh/blog/blog-cred…

We have also shipped other features, such as atomic rollbacks and Sigstore-based SKILL attestation. github.com/always-further…
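To make the token swap concrete, here is a minimal Python sketch of the validate, strip, and inject step, plus the environment a sandboxed agent might be given. It is an illustration under stated assumptions, not the project's implementation: the function names, the `phantom-` token prefix, the `Bearer` header scheme, and the `/v1` path are all hypothetical. Only `OPENAI_BASE_URL` and `OPENAI_API_KEY` are real SDK environment variables.

```python
import hmac
import secrets


def new_session_token() -> str:
    """Mint a random per-session phantom token (the prefix is a made-up convention)."""
    return "phantom-" + secrets.token_urlsafe(32)


def child_env(proxy_port: int, session_token: str) -> dict[str, str]:
    """Environment for the sandboxed agent: it holds only the phantom token."""
    return {
        # Assumed URL layout; the SDK sends its requests to the local proxy.
        "OPENAI_BASE_URL": f"http://127.0.0.1:{proxy_port}/v1",
        "OPENAI_API_KEY": session_token,
    }


def swap_credential(
    headers: dict[str, str], session_token: str, real_key: str
) -> dict[str, str]:
    """Validate the phantom token, strip it, and inject the real credential.

    Raises PermissionError if the presented token does not match.
    """
    lowered = {k.lower(): v for k, v in headers.items()}
    scheme, _, presented = lowered.get("authorization", "").partition(" ")
    # hmac.compare_digest runs in constant time, so a mismatch does not
    # leak how many leading characters were correct.
    token_ok = hmac.compare_digest(presented.encode(), session_token.encode())
    if scheme != "Bearer" or not token_ok:
        raise PermissionError("invalid session token")
    # Drop the phantom Authorization header, keep everything else.
    upstream = {k: v for k, v in headers.items() if k.lower() != "authorization"}
    upstream["Authorization"] = f"Bearer {real_key}"
    return upstream
```

In this sketch the real key only ever appears in the headers returned by `swap_credential`, which the proxy would send upstream over TLS. The agent's environment contains nothing but the phantom token, which is useless anywhere except the localhost proxy for that session.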

Software Supply Chain Security in the Age of AI Agents alwaysfurther.ai/blog/sigstore-…