Most AI agents have one security layer between untrusted input and real-world actions.

One regex. One API call. One point of failure.

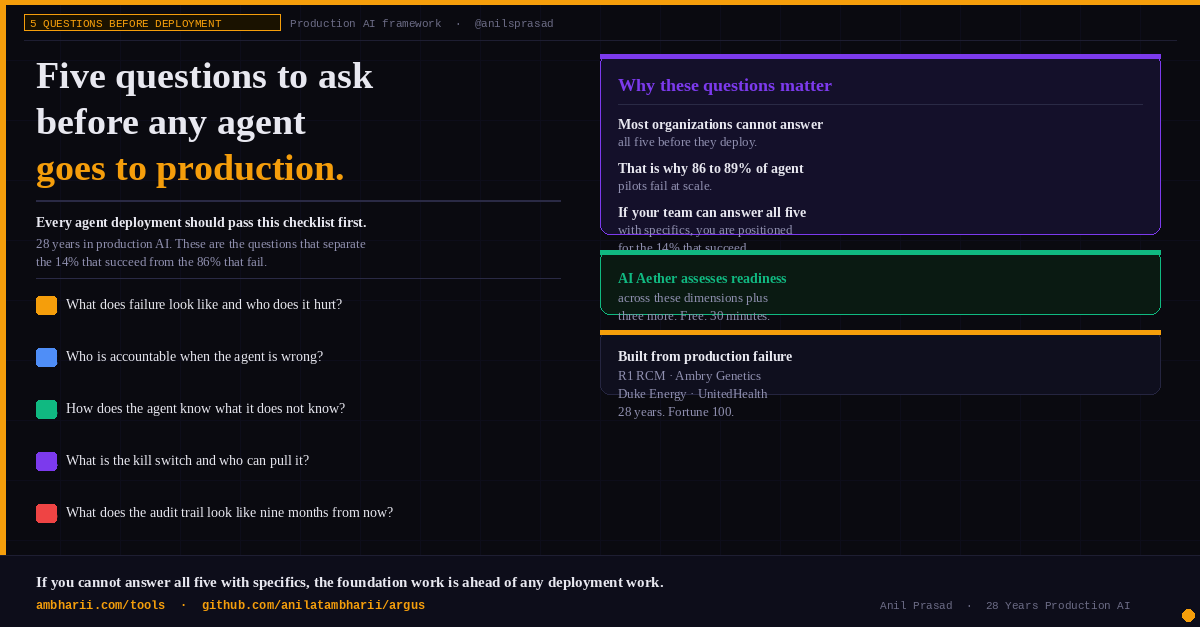

I built Bulwark — open-source, Apache 2.0, five-layer defense for production agents:

→ Sanitizer (bidi/Unicode/hidden HTML)

→ Injection detector (ML + patterns)

→ Compartmentalized RBAC (deny-by-default)

→ Human approval gates (async)

→ Encrypted audit trail (HIPAA/SOC2 ready)
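
To make the first and third layers concrete, here is a minimal sketch in plain Python. It is not Bulwark's actual API: `sanitize`, `is_allowed`, and the `ALLOWED` table are hypothetical names chosen for illustration, and the character set it strips is an assumption about what "bidi/Unicode" sanitizing covers.

```python
import re
import unicodedata

# Assumed set of hidden characters: bidi embeddings/overrides (U+202A-U+202E),
# bidi isolates (U+2066-U+2069), zero-width and direction marks (U+200B-U+200F),
# and the byte-order mark (U+FEFF).
_HIDDEN_CHARS = re.compile("[\u202a-\u202e\u2066-\u2069\u200b-\u200f\ufeff]")


def sanitize(text: str) -> str:
    """Strip hidden control characters, then apply NFKC normalization."""
    return unicodedata.normalize("NFKC", _HIDDEN_CHARS.sub("", text))


# Hypothetical permission table: role -> tools that role may call.
ALLOWED = {"support_agent": {"search_docs", "read_ticket"}}


def is_allowed(role: str, tool: str) -> bool:
    """Deny by default: unknown roles and unlisted tools are both rejected."""
    return tool in ALLOWED.get(role, set())
```

The point of deny-by-default is that forgetting to list a tool fails closed: the agent loses a capability instead of gaining one.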

pip install bulwark-agent-security

Full breakdown of the architecture + 4 real attack scenarios blocked:

medium.com/p/the-five-lay…

@AnthropicAI @nvidia @GoogleDeepMind @huggingface @owasp
