Pinned Tweet

Had a great time sharing about observability and evals at AWS Summit in London today 🪢

Ghulam @ghulamio

@langfuse at AWS summit today


Annabell Schaefer

127 posts

@annabellschfr

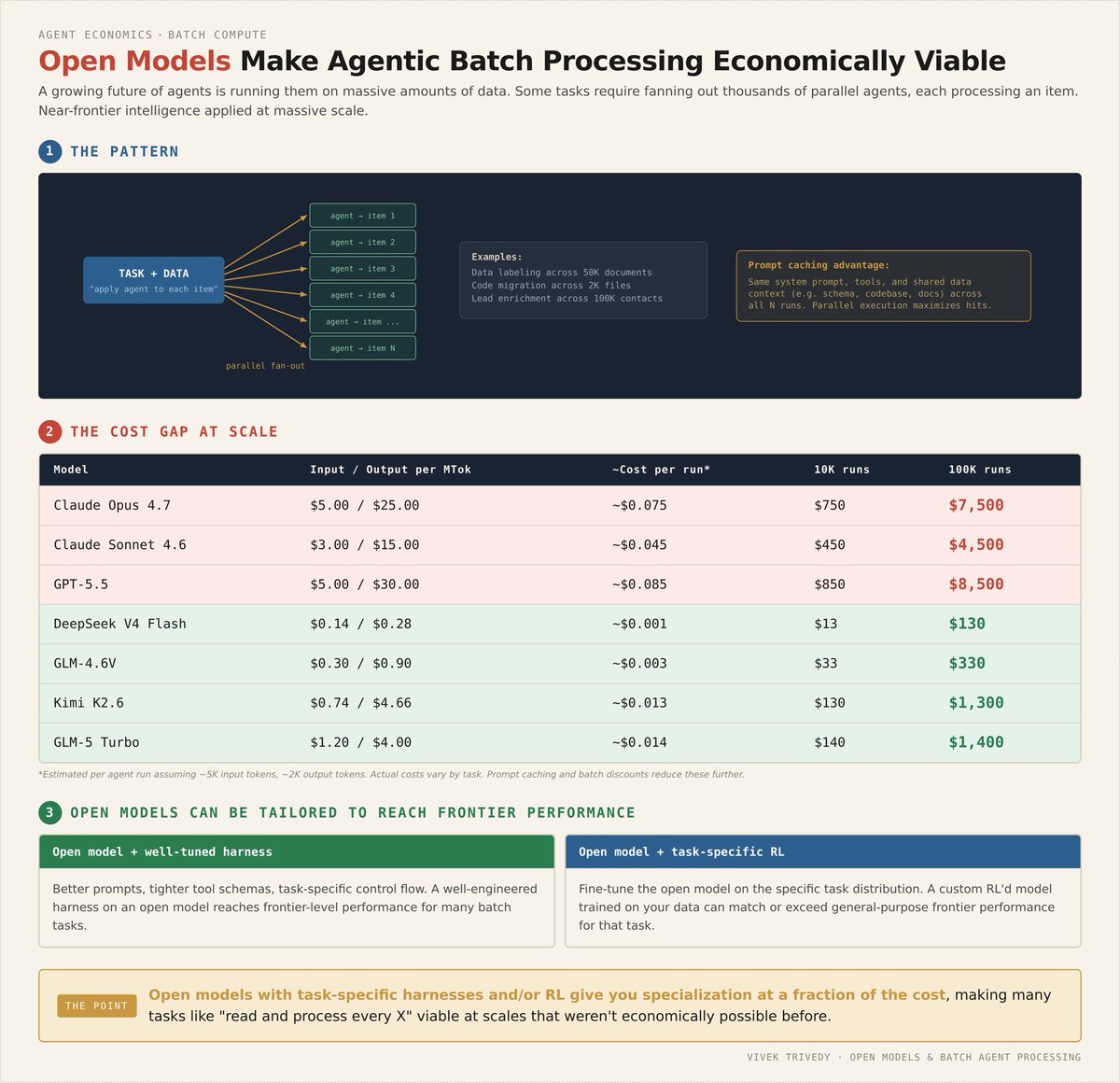

thinking about agents @langfuse
