Anton Abilov

66 posts

@AntonAbilov

MS Info Science @Cornell_Tech '22, ex-Tech Lead at @ArdoqCom. Interested in CS for social good, NLP, startups & snowkiting

Oslo, Norway · Joined October 2014

271 Following · 168 Followers

Pinned Tweet

Just published my first paper and dataset with the Social Technologies Lab at Cornell Tech - the largest public dataset on voter fraud claims released to date.

Check out the analysis in an interactive @streamlit application here: voterfraud2020.io

cool article about CDE (contextual document embeddings) out in VentureBeat 📰

also chatting with them got me thinking about what future work looks like in this line of research. here are a few ideas, if you will allow me to pontificate for just a moment 🧐

- Multimodality. We could try applying this to other modalities where embeddings are useful, such as CLIP-like models. our ideas aren't really text-specific, so I would expect them to improve text-image retrieval too

- Scaling. Some work in retrieval shows that models follow instructions much better at larger scales. The very best retrieval models, too, are larger than ours; they're usually seven or eight billion parameters, while our model is around 50x smaller. We're hoping to find compute to train our model at that scale

- Better contextual batching. We proposed clustering as a solution to the optimization problem of "find the hardest possible ordering of the data", but there are certainly better solutions out there that could be used to train even better models, even at the same scale

- Learning smaller embeddings. Some models now are using Matryoshka embeddings, which allow one training process and model backbone to produce embeddings at a variety of sizes. I have a hunch that contextual embeddings will produce significantly better embeddings at smaller sizes, since they're better at filtering out the information that's not useful. We want to train a CDE model with the Matryoshka technique and measure the impact of context across model scales

- Better clustering architectures. I'd say we did close to the simplest possible thing, which was "just provide additional tokens of context in addition to the tokens that will be used for retrieval." There are probably better ways to incorporate contextual information directly into the embedding process. One idea we had was to use an encoder-decoder where the encoder takes contextual information and the decoder produces embeddings. We haven't tried it yet, though
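To make the Matryoshka point concrete: a model trained that way packs the most useful information into the leading coordinates, so a smaller embedding is obtained by keeping the first d dimensions and re-normalizing. Here is a minimal pure-Python sketch under that assumption; `truncate_embedding` and `cosine` are hypothetical helper names (not from the CDE code or any Matryoshka library), and the vectors are made-up toy values.

```python
import math


def truncate_embedding(vec, dim):
    """Keep the first `dim` coordinates of a Matryoshka-style embedding
    (hypothetical helper) and L2-renormalize them to unit length."""
    if not 0 < dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize an all-zero prefix")
    return [x / norm for x in head]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy 4-d "full size" embeddings (made-up numbers, illustration only).
query = [3.0, 4.0, 0.5, -0.2]
doc = [2.9, 4.1, -0.3, 0.4]

# Compare query/doc similarity at a truncated size and at full size.
for d in (2, 4):
    q, k = truncate_embedding(query, d), truncate_embedding(doc, d)
    print(d, round(cosine(q, k), 4))
```

Re-normalizing matters when retrieval scores are raw dot products over unit vectors; for cosine similarity it changes nothing, since cosine already divides by the norms.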

@marktenenholtz This looks like a solid course! Would it also serve as a good starting point for deepening understanding of time series anomaly detection?

The first project guides you through all of this on a dataset with millions of rows and thousands of individual time series.

You’ll also build everything from top-level statistical models to low-level ML models.

Check it out here: corise.com/go/forcasting-…

@dnnslmr I have the same problem... Have you been able to fill the void somewhere else? Is Mastodon working for this purpose?

Soooo I really like reading #MLtwitter but with the current state of the app it feels like I am listening to the orchestra on the titanic 🫡

Anton Abilov retweeted

In "Measuring Progress on Scalable Oversight for Large Language Models” we show how humans could use AI systems to better oversee other AI systems, and demonstrate some proof-of-concept results where a language model improves human performance at a task. arxiv.org/abs/2211.03540

Great work by @dnnslmr 👏 Was fortunate to hear an in-depth presentation of his in NYC. Now thinking about: how can we estimate training data (aleatoric) uncertainty for pretrained LMs? Where does the border between ID and OOD lie for real-world summarization systems?

Dennis Ulmer 🦋 @dnnslmr

I'm so happy to finally announce our work with @jesfrellsen and @ch_hardmeier at @emnlpmeeting: We create a large benchmark of uncertainty quantification for #NLProc (8 models, up to 7 metrics) on three different languages! 🧵 (1/10) arxiv.org/abs/2210.15452 #emnlp2022

Anton Abilov retweeted

There is this rule of thumb that an improvement >1.0 BLEU is significant. But is it correct? Is it not coincidental?

I ran statistical significance testing on 2500+ system pairs from WMT21/22 to check it:

pub.towardsai.net/yes-we-need-st…
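One common way to run this kind of significance test is paired bootstrap resampling: resample the test segments with replacement many times and count how often system A outscores system B on the same resampled segments. The linked article's exact procedure isn't stated in the tweet, so this is only a sketch under assumptions. It uses hypothetical per-segment scores from any metric and sums them, whereas corpus BLEU is not a sum of sentence scores, so a real BLEU test would recompute corpus BLEU on each resample (sacreBLEU, for instance, offers paired bootstrap and approximate randomization tests). `paired_bootstrap_win_rate` is an illustrative name, not a library function.

```python
import random


def paired_bootstrap_win_rate(scores_a, scores_b, n_samples=1000, seed=0):
    """Fraction of bootstrap resamples in which system A's summed
    per-segment score is strictly higher than system B's.

    1 - win_rate serves as a rough p-value for "A is not better than B".
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty, equal-length score lists")
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    n = len(scores_a)
    wins = 0
    for _ in range(n_samples):
        # Draw n segment indices with replacement; the same indices are
        # used for both systems, which is what makes the test "paired".
        idx = [rng.randrange(n) for _ in range(n)]
        diff = sum(scores_a[i] - scores_b[i] for i in idx)
        if diff > 0:
            wins += 1
    return wins / n_samples


# Made-up per-segment scores for two hypothetical systems.
a = [0.31, 0.42, 0.28, 0.55, 0.47]
b = [0.30, 0.40, 0.29, 0.52, 0.46]
rate = paired_bootstrap_win_rate(a, b)
print(f"A wins {rate:.1%} of resamples; approx. p = {1 - rate:.3f}")
```

With only five segments the estimate is noisy; the point of testing thousands of system pairs is that a fixed threshold like 1.0 BLEU ignores how many segments, and how much per-segment variance, sit behind the difference.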

Anton Abilov retweeted

@ooobject Can you share a simple diagram/overview of your current smart-cabin setup? 😄

Anton Abilov retweeted

The supply of disinformation will soon be infinite, which will cause people to treat *all* information as disinformation, *unless* it comes with a traceable origin to a reliable and/or trusted source.

There are techs and standards to be deployed for this.

theatlantic.com/ideas/archive/…

Anton Abilov retweeted

Excited to see that our VoterFraud dataset helped this analysis of "misinformation influencers"

Elizabeth Dwoskin @lizzadwoskin

NEW: Been working for months on this data project w/@jeremybmerrill. The ‘big lie’ wasn’t just a plan to overturn the election. It was massive clout-building exercise that spawned a generation of influencers. It continues to polarize even w/Trump offline. washingtonpost.com/technology/202…

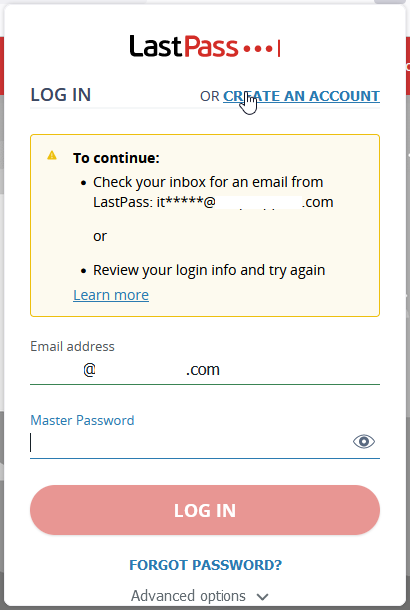

@PrinMaxAnderson @LastPassHelp I'm having the same issue. It's extremely slow, to the point of making @LastPass unusable. I've tried several times over a few days now. Every time, it arrives only after it has expired. I'll have to switch to another provider unless it's resolved.

@LastPassHelp How long does this email take? It's not in spam, and I requested it twice about 30 minutes ago.

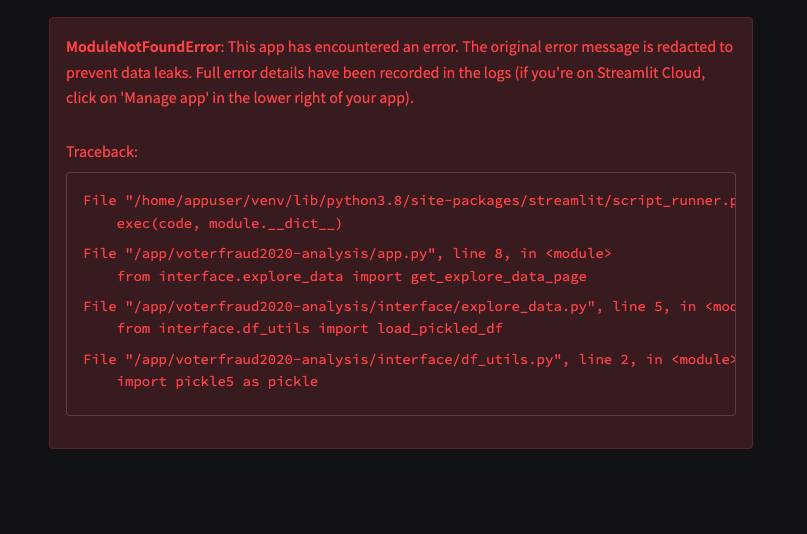

@georgelenton @streamlit Thanks for the heads up! Will look into this
