Aitor Ormazabal

82 posts

@aormazabalo

Member of Technical Staff @RekaAILabs. Prev. PhD @ixaGroup, @Aiatmeta FAIR

Introducing Parallel Thinking for Reka Research! Instead of one line of reasoning, we explore multiple paths in parallel, then resolve the best answer. Big accuracy gains on Research-Eval (+4.2) and SimpleQA (+3.5). Now live in the Reka Research API!

An advanced version of Gemini with Deep Think has officially achieved gold medal-level performance at the International Mathematical Olympiad. 🥇 It solved 5️⃣ out of 6️⃣ exceptionally difficult problems, involving algebra, combinatorics, geometry and number theory. Here’s how 🧵

Reka Research is our AI agent that scours the web to answer your toughest questions. Ready to unlock its full potential? Learn directly from the team who built it!

For our third day of releases we are open sourcing some of our building blocks! I'm particularly happy to be open-sourcing RekaQuant 🗜️, part of our internal quantization stack that I led last year. Short thread on our approach to quantization 🧵1/n

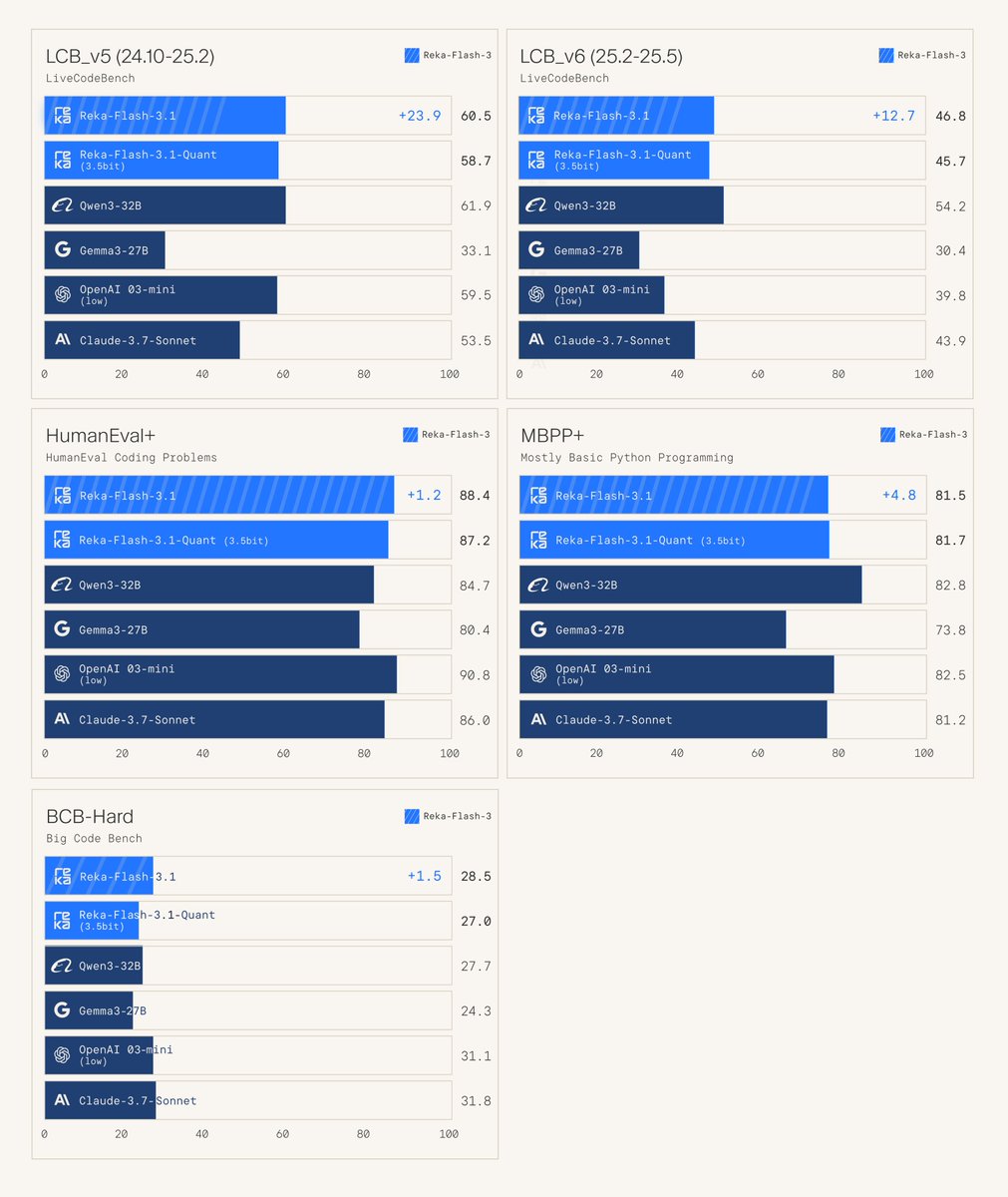

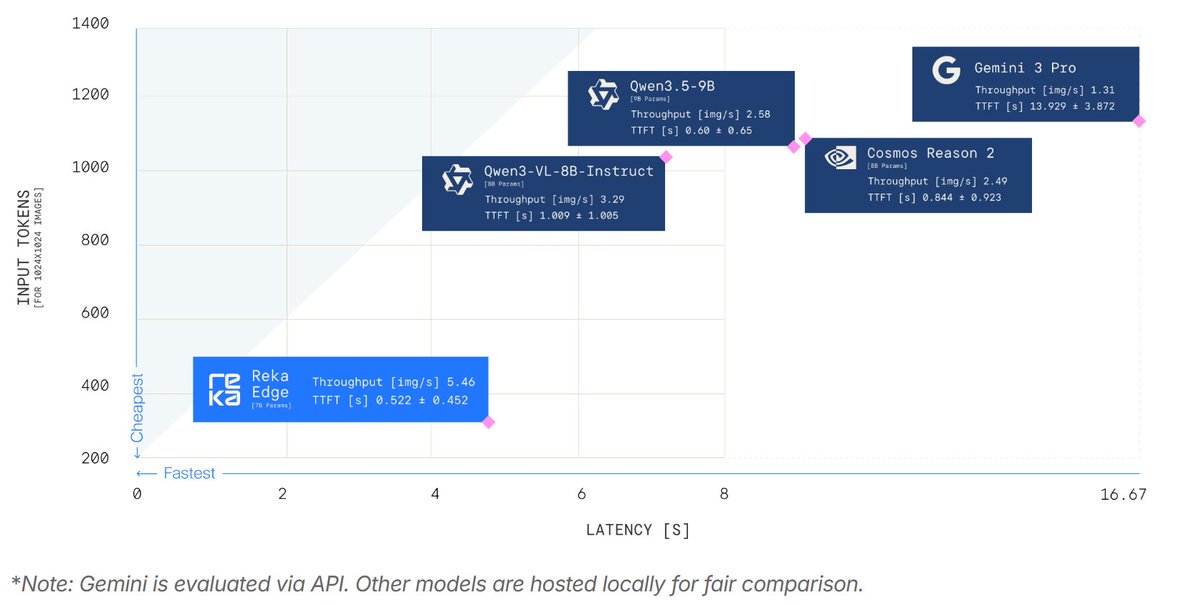

📢 We are open sourcing ⚡Reka Flash 3.1⚡ and 🗜️Reka Quant🗜️. Reka Flash 3.1 is a much improved version of Reka Flash 3 that stands out on coding due to significant advances in our RL stack. 👩‍💻👨‍💻 Reka Quant is our state-of-the-art quantization technology. It achieves near-lossless compression of Reka Flash 3.1 to 3.5 bits. 💻
