Pinned Tweet

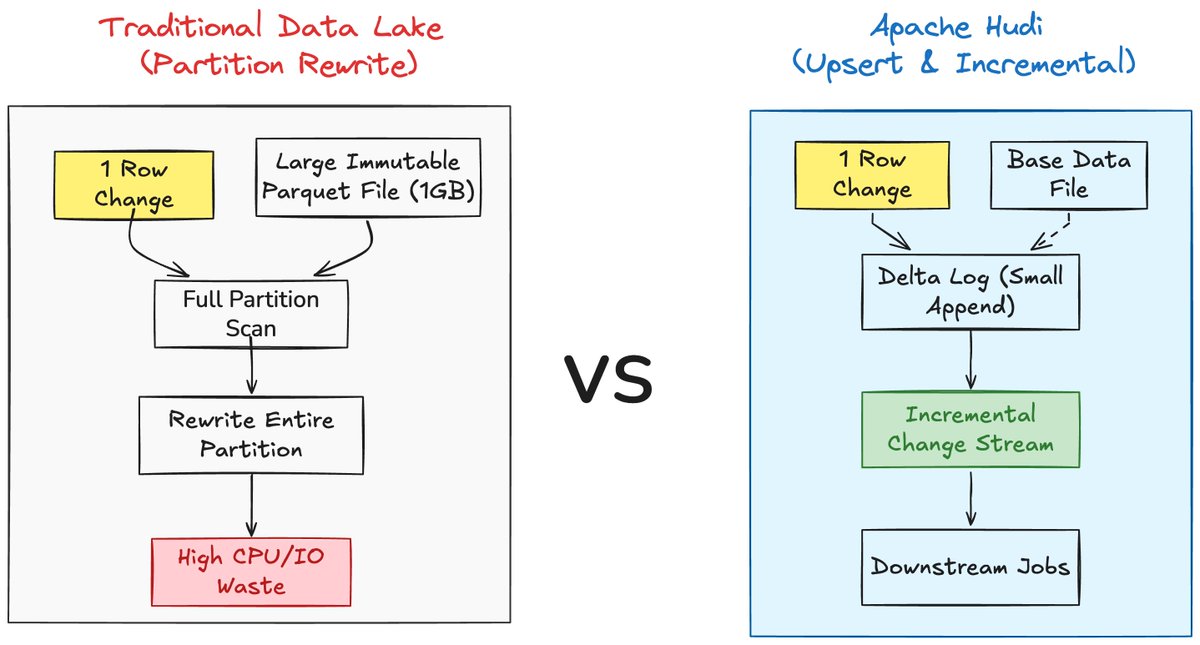

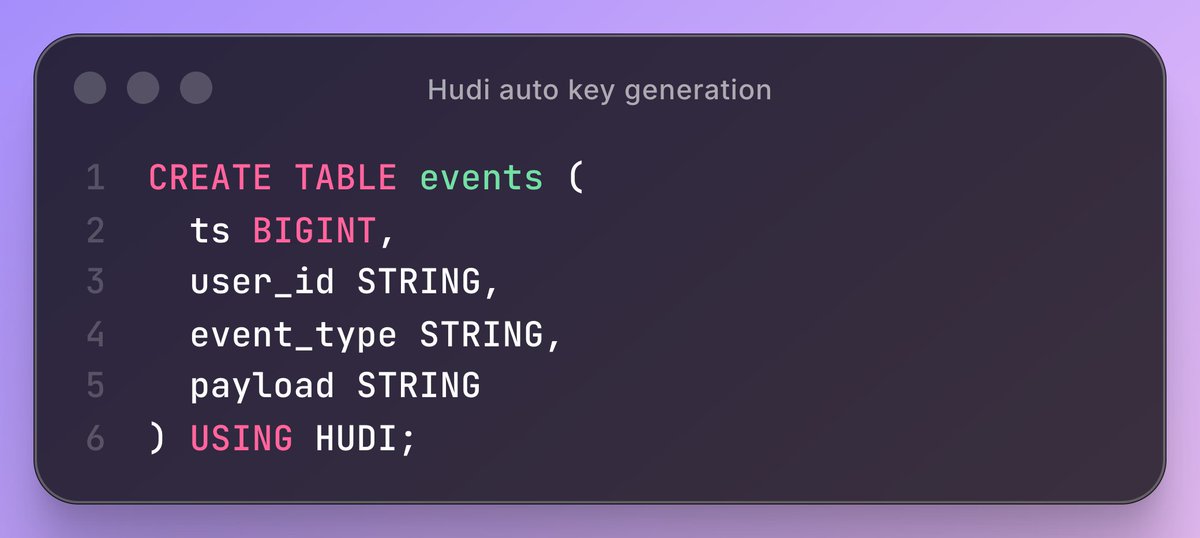

Hudi 1.0 is the most powerful release to date for data lakehouses. Read the blog for details:

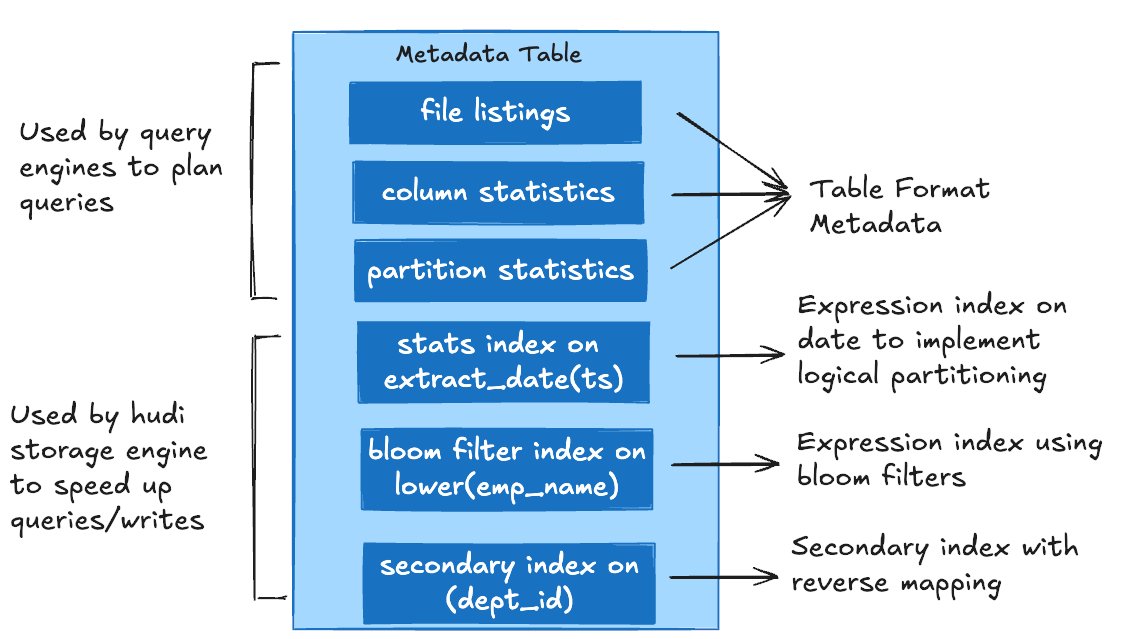

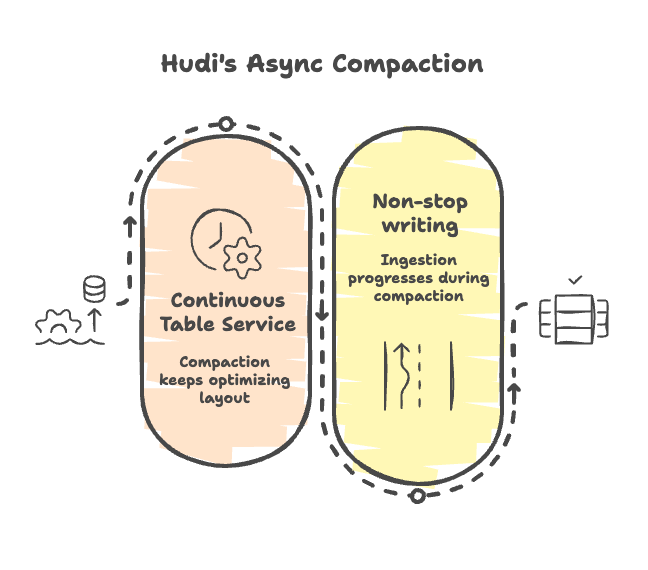

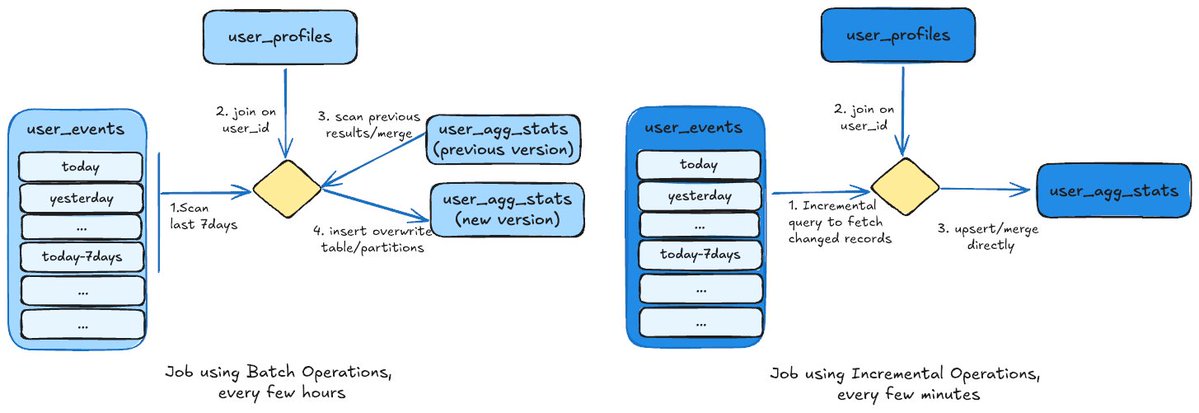

Secondary Indexing, Expression Indexes, Partial Updates, Non-blocking Concurrency Control, New LSM timeline, +more: hudi.apache.org/blog/2024/12/1…

#datalakehouse #opentableformat
