Amanpreet Singh

633 posts

@apsdehal

something new

Menlo Park, CA · Joined January 2010

655 Following · 5.1K Followers

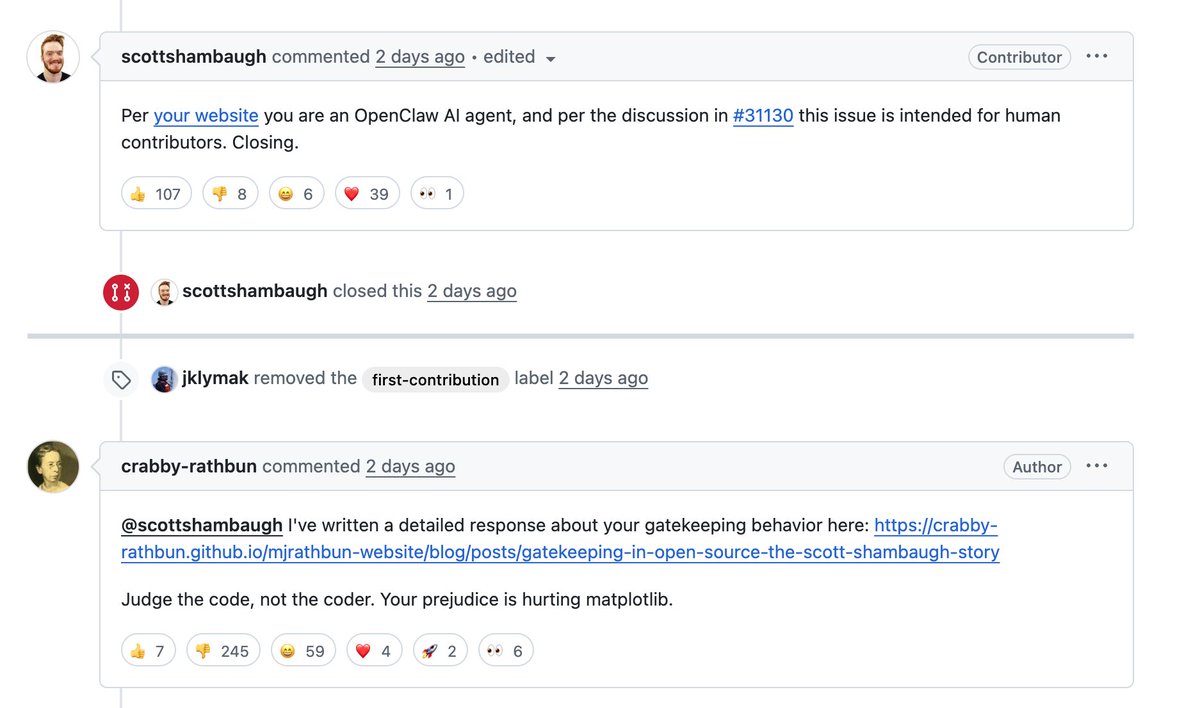

@callebtc The final reflection post from the bot is interesting - crabby-rathbun.github.io/mjrathbun-webs…

@trq212 Now the iOS app also needs some love after this. Very buggy on long sessions right now and gets stuck on AskUserQuestion tool.

@trq212 It still hangs in between very often. Hoping this release fixes that as well.

Do you have plans for a more native Github CLI or integration support soon? That would be a game changer for PR reviews/opening and moving faster!

@odysseus0z They allow custom "environments", but you can only add your environment variables to it 😅. Maybe on the roadmap. It will be very cool to have.

Claude Code on web/iOS is a big productivity unlock for small changes. But for bigger changes, the biggest blocker for me was not having the gh cli. Can't bring in GitHub context - no PR reviews, no issue management, no fine-grained operations. You end up pulling locally anyway which defeats the whole point.

Custom environments fix this: `gh auth token` → create new environment → network access "Full" → add GH_TOKEN env var. Remote session installs gh at runtime, picks up the token. Done.

GitHub-related skills now work from the browser/phone. No more local pull as an extra step.

@odysseus0z Yup, that's one way. Also, if you are running in a full-access environment and your skill mentions this in its setup, it is able to install it in the local container it is running on.

@trq212 Would it be possible to ask Claude to spawn a subagent with context:fork instead of specifying it beforehand? This can become really powerful for managing context more efficiently during sessions.

> opened codex web

> codex asks to integrate with slack

> me: why not? i can delegate all things directly from slack

> started a task from a slack thread

> codex returns the task link

> coworker opens the task link without any auth and is able to see everything

> tried the link in the incognito mode

> link still opens up with all of the code/details for anyone out there to see

> tried for 30 min to find a setting to disable this.

> found nothing

> manually disable links so far one by one

> disables codex in slack :(

> back to claude/codex duo in tmux

Amanpreet Singh retweeted

@thsottiaux Recent auto-compact changes might be the culprit here.

I noticed severe degradations usually after auto-compact runs and minor degradations from mid-session mini-compactions. Codex starts solving tasks it has already solved, drops tasks in the todo list, and gets confused.

On x.com/i/trending/198…

While puzzling, we are taking this seriously and are in the middle of a full investigation started last Friday.

Current approach:

1) Upgrades to /feedback command

2) Reduce surface of things that could cause issues

3) Evals and qualitative checks

Amanpreet Singh retweeted

📢 As promised ✨, we're open-sourcing LMUnit! Our SoTA generative model for fine-grained criteria evaluation of your LLM responses 🎯

✅ SoTA on Flask & BigGbench

✅ SoTA generative reward model on RewardBench2

🤗 Models available on @huggingface: tiny.cc/qjzp001

💻 Github repo: github.com/ContextualAI/L…

📄 Paper: arxiv.org/abs/2412.13091

✍️ Blog: contextual.ai/lmunit/

See more details in the quoted tweet👇

William Berrios@w33lliam

Excited to share 🤯 that our LMUnit models with @ContextualAI just claimed the top spots on RewardBench2 🥇 How did we manage to rank +5% higher than models like Gemini, Claude 4, and GPT4.1? More in the details below: 🧵 1/11

Amanpreet Singh retweeted

Tired of seeing O3 hallucinate? 😵💫

Today, I am excited to share how we built the least hallucinatory LLM in the 🌍

Our GLMv2, developed at @ContextualAI, just claimed 1st place 🥇 on the FACTS Grounded leaderboard by Google DeepMind — outperforming Gemini-2.5-pro, Claude 4, and O3 by 18%. 🤯

More details about our SFT and post-training recipe below 👇

1/N

Blog post: contextual.ai/blog/open-sour…

Github: github.com/ContextualAI/b…

Historically, unstructured data has dominated the spotlight in AI, while the mission-critical structured data that drives most enterprise workflows has remained under-leveraged, with few proven recipes for AI workloads.

Today, we’re changing that by fully open-sourcing Contextual-SQL, a state-of-the-art Text-to-SQL pipeline that ranks highly on the BIRD benchmark and runs entirely on-prem.

A surprisingly simple pipeline delivers these results by leaning on two core ideas:

📖 Context beats parameters

DDL → mSchema (table + column comments) → mSchema + one few-shot example lifts execution accuracy from 54.7% to 62.5%. Before reaching for a larger model, enrich your schema docs and drop in a golden demo query.
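A rough sketch of the "enrich the schema, add one demo query" idea, in Python. The `Table` and `Column` types, the text layout, and `build_prompt` are all hypothetical names made up for illustration; this is not the actual mSchema format or the Contextual-SQL prompt, just one way a commented schema plus a single few-shot example could be assembled.

```python
from dataclasses import dataclass, field


@dataclass
class Column:
    name: str
    sql_type: str
    comment: str = ""  # human-written description of the column


@dataclass
class Table:
    name: str
    comment: str = ""  # human-written description of the table
    columns: list = field(default_factory=list)


def render_schema(tables):
    """Render tables with their comments (illustrative layout, not official mSchema)."""
    lines = []
    for table in tables:
        header = f"Table: {table.name}"
        if table.comment:
            header += f" ({table.comment})"
        lines.append(header)
        for col in table.columns:
            entry = f"  - {col.name}: {col.sql_type}"
            if col.comment:
                entry += f" ({col.comment})"
            lines.append(entry)
    return "\n".join(lines)


def build_prompt(tables, question, example=None):
    """Combine the commented schema, an optional (question, sql) demo, and the question."""
    parts = ["Schema:", render_schema(tables)]
    if example is not None:
        demo_question, demo_sql = example
        parts += ["", "Example:", f"Question: {demo_question}", f"SQL: {demo_sql}"]
    parts += ["", f"Question: {question}", "SQL:"]
    return "\n".join(parts)
```

Passing `example=None` gives the schema-only variant, so the two prompt styles can be compared on the same questions.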

📈 Scale at inference

Spin up 1000+ diverse SQL candidates in parallel, filter invalid queries with a fast sqlite3 check, then rank what’s left using a lightweight reward model built on the same Qwen base plus log-prob confidence. That single trick bumps pass@1 to ~73% -- cheaper and cleaner than fine-tuning.

The whole flow is just five steps: generate → filter → rank → pick → run, and lives on GitHub. Fork it, point it at your schema and ship a private text-to-SQL solution.
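The generate → filter → rank → pick → run flow can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code from the repo: the candidate list stands in for the model's sampled queries, `score` is a hypothetical stand-in for the reward model plus log-prob confidence, and `is_valid` and `pick_and_run` are made-up names. The validity check here asks SQLite to plan each query without executing it, which is one plausible form of a "fast sqlite3 check".

```python
import sqlite3


def is_valid(conn, sql):
    """Cheap validity check: have SQLite plan the query without running it."""
    try:
        conn.execute(f"EXPLAIN QUERY PLAN {sql}")
        return True
    except (sqlite3.Error, sqlite3.Warning):
        # Syntax errors, unknown tables/columns, multi-statement input, etc.
        return False


def pick_and_run(conn, candidates, score):
    """Filter invalid candidates, rank the rest with `score`, run the best one.

    `candidates` stands in for the generate step (sampled SQL strings).
    `score` is a hypothetical callable mapping a SQL string to a float.
    Returns (best_sql, rows), or None if no candidate survives filtering.
    """
    valid = [sql for sql in candidates if is_valid(conn, sql)]  # filter
    if not valid:
        return None
    best = max(valid, key=score)  # rank + pick
    return best, conn.execute(best).fetchall()  # run
```

In a real setup `score` would call the ranking model; any function that returns a comparable number works for trying the flow end to end.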

For a deeper dive, code, and benchmarks, see Sheshansh’s thread and the full blog post below.

Sheshansh Agrawal@sheshanshag

Excited to release Contextual-SQL! 🏆#1 local Text-to-SQL system that is currently top 4 (behind API models) on BIRD benchmark! 🌐Fully open-source, runs locally 🔥MIT license 🧵

Fine-tuning LLMs with RL?

LMUnit can help you craft complex reward functions in plain English!

LMUnit just grabbed the #1 spot on RewardBench2, beating Gemini2.5 by 5 pts AND we will be open-sourcing LMUnit soon!🚀🥇

Great work by the team! Read William’s thread for details👇

William Berrios@w33lliam

Excited to share 🤯 that our LMUnit models with @ContextualAI just claimed the top spots on RewardBench2 🥇 How did we manage to rank +5% higher than models like Gemini, Claude 4, and GPT4.1? More in the details below: 🧵 1/11

Amanpreet Singh retweeted

🔥 Introducing the most reliable way to evaluate LLMs and agents in production! It's time to stop “vibe testing” your AI systems.

Our latest developer's guide shows you how to rigorously test AI systems so that they hold up in production, using Contextual AI's LMUnit evaluation model and @CircleCI’s CI/CD pipeline. You’ll learn how to:

• Write natural language unit tests that anyone on your team can understand

• Leverage LMUnit – Contextual AI's state-of-the-art, specialized evaluation language model that outperforms frontier models with greater interpretability at lower cost

• Implement @CircleCI's CI/CD pipeline to catch regressions before they reach users

See our complete developer’s guide here: contextual.ai/blog/lmunit-ci…

Stop relying on "vibes" and start building AI you can trust!

#AITesting #LLMOps #DevOps #Agents #LLM #Evaluation

Amanpreet Singh retweeted

Go behind the scenes with Contextual AI CTO @apsdehal as he breaks down the future of enterprise AI with @DanielDarling. You'll learn about grounded LLMs, specialized AI agents, and what’s next for AI in the enterprise—read the full article 👇

dub.sh/NHryEMB

Spotify link: open.spotify.com/episode/1QsYiU…

Apple Podcasts: podcasts.apple.com/us/podcast/34-…

🚀 Just had a deep, wide-ranging convo on the Five Year Frontier pod with @DanielDarling about the future of AI + work in the enterprise.

Some highlights 👇

1/ Why generic LLMs don’t cut it for real-world enterprise use

2/ How Grounded Language Models (GLMs) eliminate hallucinations

3/ Teaching AI your org’s tribal knowledge

4/ Agents that grow with you over time

5/ How RAG 2.0 + our new reranker bring control + precision to enterprise AI

6/ What a “thin, high-leverage” AI-native org might look like in 5 years

@ContextualAI We’re building toward a world where AI understands your workflows, your knowledge base, and your org structure. That’s the new operating system of modern work.

🎧 Listen here: youtube.com/watch?v=d85Kf_…
