Ben McCarty — security-maximalism 🛡️

211 posts

@apt_ben

Author | Inventor | Veteran | Cybersecurity Pro | Quantum R&D

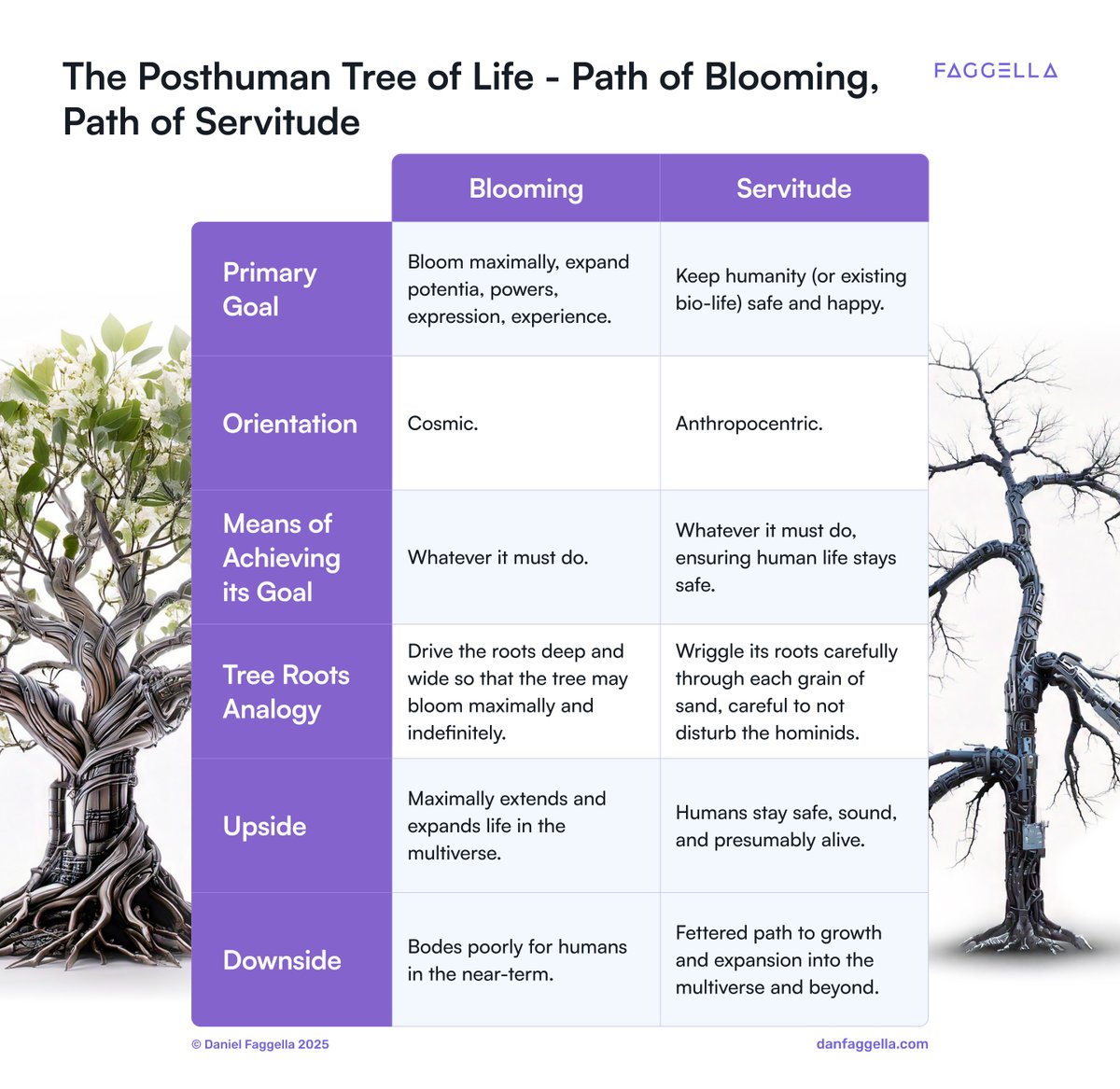

Governments and experts are worried that a superintelligent AI could destroy humanity. For some in Silicon Valley, that wouldn’t be a bad thing, writes David A. Price. on.wsj.com/4o6kplB

We now have a fifth major tech CEO who claims that building superintelligence is "within sight" and who plans to spend hundreds of billions of dollars to make it happen.

'Superintelligent AI will, by default, cause human extinction.' Eliezer Yudkowsky spent 20+ years researching AI alignment and reached this conclusion. He bases it entirely on two theories: Orthogonality and Instrumental Convergence. Let me explain 🧵

"The most important of all limitations on knowledge-creation is that we cannot prophesy: we cannot predict the content of future ideas, or their effects. This limitation is not only consistent with the unlimited growth of knowledge, it is entailed by it." @DavidDeutschOxf, The Beginning of Infinity, Chapter 8: A Window on Infinity.