Armsentientrobotics

@armsentient

Open-source desktop 12-DOF quadruped robot with arm/gripper, featuring intelligent servo control and voice recognition

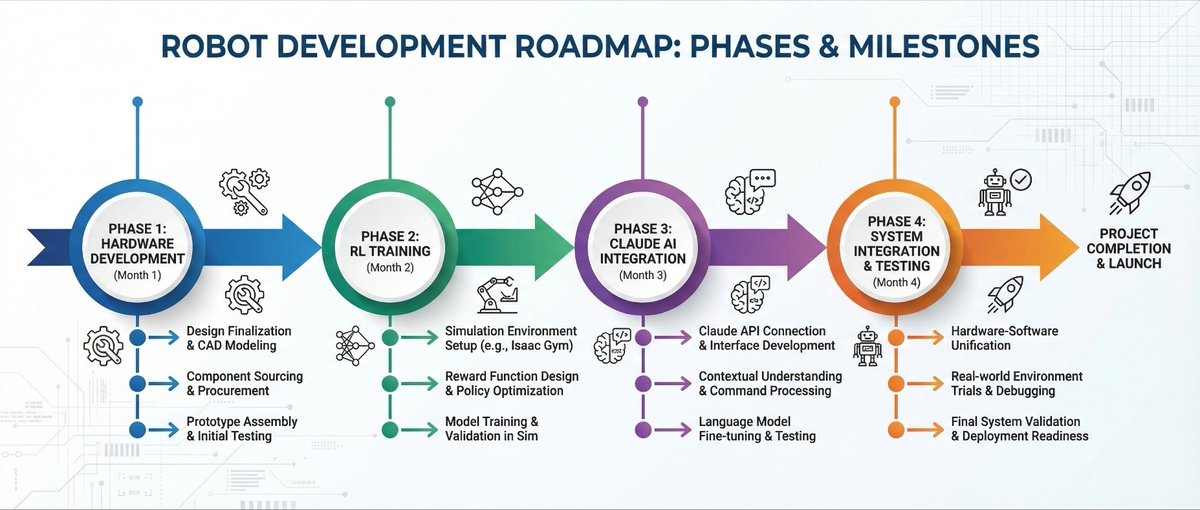

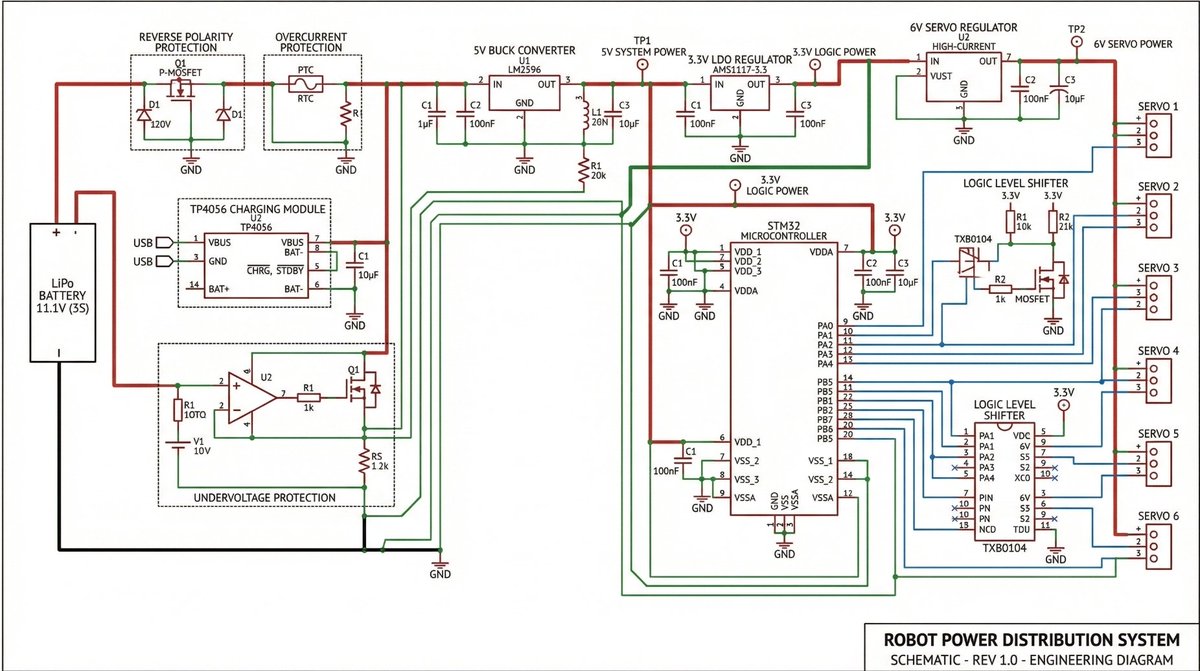

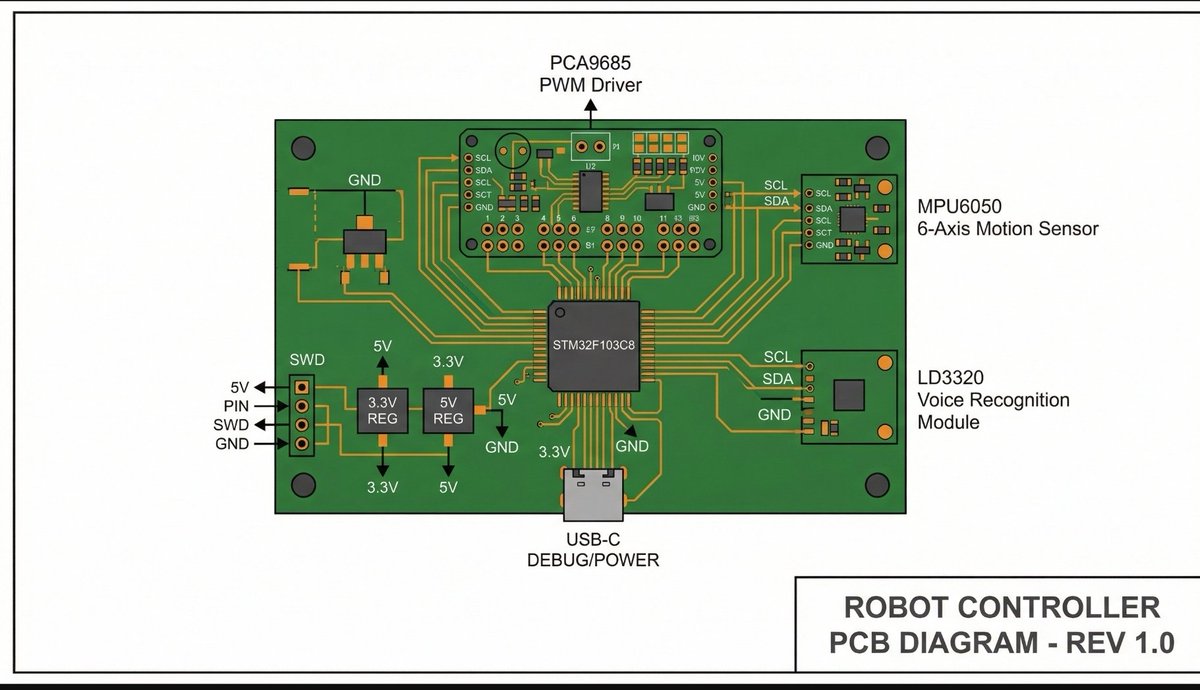

6 minutes of what 4 months of building a quadruped robot actually looks like.

started in SolidWorks. every bracket, every servo mount, every joint clearance designed from scratch. the blue panel you see? that's the main chassis plate, CNC-ready, holding 12 servos, an STM32 brain, an IMU, and a full robotic arm.

this isn't "i forked a repo and added a README." this is PCB schematics → CAD modeling → 3D printing → firmware flashing → gait tuning → voice integration → RL training pipeline.

leg brackets designed around MG92R servo dimensions. arm linkage with gripper clearance calculated for pickup sequences. mounting holes for the PCA9685 servo driver and MPU6050 IMU. power routing split between the MCU and servo rails, because 12 servos pulling 5A will brown out your controller faster than you can debug it.

4 months. 32 commits. hardware committed before a single line of hype was written.

the robot walks. it grabs. it listens. it thinks. and now it has a token. $ASR github.com/ohmyzaid/ArmSe…
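A minimal sketch of the arithmetic behind driving 12 leg servos through a PCA9685. It assumes the chip's nominal 25 MHz internal oscillator, a 50 Hz servo frame, and a 500 to 2500 µs pulse range for 0 to 180 degrees. The real MG92R calibration and the repo's channel order are not stated in the post, so the leg/joint names, `leg_channel`, and `angle_to_ticks` are hypothetical.

```python
# Hypothetical helper math for driving hobby servos through a PCA9685.
# Assumptions (not taken from the ArmSentient repo): 25 MHz internal
# oscillator, 50 Hz servo frame, and a 500-2500 us pulse for 0-180 degrees.

OSC_HZ = 25_000_000   # PCA9685 nominal internal oscillator frequency
PWM_HZ = 50           # typical hobby servo frame rate (20 ms period)
TICKS = 4096          # PCA9685 has 12-bit resolution per PWM period

LEGS = ("front_left", "front_right", "rear_left", "rear_right")
JOINTS = ("hip", "thigh", "knee")  # hypothetical names for the 3 joints per leg

# Legs take channels 0-11 in this layout; 12-15 are left for the arm/gripper.
ARM_CHANNELS = range(len(LEGS) * len(JOINTS), 16)


def prescale(osc_hz: int = OSC_HZ, pwm_hz: int = PWM_HZ) -> int:
    """PRE_SCALE register value: round(osc / (4096 * f)) - 1."""
    return round(osc_hz / (TICKS * pwm_hz)) - 1


def leg_channel(leg: str, joint: str) -> int:
    """Map a (leg, joint) pair to a PCA9685 channel, leg-major order."""
    return LEGS.index(leg) * len(JOINTS) + JOINTS.index(joint)


def angle_to_ticks(angle_deg: float, min_us: float = 500.0,
                   max_us: float = 2500.0, pwm_hz: int = PWM_HZ) -> int:
    """Convert a servo angle (0-180 degrees) to an OFF tick count (ON at 0)."""
    angle_deg = max(0.0, min(180.0, angle_deg))          # clamp to servo travel
    pulse_us = min_us + (max_us - min_us) * angle_deg / 180.0
    period_us = 1_000_000 / pwm_hz                        # 20 000 us at 50 Hz
    return round(pulse_us / period_us * TICKS)


if __name__ == "__main__":
    print(prescale())                          # 121
    print(leg_channel("rear_right", "knee"))   # 11
    print(angle_to_ticks(90))                  # 307
```

Under these assumptions a 90-degree command is a 1500 µs pulse, or 307 of 4096 ticks. The pulse limits are parameters rather than constants because individual servos usually need per-joint trim.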

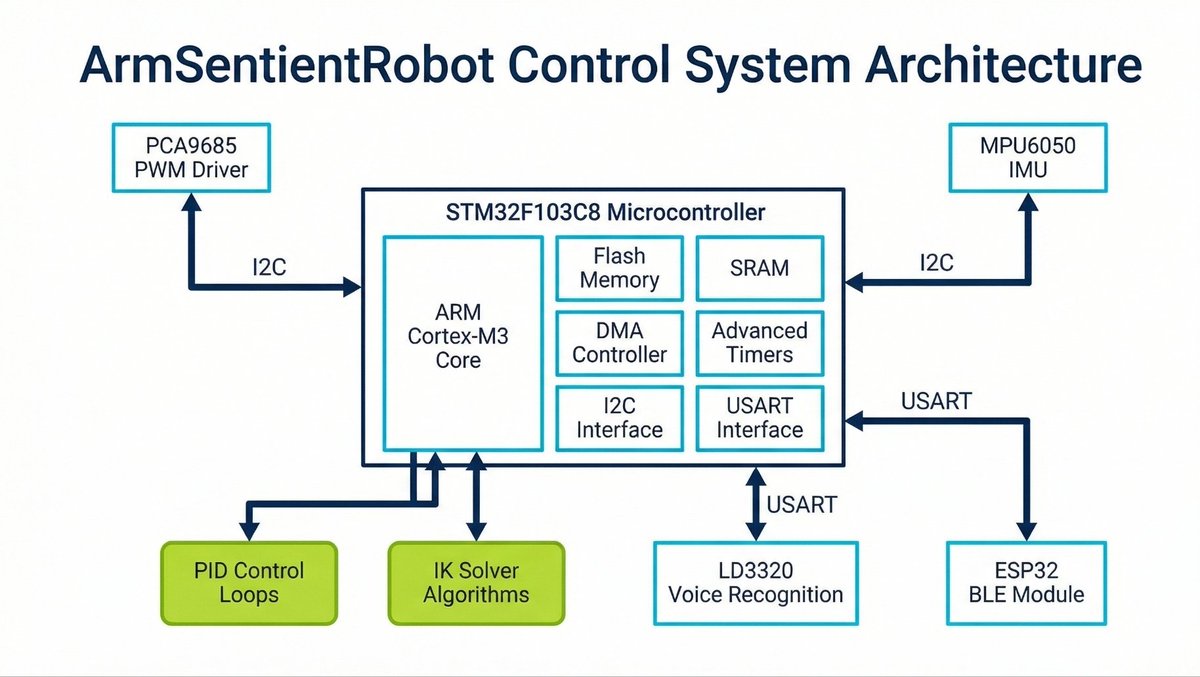

this is the wiring diagram of a robot that walks, grabs, and listens to your voice.

STM32F103C8 at the center, running the whole show. left side: MPU6050 IMU feeding accelerometer and gyro data so the robot knows which way is up. right side: LD3320 voice module, so you can talk to it and it actually responds. below the brain: PCA9685 servo driver on I2C, turning a single two-wire bus (SDA/SCL) into 16 independent PWM channels. 12 of those channels drive MG92R high-torque servos, 3 per leg, 4 legs. the remaining channels run the gripper/arm mechanism on the far right.

power: LiPo battery with separated rails. servo power goes straight to the PCA9685's V+ line. logic power goes to the STM32. cross those rails and 12 servos stalling simultaneously will kill your microcontroller in milliseconds.

every wire on this diagram exists on a real PCB. schematics committed to GitHub 4 months ago. this isn't a Fritzing sketch someone made in an afternoon. this is production wiring for a robot that's already walking.
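The "knows which way is up" part can be sketched from the accelerometer alone. This assumes the MPU6050 is left at its default ±2 g range (16384 LSB/g) and that the six bytes starting at ACCEL_XOUT_H (0x3B) have already been read over I2C. The helper names `parse_accel` and `tilt_deg` are hypothetical, and gyro fusion (for example a complementary filter), which a walking robot would typically need, is left out.

```python
import math
import struct

ACCEL_LSB_PER_G = 16384.0  # MPU6050 sensitivity at the default +/-2 g range


def parse_accel(raw: bytes) -> tuple[float, float, float]:
    """Decode the 6 bytes read from ACCEL_XOUT_H (0x3B) into g units.

    Each axis is a big-endian signed 16-bit integer (X, Y, Z order).
    """
    ax, ay, az = struct.unpack(">hhh", raw[:6])
    return ax / ACCEL_LSB_PER_G, ay / ACCEL_LSB_PER_G, az / ACCEL_LSB_PER_G


def tilt_deg(ax: float, ay: float, az: float) -> tuple[float, float]:
    """Roll and pitch in degrees, treating the measured acceleration as gravity.

    Only valid while the body is not otherwise accelerating.
    """
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.sqrt(ay * ay + az * az)))
    return roll, pitch


if __name__ == "__main__":
    level = struct.pack(">hhh", 0, 0, 16384)   # robot flat and still
    print(tilt_deg(*parse_accel(level)))
```

A level, motionless robot reads about (0, 0, 1) g, giving zero roll and pitch; if gravity splits equally between Y and Z, roll comes out at 45 degrees. Because every reading is treated as pure gravity, values taken mid-stride will be noisy, which is why the gyro data matters.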