Arnavi Chheda-Kothary

129 posts

@arnavic

PhD student @ University of Washington CSE | Previously @ Ai2, MSFT, Columbia, UW

Joined March 2010

174 Following, 209 Followers

Arnavi Chheda-Kothary retweeted

Congratulations to the Super Bowl champion @Seahawks! This defense was special. MVP Kenneth Walker was dominant. And Sam Darnold gave us one of the best comeback stories in a long time. Enjoy the celebration.

Belated update: I defended my PhD last month!

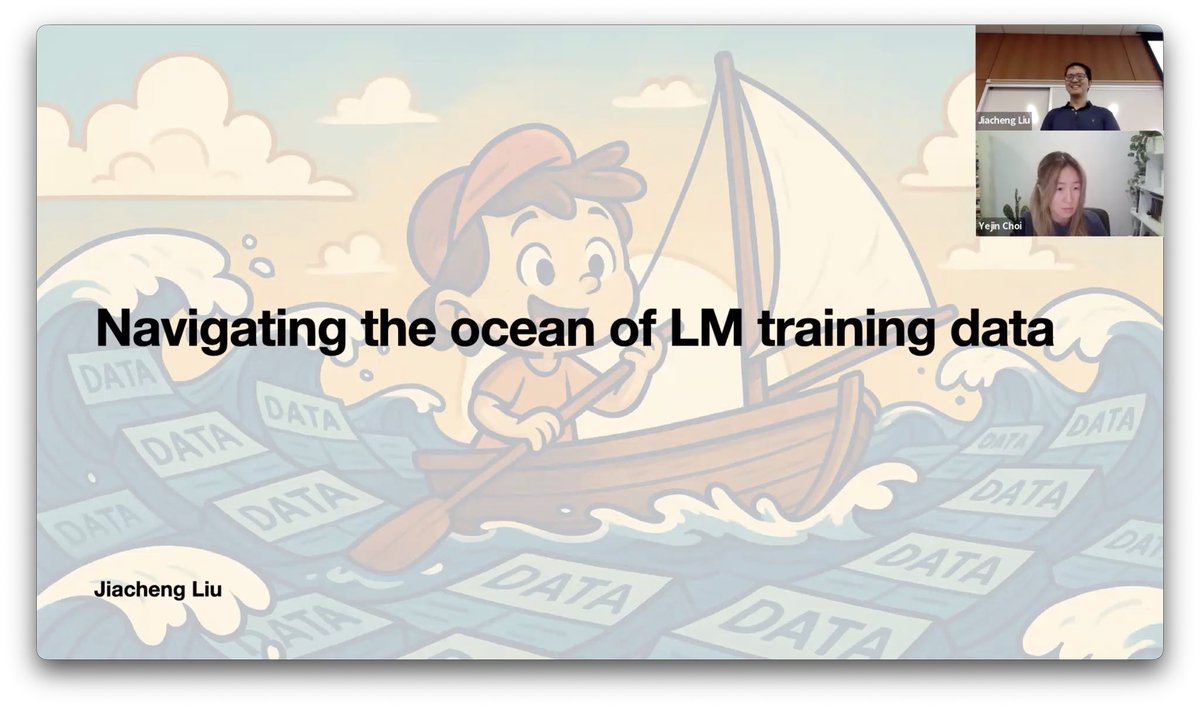

I am tremendously grateful to my advisors, @HannaHajishirzi and @YejinChoinka. Without their incredible support, I wouldn’t have had so much fun exploring bold ideas, like taking a journey into the ocean of LLM pretraining data. 🥰🥰

It’s been incredible for me to return to Ai2 as a student researcher on this project with @turingmusician @lucyluwang and @josephcc, getting to combine my background in accessibility and engineering to bring this prototype to life! Please feel free to reach out with feedback.

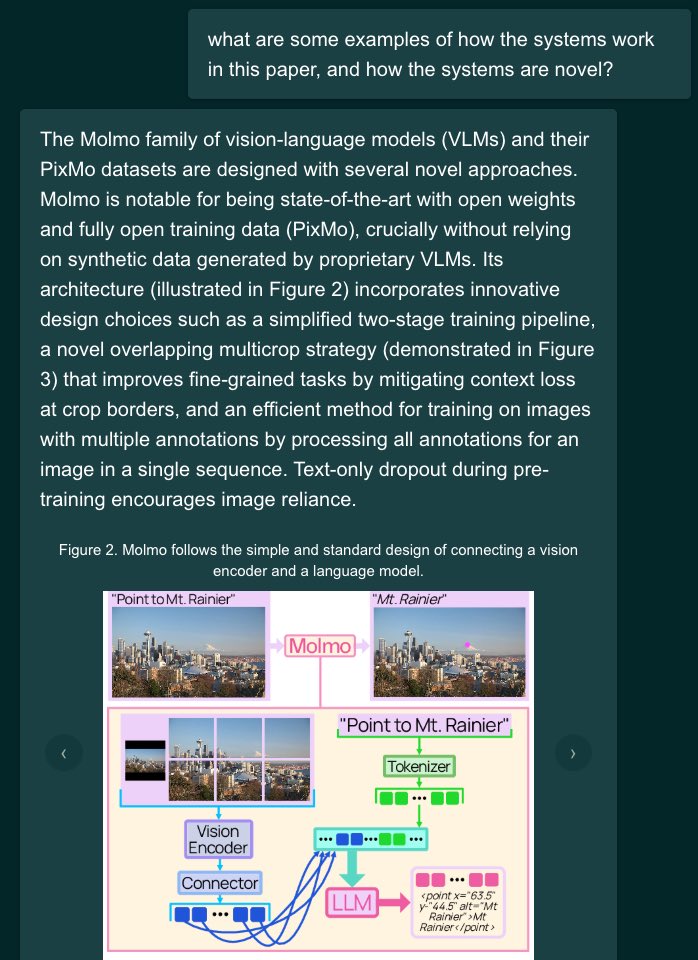

Ever want to ask questions about a paper, including its figures & tables? 📊📈 Want smoother interactions w/papers on desktop & mobile?

Try Paper+Figure QA, a new tool from @allen_ai that answers with the original figures, tables, and excerpts from papers: paperfigureqa.allen.ai

Arnavi Chheda-Kothary retweeted

Agent benchmarks don't measure true *AI* advances

We built one that's hard & trustworthy

👉AstaBench tests agents w/ *standardized tools* on 2400+ scientific research problems

👉SOTA results across 22 agent *classes*

👉AgentBaselines agents suite

🆕arxiv.org/abs/2510.21652

🧵👇

Arnavi Chheda-Kothary retweeted

@umsi we developed accessibility guidelines to evaluate AI coding tools like @Replit, @julesagent and @code. Blind or visually impaired developers, #A11Y experts and academics, please participate in our study to ensure programming remains accessible!

idea11y.dev/VibeCheck/

Thank you to my collaborators, and a huge huge thank you to @BrianHCI who has stuck with me and with this work through several rejection cycles. This work happened as a part of my Master’s thesis working at the CEAL lab, and I’m grateful that we saw it over the finish line! (6/6)

Read more about our work here — dl.acm.org/doi/10.1145/37…. For those at CHI, I’ll be presenting on Wednesday during the Spatial Interactions session! Please come find me then or anytime this week to chat further about all things screen readers, accessibility, and AI! (5/6)

Kicking off my very first CHI today! Great to be at #CHI2025 in beautiful Yokohama, where I’ll be presenting work on incorporating spatial interactions into desktop screen readers titled: "It Brought Me Joy": Opportunities for Spatial Browsing in Desktop Screen Readers. (1/6)

It was such a great experience to have been a part of bringing this to the Ai2 Playground! Congrats to the whole team 🎉

Ai2 @allen_ai

For years it’s been an open question — how much is a language model learning and synthesizing information, and how much is it just memorizing and reciting? Introducing OLMoTrace, a new feature in the Ai2 Playground that begins to shed some light. 🔦

Today we're unveiling OLMoTrace, a tool that enables everyone to understand the outputs of LLMs by connecting to their training data.

We do this on unprecedented scale and in real time: finding matching text between model outputs and 4 trillion training tokens within seconds. ✨

A huge thanks to all my collaborators, and special shoutout to my wonderful advisors @wobbrockjo and @jonfroehlich.

Excited to be at #IUI25 in Cagliari, Italy this week to present our paper “ArtInsight: Enabling AI-Powered Artwork Engagement for Mixed Visual-Ability Families”. You can find the full paper here: dl.acm.org/doi/full/10.11…
