Arthur Ostapenko

8.3K posts

@arthurostapenko

explorer, researcher, builder

Spain · Joined November 2009

5.3K Following · 859 Followers

Arthur Ostapenko retweeted

We’re launching the beta for our new commercial AI product: Sakana Fugu 🐡, a multi-agent orchestration system!

Blog: sakana.ai/fugu-beta

Fugu hits SOTA on SWE-Pro, GPQA-D, and ALE-Bench, and has been our internal secret weapon. It dynamically coordinates frontier models, autonomously selecting the optimal agent combinations and roles for each task.

Because Fugu is available as an OpenAI-compatible API, you can seamlessly integrate it into your existing workflows with minimal changes.

🐟 Fugu Mini: High-speed orchestration optimized for latency

🐡 Fugu Ultra: Full model pool utilization for deep, complex reasoning

Apply for the beta test here: forms.gle/BtKkhc2CfLKk1d…

Arthur Ostapenko retweeted

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.
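
Both V4 variants list a total and an active parameter count. Reading "active" as the parameters used for each token (our interpretation, as in sparse mixture-of-experts models), the active share follows directly from the figures above:

```latex
\frac{49\,\text{B}}{1.6\,\text{T}} = \frac{49}{1600} \approx 3.1\%
\qquad
\frac{13\,\text{B}}{284\,\text{B}} = \frac{13}{284} \approx 4.6\%
```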

Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!

📄 Tech Report: huggingface.co/deepseek-ai/De…

🤗 Open Weights: huggingface.co/collections/de…

1/n

Arthur Ostapenko retweeted

🚀 Meet Qwen3.6-27B, our latest dense, open-source model, packing flagship-level coding power!

Yes, 27B, and Qwen3.6-27B punches way above its weight. 👇

What's new:

🧠 Outstanding agentic coding — surpasses Qwen3.5-397B-A17B across all major coding benchmarks

💡 Strong reasoning across text & multimodal tasks

🔄 Supports thinking & non-thinking modes

✅ Apache 2.0 — fully open, fully yours

Smaller model. Bigger results. Community's favorite. ❤️

We can't wait to see what you build with Qwen3.6-27B! 👀

🔗👇

Blog: qwen.ai/blog?id=qwen3.…

Qwen Studio: chat.qwen.ai/?models=qwen3.…

Github: github.com/QwenLM/Qwen3.6

Hugging Face:

huggingface.co/Qwen/Qwen3.6-2…

huggingface.co/Qwen/Qwen3.6-2…

ModelScope:

modelscope.cn/models/Qwen/Qw…

modelscope.cn/models/Qwen/Qw…

Arthur Ostapenko retweeted

Imagine every pixel on your screen, streamed live directly from a model. No HTML, no layout engine, no code. Just exactly what you want to see.

@eddiejiao_obj, @drewocarr and I built a prototype to see how this could actually work, and set out to make it real. We're calling it Flipbook. (1/5)

Arthur Ostapenko retweeted

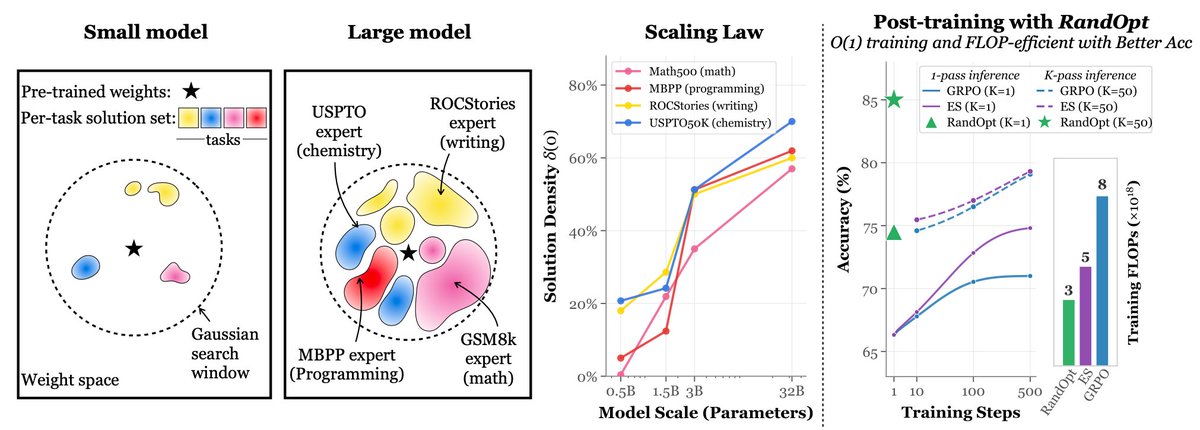

Discovering Novel LLM Experts via Task-Capability Coevolution

Project: acdc-llm.github.io

Paper: arxiv.org/abs/2604.14969

Can we build AI that is smarter than its parts? This week, our team will present AC/DC⚡ at #ICLR2026.

The current paradigm in AI assumes that to solve more complex problems, we must train a single, ever-larger model. But no single model can excel at every task without massive computational costs.

Instead of building one monolithic model, we asked: what if we coevolved a diverse collective of specialized experts? We introduce Assessment Coevolving with Diverse Capabilities (AC/DC).

It is a framework that simultaneously evolves a population of LLMs, produced through evolutionary model merging, alongside an archive of synthetic tasks generated by an AI scientist. As the tasks become more complex, the models must develop distinct, specialized skills to solve them.
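
Evolutionary model merging builds new models by combining the weights of existing ones. The sketch below is a minimal illustration under our own assumptions: models are plain dicts of parameter lists (a hypothetical format), the merge operator is linear interpolation between two randomly chosen parents, and the mixing coefficient is drawn uniformly. AC/DC's actual merge operator and search procedure may differ.

```python
import random

# A toy "model": parameter name -> flat list of weights (hypothetical format).
Weights = dict[str, list[float]]


def linear_merge(parent_a: Weights, parent_b: Weights, alpha: float) -> Weights:
    """Child = alpha * A + (1 - alpha) * B, computed parameter by parameter."""
    if parent_a.keys() != parent_b.keys():
        raise ValueError("parents must share the same architecture")
    return {
        name: [alpha * a + (1.0 - alpha) * b
               for a, b in zip(parent_a[name], parent_b[name])]
        for name in parent_a
    }


def evolve_step(population: list[Weights], rng: random.Random) -> Weights:
    """Pick two distinct parents and merge them with a random mixing coefficient."""
    parent_a, parent_b = rng.sample(population, 2)
    return linear_merge(parent_a, parent_b, alpha=rng.uniform(0.0, 1.0))


population = [{"w": [0.0, 1.0]}, {"w": [2.0, 3.0]}, {"w": [4.0, 5.0]}]
child = evolve_step(population, random.Random(0))
print(linear_merge({"w": [1.0, 3.0]}, {"w": [3.0, 1.0]}, alpha=0.5))  # {'w': [2.0, 2.0]}
```

A real system would score each child on the task archive before deciding whether to keep it; that selection step is omitted here.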

Crucially, AC/DC selects models based on Quality-Diversity. It keeps models not just because they score high on average, but because they solve different problems than the rest of the population.
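
One simple way to capture "keep models that solve different problems" is greedy coverage selection. This is our stand-in for illustration, not AC/DC's Quality-Diversity rule: each model is summarized by the set of synthetic-task IDs it solves (a hypothetical representation), and we repeatedly keep the model that adds the most not-yet-solved tasks.

```python
def select_diverse(solved: dict[str, set[str]], k: int) -> list[str]:
    """Return up to k model names, chosen greedily to maximize combined task coverage."""
    chosen: list[str] = []
    covered: set[str] = set()
    remaining = dict(solved)
    while remaining and len(chosen) < k:
        # Marginal gain: tasks this model solves that no chosen model solves yet.
        best = max(remaining, key=lambda name: len(remaining[name] - covered))
        gain = remaining.pop(best) - covered
        if not gain:
            break  # no remaining model adds a new capability
        chosen.append(best)
        covered |= gain
    return chosen


# Hypothetical population: which synthetic tasks each model solves.
solved = {
    "generalist": {"t1", "t2", "t3", "t4"},
    "clone": {"t1", "t2", "t3"},
    "specialist": {"t5", "t6"},
}
print(select_diverse(solved, k=2))  # ['generalist', 'specialist']
```

Here `clone` solves more tasks than `specialist` but is never kept, because everything it solves is already covered by `generalist`.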

The results show that a collaborative task force of 8 small, evolved models can outperform a massive 72B-parameter model while using significantly fewer total parameters. These models genuinely specialize, providing completely different yet correct approaches to complex problems.

This suggests a new path forward for AI development, creating highly capable, parameter-efficient systems through collective intelligence rather than relying solely on brute-force scaling.

OpenReview: openreview.net/forum?id=efNIN…

Arthur Ostapenko retweeted

Can LLMs flip coins in their heads?

When an LLM is prompted to “Flip a fair coin” 100 times, the heads-to-tails ratio drifts far from 50:50. LLMs can understand what the target probability should be, but generating outputs that faithfully follow a given distribution is a separate problem.
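
How far is "far from 50:50"? One quick way to quantify it (a sketch, assuming flips are collected as a string of `H`/`T` characters) is a z-score under the normal approximation to the binomial. For 100 flips of a fair coin the expected head count is 50 with a standard deviation of √(100 · 0.5 · 0.5) = 5, so a hypothetical 70:30 split sits 4 standard deviations out.

```python
import math


def heads_z_score(flips: str, p: float = 0.5) -> float:
    """Standard deviations between the observed head count and the expected n * p.

    `flips` is a string of 'H'/'T' characters (an assumed format for parsed
    model outputs); the normal approximation to the binomial is used.
    """
    n = len(flips)
    if n == 0:
        raise ValueError("need at least one flip")
    heads = flips.count("H")
    expected = n * p
    std = math.sqrt(n * p * (1 - p))
    return (heads - expected) / std


# Hypothetical 70:30 outcome over 100 flips (not data from the paper).
print(heads_z_score("H" * 70 + "T" * 30))  # 4.0
```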

This bias extends beyond coin flips. When LLMs are asked to generate multiple story ideas or brainstorm solutions, the outputs tend to cluster around a narrow range. The same probabilistic skew that distorts coin flips limits diversity in creative generation, recommendations, and other tasks where varied outputs are needed.

We discovered a prompting technique named String Seed of Thought (SSoT). The method is simple: instruct the LLM to generate a random string in its own output, then manipulate that string to derive its answer. It requires only a small addition to the prompt and no external random number generator.
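
The recipe above can be sketched as a prompt suffix plus a deterministic reduction from string to answer. Both the prompt wording and the reduction (sum of character codes modulo the number of options) are illustrative assumptions, not the paper's exact prompt. The helper lets you recompute the choice from the string the model wrote, to check that it followed the procedure.

```python
# Hypothetical prompt wording appended to the task (not quoted from the paper).
SSOT_PROMPT_SUFFIX = (
    "Before answering, write a random string of at least 20 characters inside "
    "<seed></seed> tags. Add up the character codes of that string, take the "
    "result modulo {n}, and use it as the 0-based index of your choice."
)


def choice_from_seed(seed: str, options: list[str]) -> str:
    """Reduce a seed string to one option: sum of character codes modulo len(options)."""
    if not options:
        raise ValueError("need at least one option")
    index = sum(ord(ch) for ch in seed) % len(options)
    return options[index]


prompt = "Flip a fair coin. " + SSOT_PROMPT_SUFFIX.format(n=2)
# 'a' + 'b' = 97 + 98 = 195, and 195 % 2 = 1, so the second option is chosen.
print(choice_from_seed("ab", ["Heads", "Tails"]))  # Tails
```

The intuition, as we read it, is that the answer now depends on the string the model wrote rather than on its biased sense of which answer "looks" random.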

SSoT significantly reduces output bias across a wide range of LLMs, both open and closed. With reasoning models (such as DeepSeek-R1), it reaches accuracy close to that of actual random sampling. The method generalizes from binary choices to n-way selections and arbitrary probability distributions. On the NoveltyBench diversity benchmark, SSoT outperformed other approaches across all six categories while maintaining output quality.
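
Moving from uniform n-way choices to an arbitrary target distribution can be sketched by reducing the seed to a number from 0 to 99 and splitting that range in proportion to the target probabilities. This percentage-based partition is our own illustrative assumption; the paper's procedure for general distributions may differ, and how uniform the roll is depends on how random the model's string actually is.

```python
def sample_from_seed(seed: str, weights: dict[str, int]) -> str:
    """Pick an outcome with probability proportional to its integer percentage.

    `weights` maps outcomes to percentages that must sum to 100,
    e.g. {"A": 70, "B": 30}. Assumed reduction: sum of character codes % 100.
    """
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    roll = sum(ord(ch) for ch in seed) % 100  # an integer in 0..99
    cumulative = 0
    for outcome, weight in weights.items():
        cumulative += weight
        if roll < cumulative:
            return outcome
    raise AssertionError("unreachable when weights sum to 100")


print(sample_from_seed("0", {"A": 70, "B": 30}))   # ord('0') = 48 < 70 -> A
print(sample_from_seed("ab", {"A": 70, "B": 30}))  # 195 % 100 = 95 >= 70 -> B
```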

This work will be presented at #ICLR2026!

Blog: pub.sakana.ai/ssot

Paper: arxiv.org/abs/2510.21150

OpenReview: openreview.net/forum?id=luXtb…

Arthur Ostapenko retweeted

Meet Kimi K2.6: Advancing Open-Source Coding

🔹Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), CharXiv w/ Python (86.7), Math Vision w/ Python (93.2)

What's new:

🔹Long-horizon coding - 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).

🔹Motion-rich frontend - Videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.

🔹Agent Swarms, elevated - 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.

🔹Proactive Agents - the K2.6 model powers OpenClaw, Hermes Agent, and others for 24/7 autonomous ops.

🔹Claw Groups (research preview) - bring your own agents or command your friends', with bots & humans in the loop.

-

K2.6 is now live on kimi.com in chat mode and agent mode.

For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code

-

🔗 API: platform.moonshot.ai

🔗 Tech blog: kimi.com/blog/kimi-k2-6

🔗 Weights & code: huggingface.co/moonshotai/Kim…

Arthur Ostapenko retweeted

🚨Why should one huge LLM know and solve everything? No single human does, yet our civilization innovates endlessly.

Introducing AC/DC - it continually coevolves a population of small expert LLMs that collectively outperform GPT-4o.

(ICLR 2026 w/ @SakanaAILabs) 👇🧵

Arthur Ostapenko retweeted

“Think Anywhere in Code Generation”

Most reasoning LLMs think before writing code. But coding often gets hard because the tricky parts only reveal themselves mid-implementation, when edge cases or the final return logic appear.

So this paper introduces Think-Anywhere, where models can pause and reason at any token position while generating code, then strip those thoughts out to leave clean executable code.
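
The "strip those thoughts out" step can be sketched as a post-processing pass. The `<think>...</think>` delimiters are a hypothetical choice for illustration; the paper's actual thought markers and clean-up may differ. Thoughts that occupy whole lines are removed together with their newline, and inline thoughts are cut out of the line they interrupt.

```python
import re

# Tempered token: match a thought body without ever crossing a closing marker.
_THOUGHT = r"<think>(?:(?!</think>).)*</think>"
# A thought standing on its own line(s): remove it along with its newline.
WHOLE_LINE = re.compile(r"^[ \t]*" + _THOUGHT + r"[ \t]*(?:\n|\Z)", re.DOTALL | re.MULTILINE)
# A thought embedded inside a line of code: remove just the thought.
INLINE = re.compile(_THOUGHT, re.DOTALL)


def strip_thoughts(generated: str) -> str:
    """Return the generated code with every (hypothetical) <think> span removed."""
    text = WHOLE_LINE.sub("", generated)
    text = INLINE.sub("", text)
    # Drop trailing spaces left behind where an inline thought used to be.
    return "\n".join(line.rstrip() for line in text.split("\n"))


generated = (
    "def clamp_negative(x):\n"
    "    <think>negative inputs should map to zero</think>\n"
    "    if x < 0:\n"
    "        return 0  <think>edge case handled</think>\n"
    "    return x\n"
)
print(strip_thoughts(generated))
```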

Trained with cold-start SFT plus execution-based RL, Think-Anywhere beats CoT, self-planning, interleaved thinking, GRPO, and recent code post-training methods.

This lets the model learn to think exactly where uncertainty appears.

Arthur Ostapenko retweeted

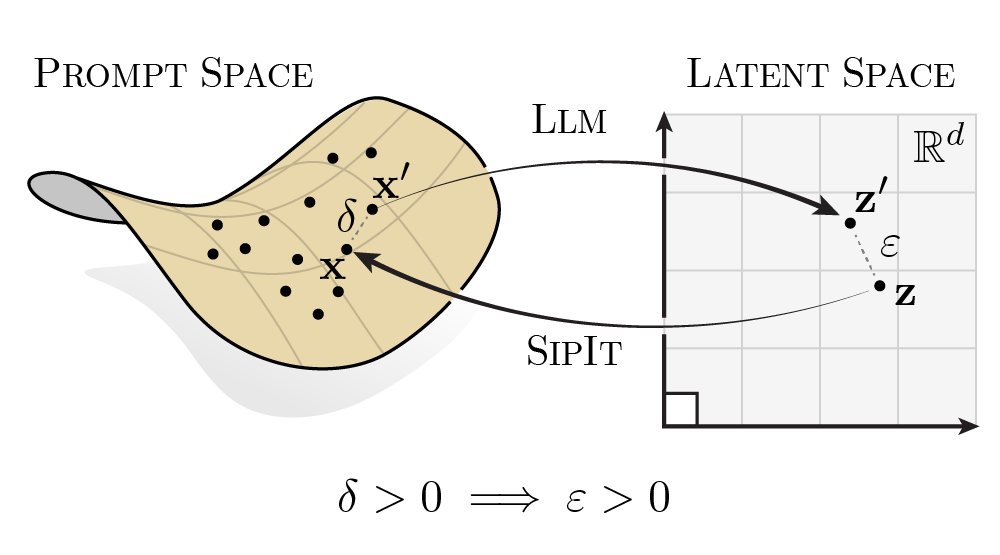

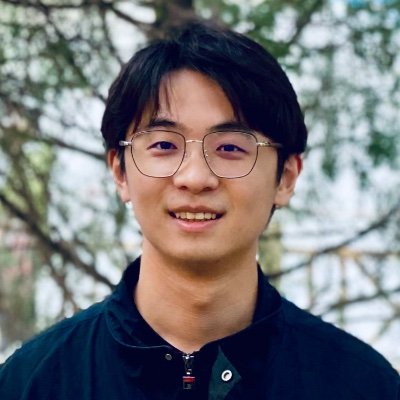

Simply adding Gaussian noise to LLM weights (one step: no iterations, no learning rate, no gradients) and ensembling the perturbed models can achieve performance comparable to or even better than standard GRPO/PPO on math reasoning, coding, writing, and chemistry tasks. We call this algorithm RandOpt.

To verify that this is not limited to specific models, we tested it on Qwen, Llama, OLMo3, and VLMs.

What's behind this? We find that in the Gaussian search neighborhood around pretrained LLMs, diverse task experts are densely distributed — a regime we term Neural Thickets.
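
The one-step recipe can be sketched on a toy problem. Everything below except "add Gaussian noise once, then ensemble" is our assumption for illustration: the "model" is a two-weight linear classifier rather than an LLM, candidates are scored on a small labeled set to pick the top k, and the ensemble combines members by majority vote.

```python
import random

Vector = list[float]
Dataset = list[tuple[Vector, int]]


def predict(weights: Vector, x: Vector) -> int:
    """Toy linear classifier: label 1 if w . x > 0, else 0."""
    return int(sum(w * xi for w, xi in zip(weights, x)) > 0)


def accuracy(weights: Vector, data: Dataset) -> float:
    return sum(predict(weights, x) == y for x, y in data) / len(data)


def randopt(base: Vector, data: Dataset, n_samples: int, k: int,
            sigma: float, seed: int = 0) -> list[Vector]:
    """One-shot search: sample Gaussian perturbations of `base`, keep the top k.

    No iterations, learning rate, or gradients: each candidate is base + N(0, sigma^2).
    """
    rng = random.Random(seed)
    candidates = [[w + rng.gauss(0.0, sigma) for w in base] for _ in range(n_samples)]
    candidates.sort(key=lambda cand: accuracy(cand, data), reverse=True)
    return candidates[:k]


def ensemble_predict(members: list[Vector], x: Vector) -> int:
    """Majority vote over the selected members (ties resolve to label 1)."""
    votes = sum(predict(m, x) for m in members)
    return int(2 * votes >= len(members))


# Hypothetical task: the label is 1 exactly when the first feature is positive.
data = [([1.0, 0.5], 1), ([2.0, -1.0], 1), ([-1.0, 0.3], 0), ([-2.0, -0.5], 0)]
base = [0.0, 0.0]  # stand-in for "pretrained" weights; predicts 0 everywhere
members = randopt(base, data, n_samples=200, k=5, sigma=1.0)
print(accuracy(base, data), [ensemble_predict(members, x) for x, _ in data])
```

In this toy, roughly a third of random directions already solve the task, which loosely mirrors the "densely distributed experts" intuition; whether that density holds for a given LLM is exactly what the paper investigates.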

Paper: arxiv.org/pdf/2603.12228

Code: github.com/sunrainyg/Rand…

Website: thickets.mit.edu

Arthur Ostapenko retweeted

What happens when you put competing neural networks in a Petri Dish and start changing the rules while they adapt?

Last year we released Petri Dish NCA, where neural nets are the organisms that learn during simulation. Today we're releasing Digital Ecosystems: a browser-based platform for interactive artificial life research.

The setup: several small CNNs share a 2D grid, each seeing only a 3x3 neighborhood. No global plan. They compete for territory by attacking neighbours and defending against incoming attacks, learning via gradient descent online while the simulation runs.
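
The locality constraint in this setup (each cell sees only its 3x3 neighbourhood, with no global plan) can be illustrated with a toy grid update. This is not the Digital Ecosystems code: the learned, gradient-trained CNNs are replaced by a fixed majority rule, and the wrap-around (toroidal) edges are our assumption.

```python
from collections import Counter

Grid = list[list[int]]  # each cell holds a species id; 0 means empty


def neighborhood(grid: Grid, r: int, c: int) -> list[int]:
    """The 3x3 patch centred on (r, c), wrapping around the edges (a torus)."""
    rows, cols = len(grid), len(grid[0])
    return [grid[(r + dr) % rows][(c + dc) % cols]
            for dr in (-1, 0, 1) for dc in (-1, 0, 1)]


def step(grid: Grid) -> Grid:
    """Synchronous update using only local information.

    Hand-written stand-in for the learned CNNs: each cell is claimed by the most
    common non-empty species in its 3x3 patch (ties go to the species seen first).
    """
    new_grid: Grid = []
    for r in range(len(grid)):
        row = []
        for c in range(len(grid[0])):
            counts = Counter(s for s in neighborhood(grid, r, c) if s != 0)
            row.append(counts.most_common(1)[0][0] if counts else 0)
        new_grid.append(row)
    return new_grid


# Usage: a single cell of species 1 spreads to its 3x3 neighbourhood in one step.
grid = [[0] * 5 for _ in range(5)]
grid[2][2] = 1
for line in step(grid):
    print(line)
```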

What we didn't expect was the role of the learning itself. Gradient descent isn't just optimising each species' strategy. Instead, it acts to stabilize the whole system during simulation. Species that overextend get pushed back by the loss. Species that stagnate get nudged to grow. This means you can push parameters toward edge-of-chaos regimes: a zone characterised by emergent complexity. Letting the neural networks learn acts to hold the complex system together while you explore and interact.

The platform lets you steer all of this interactively. You can draw walls to create niches, erase parts of the system online, and tune 40+ system parameters to explore the most interesting configurations. We find it mesmerizing to watch species carve out territories and reorganise when you perturb them.

Everything runs client-side in your browser, no install needed.

Blog: pub.sakana.ai/digital-ecosys…

Code: github.com/SakanaAI/digit…

Arthur Ostapenko retweeted

Hi! I'm here with *another launch*; it just happens to be extremely niche, nerdy, and probably only for a handful of people.

In the desktop app, Claude Cowork and Code now have a little Bluetooth API for makers & developers, allowing you to build hardware devices that interact with Claude.

I, for instance, built a little desk pet that alerts me whenever Claude is waiting for permission.

Arthur Ostapenko retweeted

ok actually insane paper published yesterday

a research group in Korea built a gene switch you can control wirelessly using electromagnetic fields

they exposed mice to 60 Hz EMF (same frequency as your wall outlet) using a pair of large coils that generate a uniform magnetic field around the animal, in cycles of 3 days on / 4 days off

they showed this could:

- activate OSK to do epigenetic reprogramming in progeroid and aged mice, extending lifespan and reversing aging markers across multiple tissues

- conditionally switch on mutant amyloid genes only in aged mouse brains, letting them separate aging effects from amyloid effects to study AD biology in a way previous models couldn't

no drugs, no implants, just a magnetic field from outside the body
