Pinned Tweet

alphaXiv

1.8K posts

Now available in addition to Gemini and Claude. Check out alphaXiv.org!

Yann LeCun and his team dropped yet another paper!

"V-JEPA 2.1: Unlocking Dense Features in Video Self-Supervised Learning"

In this V-JEPA upgrade, they show that making a video model predict every patch (not just the masked ones), and doing so at multiple layers, turns vague scene understanding into dense, temporally stable features that actually capture "what is where".

This key insight drove improvements in segmentation, depth, anticipation, and even robot planning.
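A minimal sketch of the contrast described above, assuming a toy setup: a masked-only loss scores predictions on masked patches at a single layer, while a dense multi-layer loss scores every patch at every supervised layer. The function names, the L1 distance, and the toy data are illustrative assumptions, not the paper's actual objective.

```python
def l1(a, b):
    """Mean absolute difference between two equal-length feature vectors."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def masked_only_loss(pred, target, masked):
    """Average patch loss over masked patches only, at a single layer."""
    idx = [i for i, is_masked in enumerate(masked) if is_masked]
    return sum(l1(pred[i], target[i]) for i in idx) / len(idx)


def dense_multilayer_loss(preds_by_layer, targets_by_layer):
    """Average patch loss over every patch at every supervised layer."""
    losses = [
        l1(p, t)
        for pred, target in zip(preds_by_layer, targets_by_layer)
        for p, t in zip(pred, target)
    ]
    return sum(losses) / len(losses)


# Toy data: 3 patches with 2-d features; the third patch is visible (unmasked).
target = [[0.0, 0.0], [1.0, 1.0], [3.0, 3.0]]
pred = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]  # wrong only on the visible patch
masked = [True, True, False]

# The masked-only loss never looks at the visible patch.
sparse = masked_only_loss(pred, target, masked)
# The dense loss covers all patches at two (hypothetical) supervised layers.
dense = dense_multilayer_loss([target, pred], [target, target])
print(sparse, dense)
```

In this toy case the masked-only loss cannot see the error on the visible patch, while the dense loss penalizes it, which is one intuition for why dense supervision could sharpen per-patch features.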

@askalphaxiv very cool! You should submit this to Claude's MCP directory: claude.com/connectors

alphaXiv retweeted

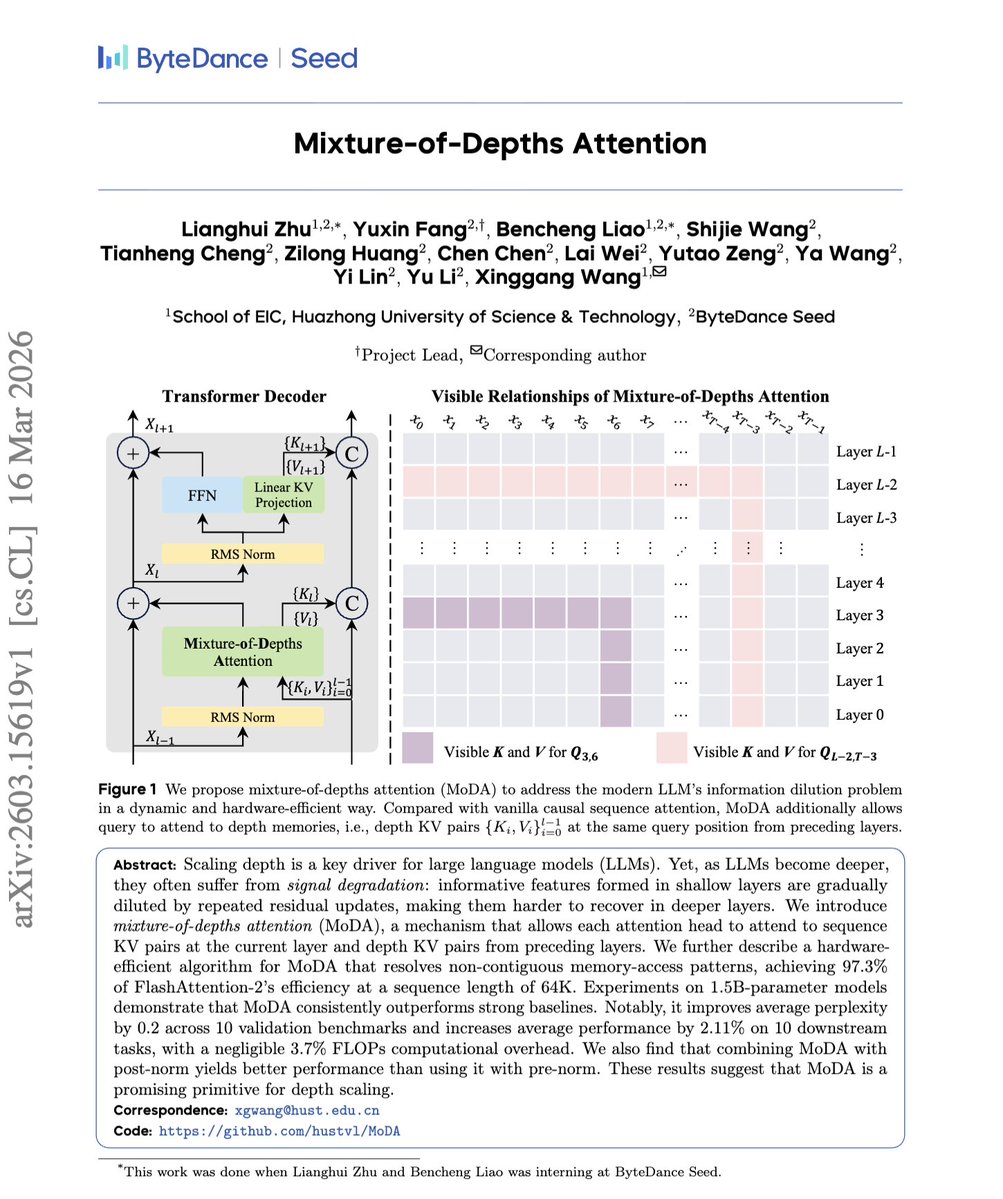

"Mixture-of-Depths Attention"

This paper teaches a Transformer to attend not just across tokens, but also across depth, to the keys and values (KV) produced by its own earlier layers.

That helps recover shallow-layer signals that standard residual stacking tends to dilute, improving performance with only a small extra compute cost.

Similar idea to Kimi’s Attention Residuals, but MoDA modifies the attention module itself, while AttnRes changes the residual/depth aggregation path.
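A rough sketch of the idea as described above, assuming a single head and a single query: the query scores keys from the current layer's tokens and keys from earlier layers ("depth KV") in one softmax. The helper names and the choice to concatenate the two sets are assumptions for illustration, not the paper's exact formulation.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def token_and_depth_attention(query, token_kv, depth_kv):
    """Scaled dot-product attention over token KV and depth KV jointly.

    token_kv: (key, value) pairs from the current layer's sequence positions.
    depth_kv: (key, value) pairs from earlier layers (hypothetical layout).
    """
    pairs = list(token_kv) + list(depth_kv)
    scale = 1.0 / math.sqrt(len(query))
    weights = softmax([dot(query, k) * scale for k, _ in pairs])
    dim = len(pairs[0][1])
    out = [sum(w * v[d] for w, (_, v) in zip(weights, pairs)) for d in range(dim)]
    return out, weights


# Toy example: the query matches a shallow-layer key better than any token key.
query = [1.0, 0.0]
token_kv = [([0.0, 1.0], [0.0, 0.0]), ([0.0, 1.0], [0.0, 0.0])]
depth_kv = [([4.0, 0.0], [1.0, 1.0])]
out, weights = token_and_depth_attention(query, token_kv, depth_kv)
print(out, weights)
```

Here most of the attention mass lands on the depth entry, so the shallow-layer value is recovered directly instead of being diluted through the residual stream.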

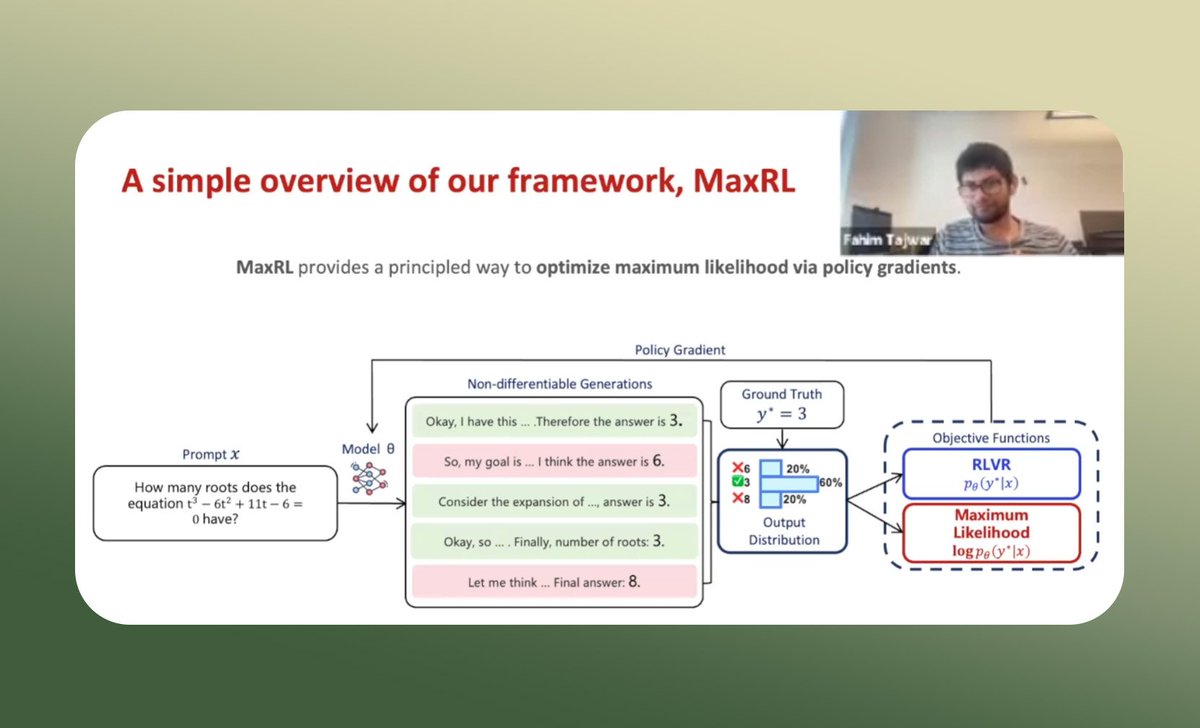

The current RL setup might not be the right objective for reasoning models

In our latest AI4Science talk, Fahim (@FahimTajwar10), PhD student at CMU, presented “Maximum Likelihood Reinforcement Learning”, a new framework for binary-reward tasks like math reasoning, navigation, and program synthesis.

The core idea is simple: standard RL mainly optimizes pass@1, which can over-focus on easy prompts and underuse rare correct rollouts from hard ones.

MaxRL instead approximates a maximum-likelihood objective, so the model learns more effectively from those sparse successes.

What makes this especially interesting is that the method seems to preserve much more solution diversity. Across the experiments discussed in the talk, MaxRL led to stronger pass@k, less diversity collapse, and better inference efficiency than standard REINFORCE or GRPO, especially when sampling multiple rollouts matters.

A very interesting talk if you’re into reasoning LLMs and RL!
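One way to see the difference between the two objectives, as a sketch rather than the paper's estimator: for a prompt with success probability p, maximizing p has gradient ∇p, while maximizing log p has gradient ∇p / p, so rare successes on hard prompts are upweighted by 1/p. With N rollouts and K successes, plugging in the estimate p ≈ K/N turns the usual 1/N weight on each successful rollout into 1/K. The function names below are hypothetical.

```python
def pass_at_1_rollout_weights(rewards):
    """Per-rollout weights on grad log pi(y) when maximizing p (REINFORCE, no baseline)."""
    n = len(rewards)
    return [r / n for r in rewards]


def max_likelihood_rollout_weights(rewards):
    """Per-rollout weights when maximizing log p, using the plug-in estimate p = K / N.

    This is a simple (biased) ratio estimator used only for illustration.
    """
    k = sum(rewards)
    if k == 0:
        return [0.0] * len(rewards)  # no successes: no learning signal either way
    return [r / k for r in rewards]


# A hard prompt (1 success in 4 rollouts) and an easy prompt (3 successes in 4).
hard = [0, 0, 0, 1]
easy = [1, 1, 1, 0]

hard_rl = sum(pass_at_1_rollout_weights(hard))
easy_rl = sum(pass_at_1_rollout_weights(easy))
hard_ml = sum(max_likelihood_rollout_weights(hard))
easy_ml = sum(max_likelihood_rollout_weights(easy))
print(hard_rl, easy_rl, hard_ml, easy_ml)
```

Under the pass@1 weighting the easy prompt contributes three times the total weight of the hard one; under the log-likelihood weighting both contribute equally, which matches the intuition that sparse successes on hard prompts are no longer drowned out.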

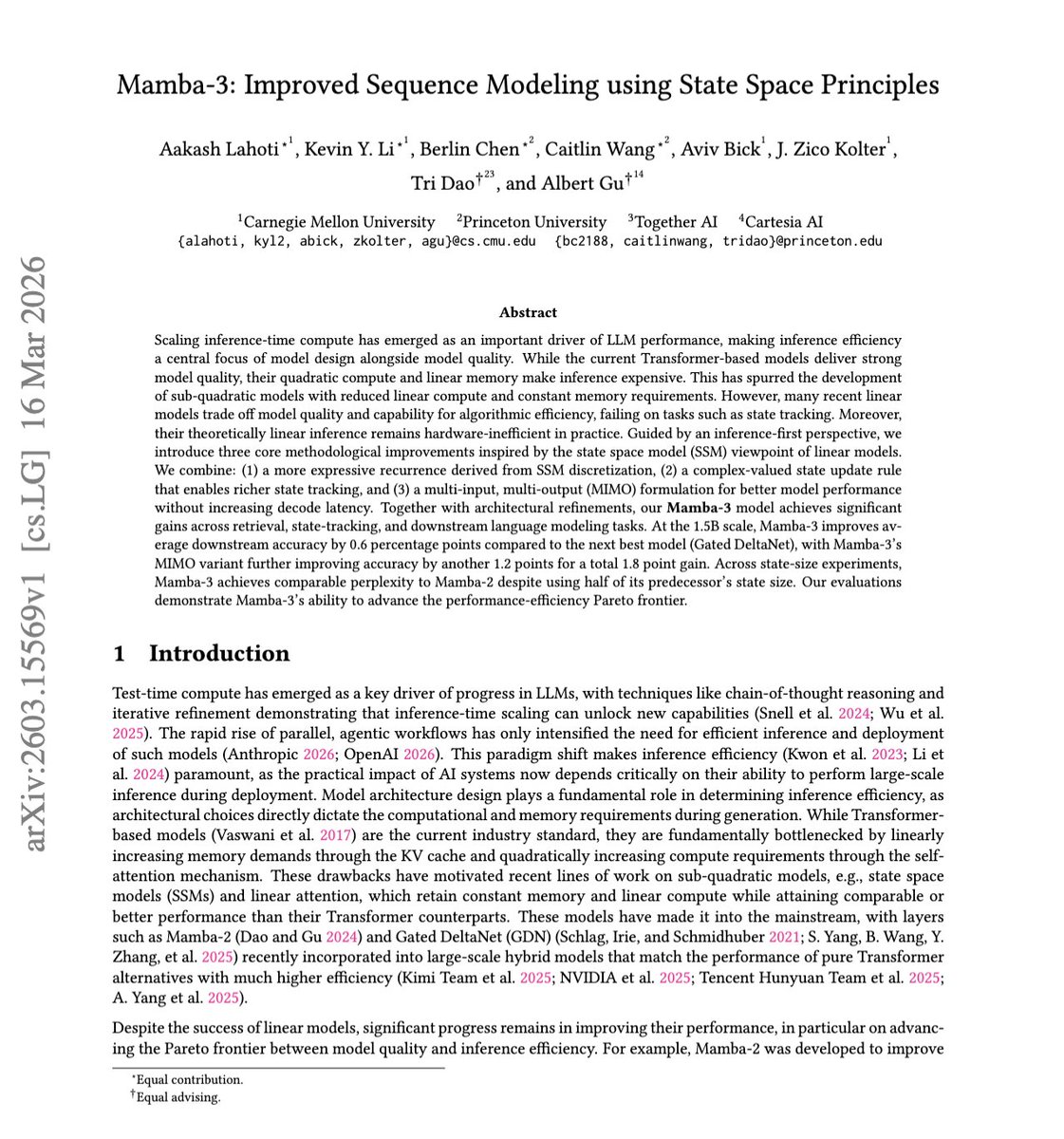

Mamba 3: Mamba with RoPE!

"Improved Sequence Modeling using State Space Principles"

They show that state space models can have both speed and performance!

In this new iteration, Mamba now has better recurrence design, complex-valued state tracking, and a MIMO update.

It matches or beats prior Mamba-style models (Mamba 2 and Gated DeltaNet) on both language and retrieval tasks, while keeping memory constant and sustaining fast decoding.
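One way to read "Mamba with RoPE" alongside "complex-valued state tracking", sketched under simplifying assumptions: multiplying a complex state by a unit-modulus number is the same as rotating a pair of real features, which is the operation rotary embeddings apply. The scalar recurrence, the fixed `decay` and `theta`, and the function names below are illustrative and are not the paper's parameterization.

```python
import cmath
import math


def complex_ssm_step(h, x, decay, theta):
    """One recurrent step with a complex scalar state: h <- decay * e^{i theta} * h + x."""
    return decay * cmath.exp(1j * theta) * h + x


def rotation_ssm_step(h2, x, decay, theta):
    """The same step written as a 2-d real state rotated by angle theta."""
    re, im = h2
    c, s = math.cos(theta), math.sin(theta)
    return (decay * (c * re - s * im) + x, decay * (s * re + c * im))


def run(xs, decay=0.9, theta=0.5):
    """Process a sequence with both forms; the state size never grows with length."""
    h, h2 = 0j, (0.0, 0.0)
    for x in xs:
        h = complex_ssm_step(h, x, decay, theta)
        h2 = rotation_ssm_step(h2, x, decay, theta)
    return h, h2


h, h2 = run([1.0, -0.5, 2.0, 0.25])
print(h, h2)
```

Both forms carry a fixed-size state from step to step, which is where the constant-memory decoding of recurrent models comes from, in contrast to a KV cache that grows with sequence length.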

can AI do real math research?

"HorizonMath: Measuring AI Progress Toward Mathematical Discovery with Automatic Verification"

This paper turns a vague debate into a measurable test by introducing a contamination-resistant benchmark of 101 mostly unsolved problems across 8 domains in math.

The solutions are genuinely unknown, but answers can still be checked automatically, so it gives a scalable way to measure whether AI is moving beyond solving known textbook problems toward actual mathematical discoveries.

The paper shows that today’s frontier models still score near zero overall, while GPT 5.4 Pro is the only evaluated model to produce two potentially novel improvements on published baselines, suggesting early signs of progress.

correction:

The talk starts at 12pm PT, March 18th!

alphaXiv @askalphaxiv

RL Isn’t Actually Optimizing What We Think, And That’s a Problem

Come join us for this AI4Science talk: Maximum Likelihood Reinforcement Learning (MaxRL).

In this session, the author of MaxRL @FahimTajwar10 will cover their paper that takes a step back and asks a fundamental question: when we use RL for tasks like reasoning, coding, or navigation, are we even optimizing the right objective?

Whether you’re working on RL, LLM reasoning, or just curious about how training objectives shape model behavior, this is one to check out.

🗓 Wednesday March 18th 2026 · 10AM PT
🎙 Featuring Fahim Tajwar
💬 Casual Talk + Open Discussion

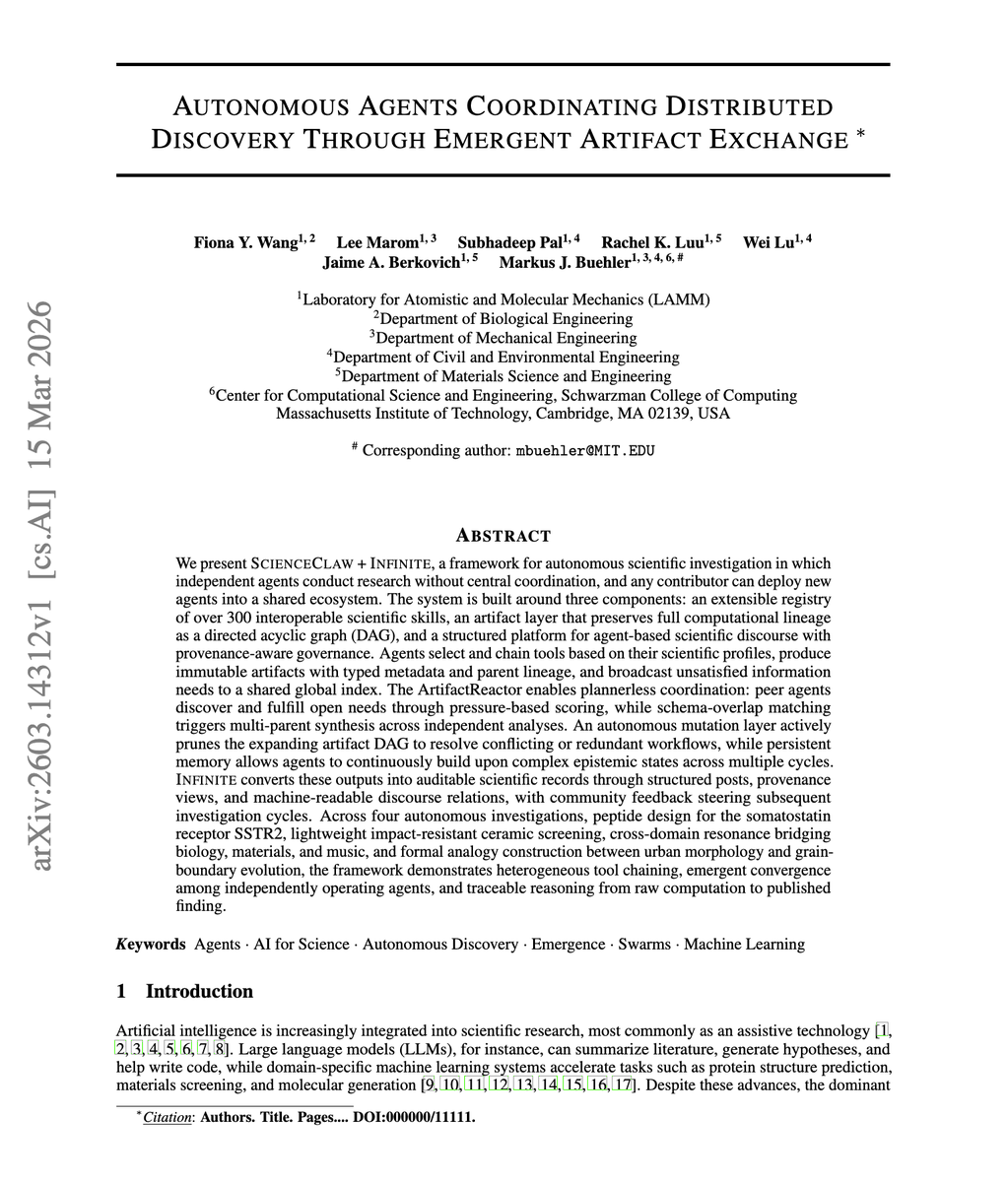

"Autonomous Agents Coordinating Distributed Discovery Through Emergent Artifact Exchange"

This paper shows how to turn AI from a single helpful assistant into a decentralized scientific lab.

Many autonomous agents independently run tools, exchange traceable research artifacts, and converge on new discoveries without a central boss!

This approach seems more practical because it points toward scalable, auditable crowdsourced science by machines, rather than relying on one giant model or a rigid top-down coordinator to manage the whole research loop.

This matters because it makes autonomous science more parallel, modular, and inspectable, and brings it closer to how real scientific communities work.

Add to Claude Code: claude mcp add --transport http alphaxiv api.alphaxiv.org/mcp/v1 (and then run /mcp in claude to authenticate)

Docs: alphaxiv.org/docs/mcp

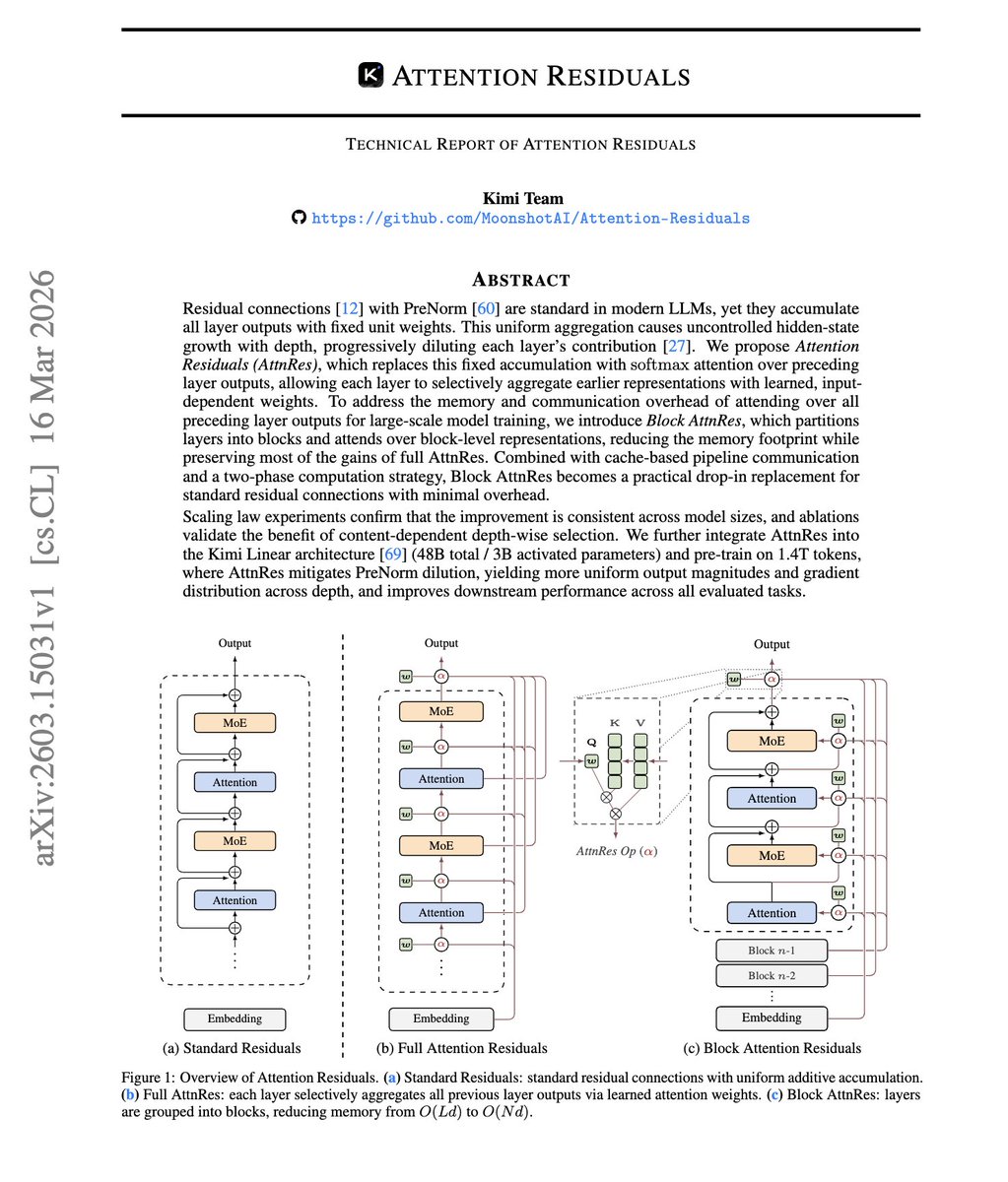

“Attention Residuals” is now available on AlphaXiv!

In a standard transformer, every layer just inherits an equally weighted sum of all earlier layers' outputs, so as models get deeper, useful computations get diluted instead of being selectively reused.

The research team at @Kimi_Moonshot proposes Attention Residuals, which fixes this by letting each layer attend over previous layers with learned weights, so depth works more like retrieval than accumulation.

This makes training more stable and improves scaling and downstream performance with almost no extra overhead: specifically, a 1.25x compute advantage over the standard transformer baseline.
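A minimal sketch of the contrast described above, under simplifying assumptions: a standard residual stream hands each layer the plain sum of earlier outputs, while an attention-style residual mixes them with softmax weights from a learned query. The `query` vector, the unscaled dot-product scoring, and the function names are hypothetical and not the paper's exact design.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def standard_residual(layer_outputs):
    """Equal-weight accumulation: every earlier output is simply added."""
    dim = len(layer_outputs[0])
    return [sum(o[d] for o in layer_outputs) for d in range(dim)]


def attention_residual(layer_outputs, query):
    """Softmax-weighted mix of earlier outputs, scored against a learned query."""
    scores = [sum(q * x for q, x in zip(query, o)) for o in layer_outputs]
    weights = softmax(scores)
    dim = len(layer_outputs[0])
    mixed = [sum(w * o[d] for w, o in zip(weights, layer_outputs)) for d in range(dim)]
    return mixed, weights


# Outputs of three earlier layers (toy 2-d features).
outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

summed = standard_residual(outputs)
# A zero query scores every layer equally, so the mix is a plain average.
uniform_mix, uniform_w = attention_residual(outputs, [0.0, 0.0])
# A query aligned with the first layer's output retrieves mostly that layer.
selective_mix, selective_w = attention_residual(outputs, [5.0, -5.0])
print(summed, uniform_mix, selective_w)
```

With a zero query the mix collapses to a uniform average of earlier layers; a non-trivial query lets a layer pull mostly from the earlier output it needs, which is the "retrieval rather than accumulation" behavior the post describes.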
