Al Shao

@dmvaldman I still think that it had some explanations of this joke in its corpus, but at the very least it's able to extend basic variations of logic pretty well.

Excited to present our work "Squeezeformer: An Efficient Transformer for Automatic Speech Recognition" at NeurIPS 2022 in New Orleans tomorrow, Thursday, December 1st!

Stop by our poster from 11 AM to 1 PM (CST) in Hall J, Poster #620.

nips.cc/Conferences/20…

#NeurIPS2022

@quocleix @tanmingxing This is really great work! I have a question about one detail. I was looking at the Lite R-ASPP network design and was curious about the pooling layer's stride. Why is it set to output a different aspect ratio instead of staying 1:1 with the input? Thanks!

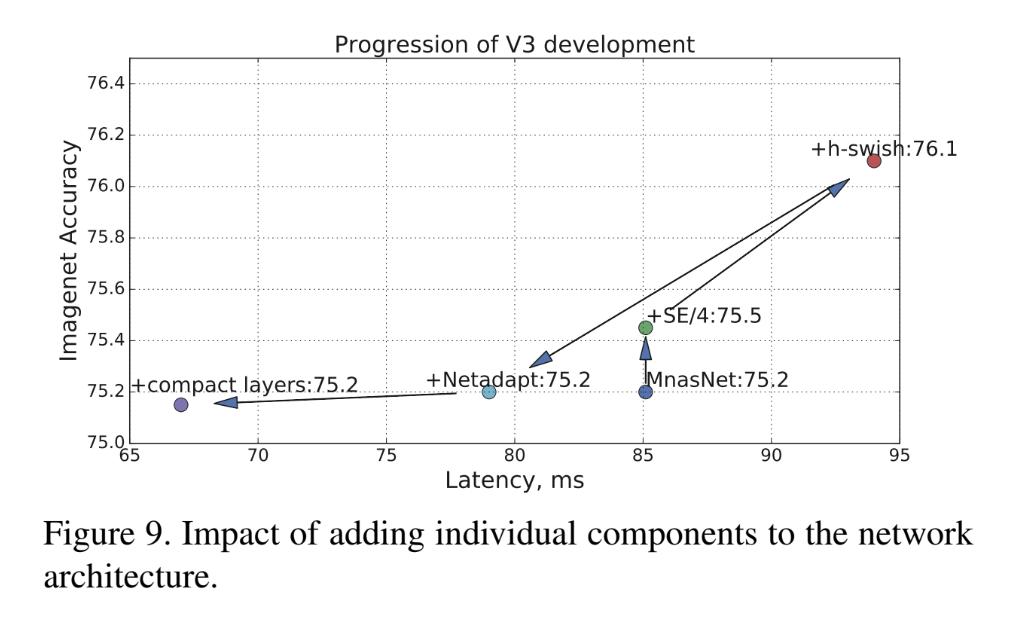

Introducing MobileNetV3: Based on MNASNet and found by architecture search, with additional methods applied to go even further (quantization-friendly SqueezeExcite & Swish + NetAdapt + compact layers). Result: 2x faster and more accurate than MobileNetV2. Link: arxiv.org/abs/1905.02244
