Ashesh

@ashesh0

Assistant Professor, CSE, Ashoka University

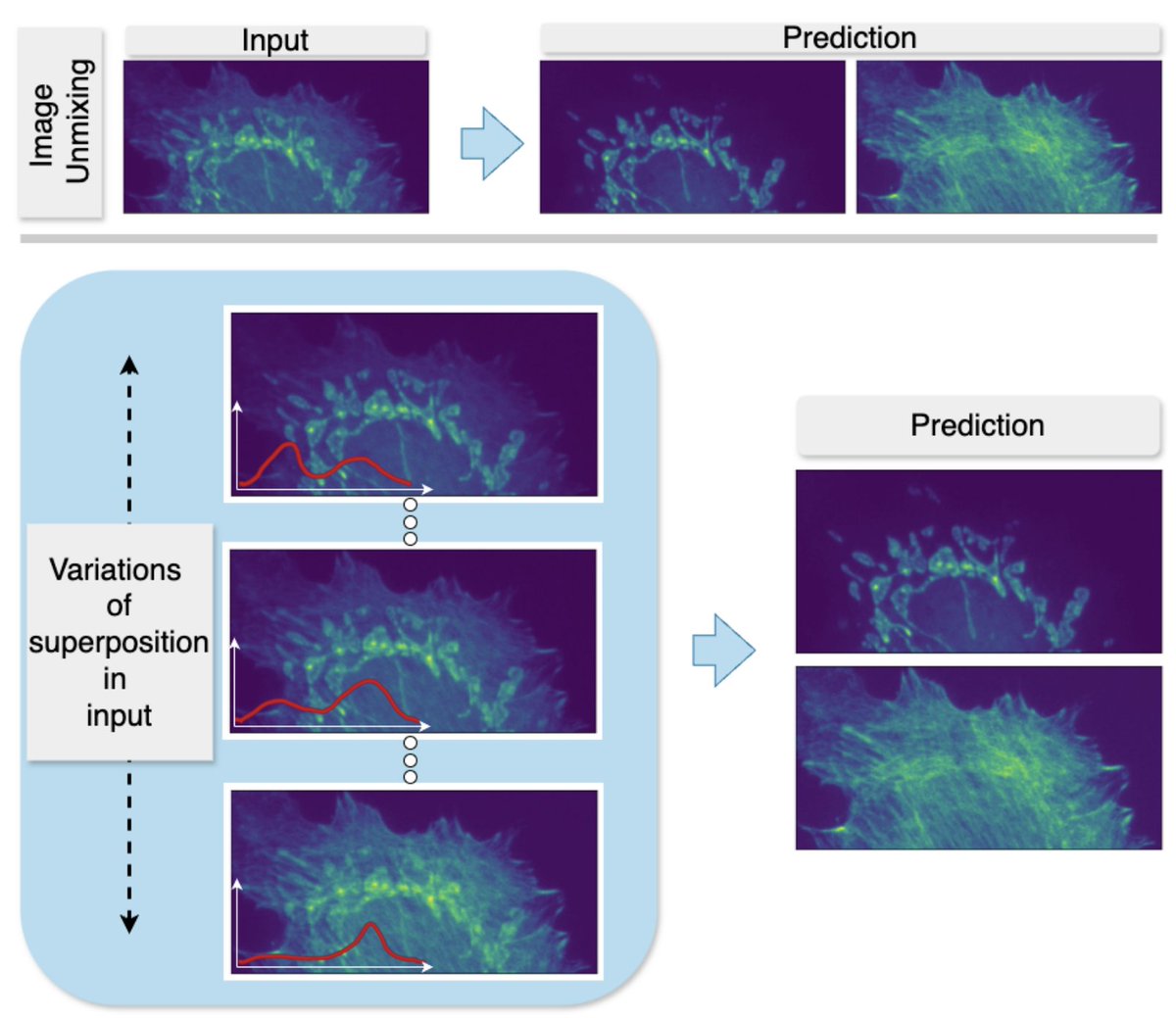

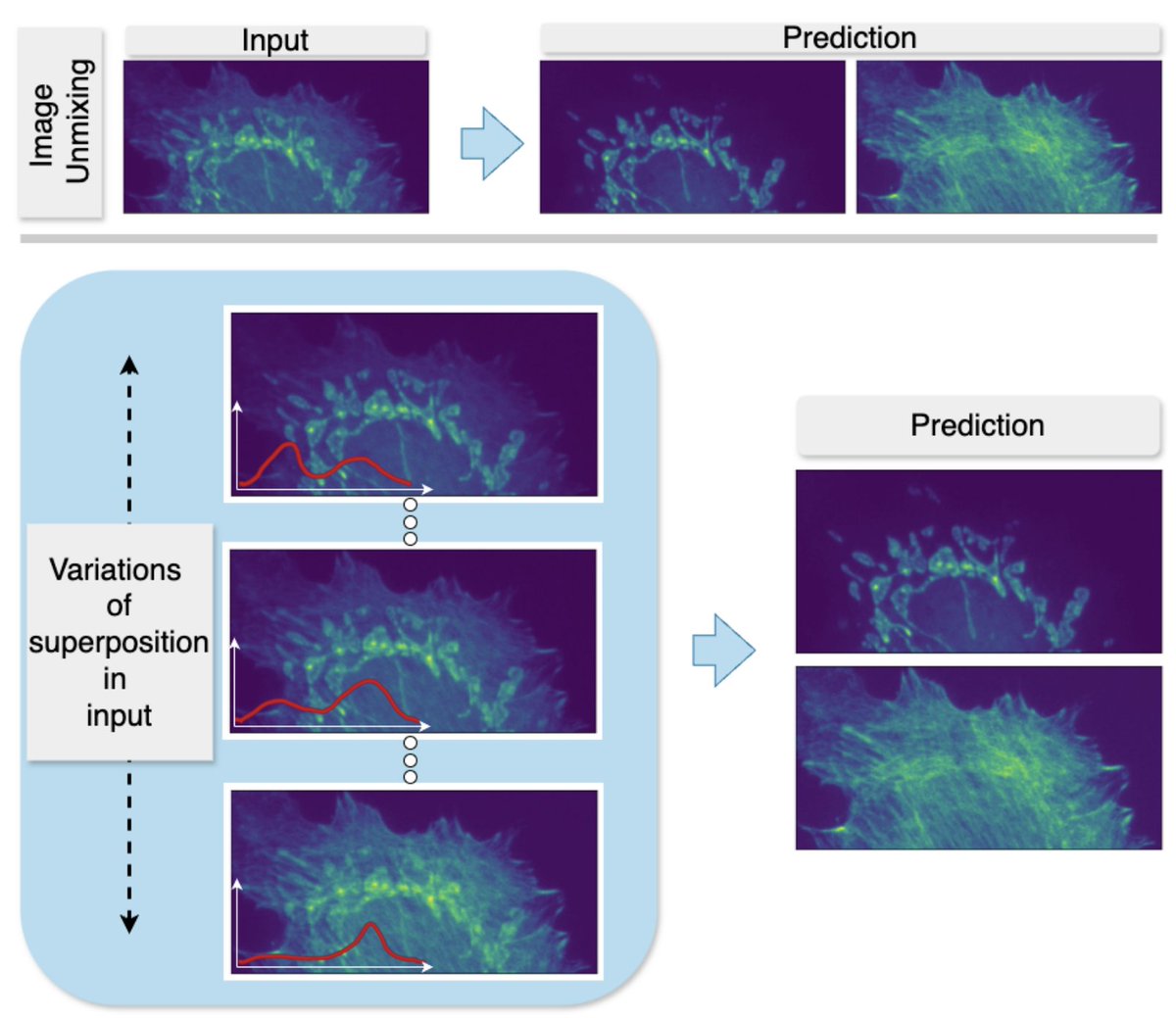

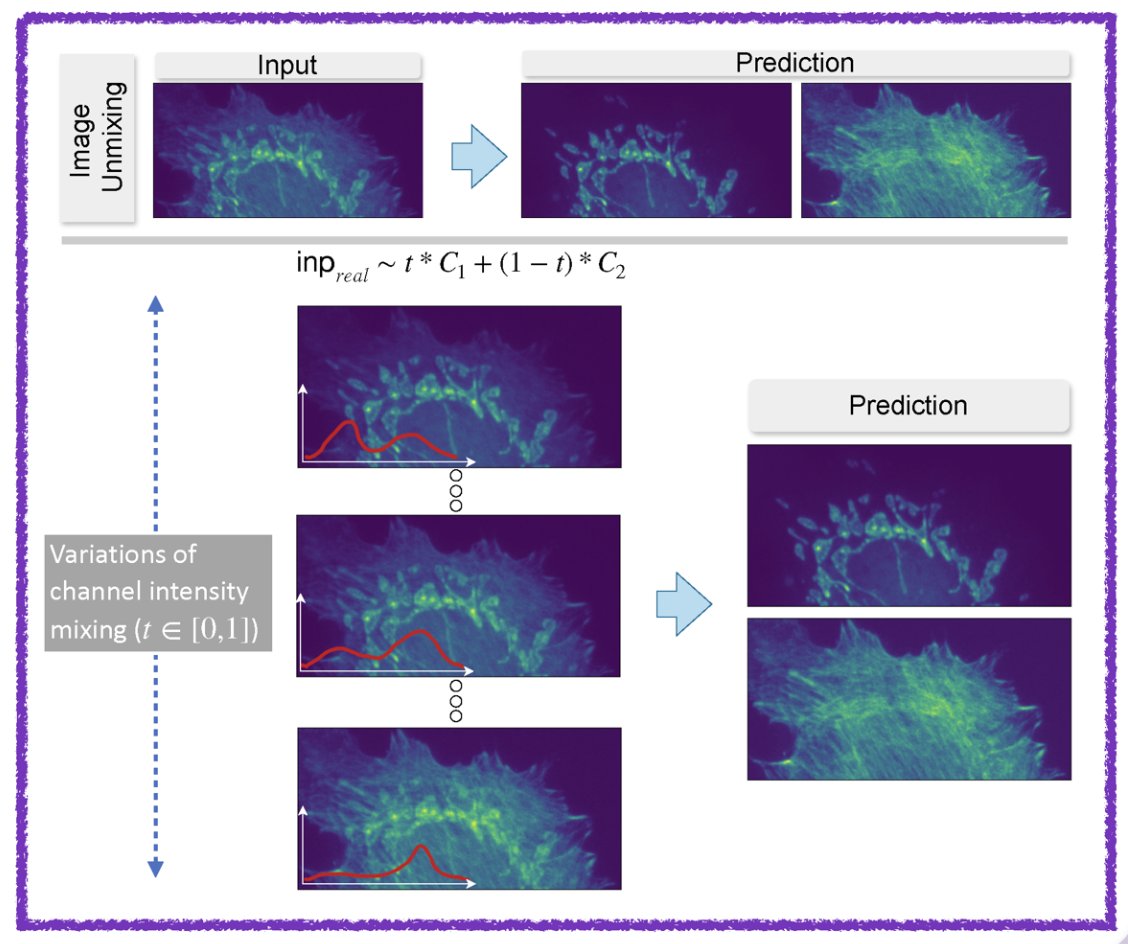

MicroSplit: a computational unmixing method for multiplexed fluorescence imaging of up to 4 structures per imaging channel. @ashesh0 @florianjug nature.com/articles/s4159…

YIM 2026 is just 3 days away — and as we count down, here’s an inspiring #JOYI2026 story. From a remote village in Haryana to leading a lab at @IISERPune — @Saritapuri24’s journey to science was anything but conventional. With no academic role models growing up, she built her path through persistence, mentorship, and self-belief. #JOYI2026 Read 👉 buff.ly/F4um47v @LaStatale @IBCSinica @IITDelhi

#researcherspotlight @Saritapuri24, along with Sharvari Palkar, Ishaan Chaudhary and Basudha Patel from IISER Pune, talks about their lab's first work, "How Amyloid Fibrils Form Diverse Structures in AL Amyloidosis", published in JMB #amyloid biopatrika.com/academia/resea… @biopatrika

APPLICATIONS are NOW OPEN for the Jan 2026 PhD intake at the Department of Biology, @IISERPune. Submission deadline: 5 pm, Oct 23, 2025. For details, check the link below: iiserpune.ac.in/education/admi…

Our work on generalizing across variations in the strength of structures within superimposed images—an issue relevant for semantic unmixing and bleed-through removal in fluorescence microscopy—has been accepted at NeurIPS 2025! (arxiv.org/abs/2503.22983) @florianjug